Your iPhone is on the iOS 16 compatibility list, yet some features still won’t work? The following nine new features in iOS 16 require at least an iPhone XS or iPhone XR.

9 features in iOS 16 that require at least iPhone XS/XR

iOS 16 is packed with great features and a bunch of delightful little improvements, but not all of them work on every iPhone that’s capable of running iOS 16.

We’ve counted nine specific areas of improvement in iOS 16 that require at least the A12 Bionic chip found in the iPhone XS and iPhone XR, both released in 2018.

iOS 16 also includes two features exclusive to the iPhone 13 lineup and two features for iPhones and iPads with LiDAR. So if you’re still holding onto that rusty old iPhone X, iPhone 8 or iPhone 8 Plus, now is the right time to upgrade.

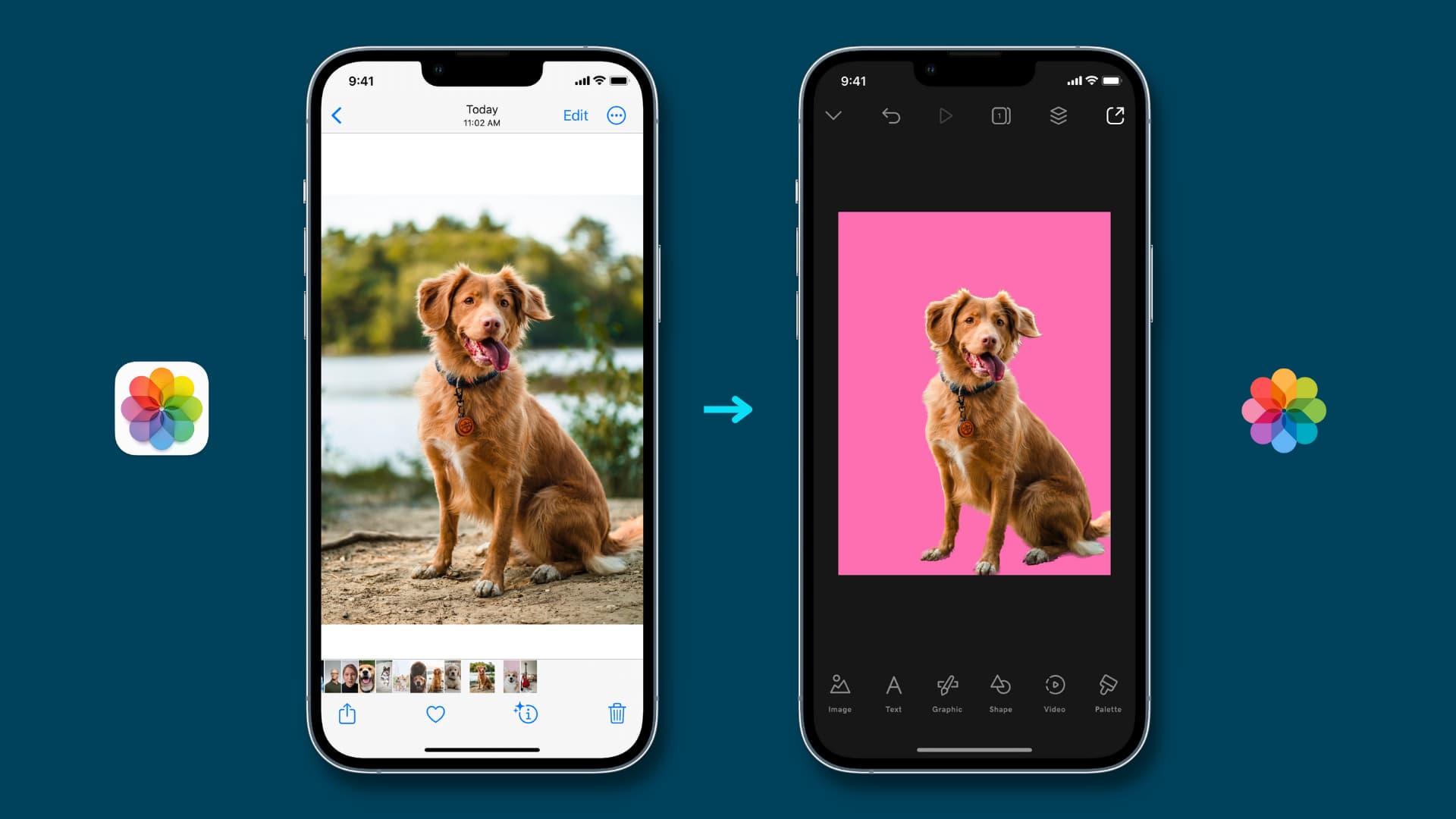

1. Removing a photo’s subject from its background

In iOS 16’s Photos app, Safari, Quick Look and elsewhere, you can lift a photo’s subject from its background by touching and holding it. This feature requires at least the A12 Bionic chip (iPhone XS, iPhone XR).

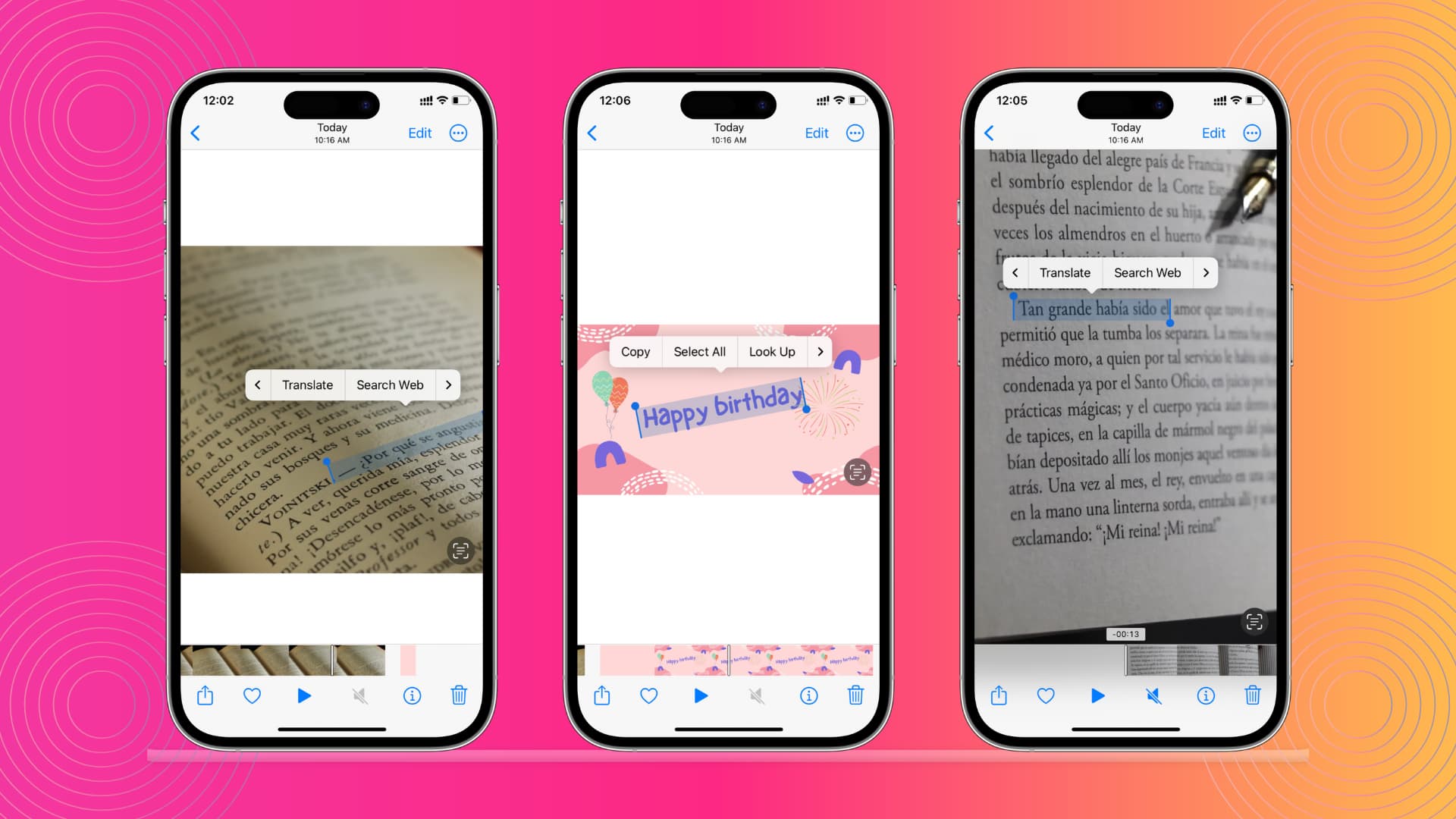

2. Live Text for video

The impressive Live Text feature gains several improvements in iOS 16, and one of the best is the ability to copy text from any video.

Simply pause a video and you can instantly copy, translate, look up or share the text on screen, among other things. This is great for, say, copying recipes, code examples or even whole lectures from videos. But you’ll need an iPhone XS or newer to grab text from video.

Another consideration: this feature is currently restricted to English, Chinese, French, Italian, German, Japanese, Korean, Portuguese, Spanish and Ukrainian text.

3. Quick actions for Live Text

Live Text in iOS 16 brings support for the Quick Actions feature you already know and love from the Files app and the Mac’s Finder.

Simply select a piece of text on an image and up pops a contextual menu filled with appropriate actions. Depending on what’s selected, you can use quick actions to call phone numbers, visit websites, convert currencies, translate languages and more.

But if your iPhone was released before 2018 (iPhone X, iPhone 8 or iPhone 8 Plus), you won’t see any quick actions. As with Live Text for video, you can only perform quick actions on English, Chinese, French, Italian, German, Japanese, Korean, Portuguese, Spanish or Ukrainian text.

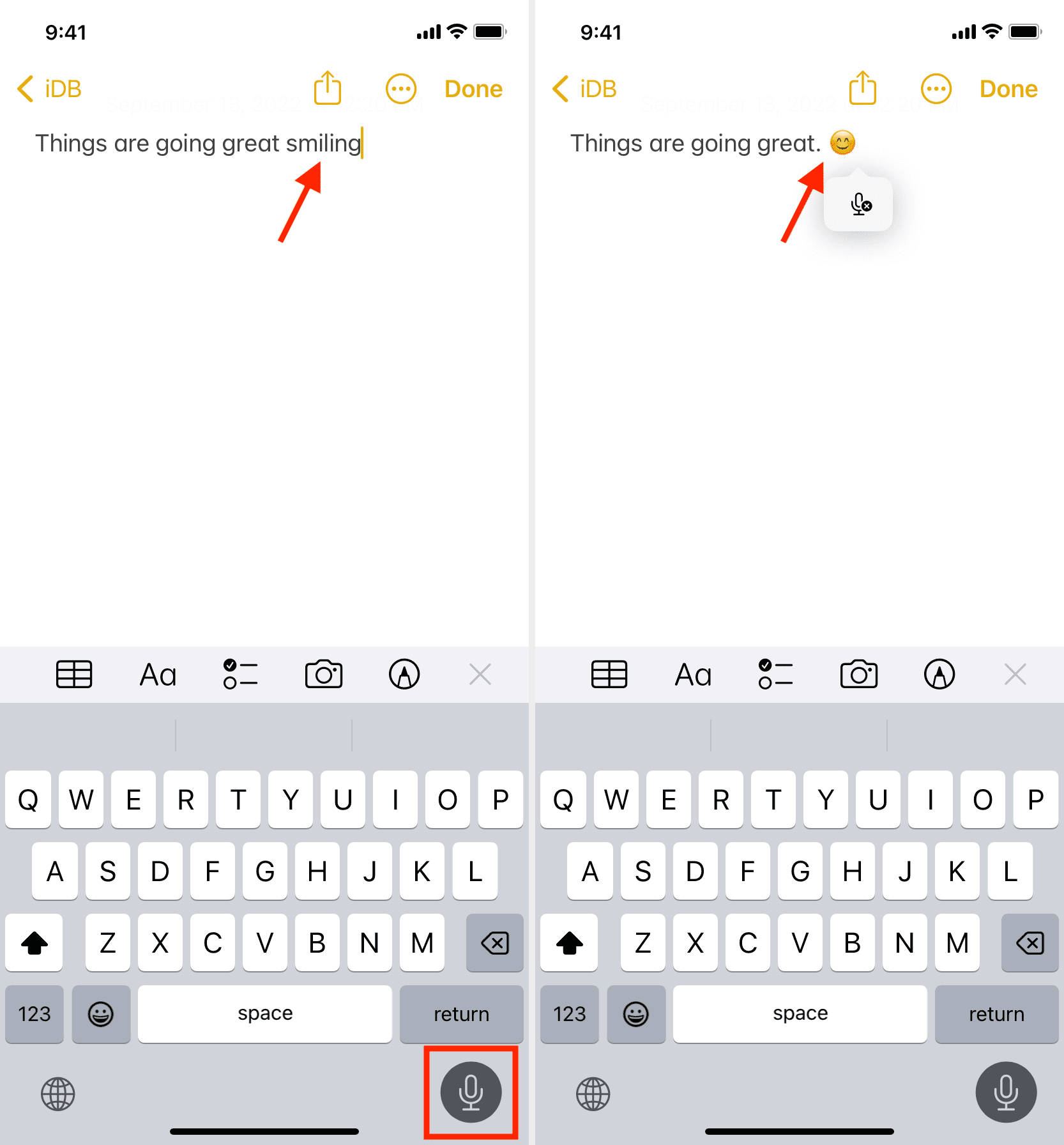

4. Emoji dictation

Dictation in iOS 16 gains several functional improvements, including automatic punctuation and the ability to insert emoji with your voice.

Both features rely on Siri intelligence, but inserting emoji with your voice only works on devices powered by the A12 Bionic chip or later. In other words, you’ll need to run iOS 16 on an iPhone XS or newer to use emoji in Dictation.

5. Expanded Siri offline support

iOS 15 brought basic support for a handful of offline Siri requests, such as setting alarms and timers, reading messages and toggling Wi-Fi and Bluetooth.

iOS 16 expands the range of requests Siri can handle without an internet connection. You can now ask Siri to control your smart home devices, use the Intercom feature or access voicemail even when your iPhone is offline. You guessed it: offline Siri requests require an iPhone XS or newer.

6. Inserting emoji via Siri

Emoji dictation extends to Siri in iOS 16, letting you insert the right emoji when sending messages through the assistant. The same caveat applies: you’ll need at least an iPhone XS to dictate emoji in your texts with Siri.

7. Hanging up calls with Siri

Siri can finally hang up the current call on your behalf. Just say “Hey Siri, hang up” during a call in the Phone or FaceTime app and the assistant will do just that.

This feature must be turned on manually in Settings, but you won’t see the toggle if your iPhone isn’t powered by an A12 Bionic chip or later. Without at least an iPhone XS, this new Siri feature is off limits.

8. “Hey Siri, what can I do here?”

Apple’s assistant in iOS 16 provides useful information about the features of your iPhone and installed apps. Saying something along the lines of “Hey Siri, what can I do here?” pulls up an informational panel highlighting what you can do with the feature you’re using. You can also ask about a specific app by saying “Hey Siri, what can I do with Spark?”

9. Image search in more apps

The Photos app detects scenes and objects in your photos, allowing you to search by people, scenes and so on. iOS 16 brings that smart search capability for images to Spotlight sources such as Messages, Notes and Files.

Now you can unearth images from those apps in Spotlight results by searching for locations, people, scenes or even things in the images, such as text, a dog or a car. This only works with images stored locally on your device, and only on iPhone models with the A12 Bionic chip or later (iPhone XS and iPhone XR).

4 features in iOS 16 that require the latest hardware

Two new features in iOS 16 and iPadOS 16 require a device with Apple’s LiDAR sensor on the back and won’t work on models without one. On top of that, two specific photography improvements are tied to Apple’s latest imaging hardware.

1. Detection mode in the Magnifier app

This new mode in the Magnifier app in iOS 16 combines door detection and people detection with image descriptions (each can be used alone or simultaneously).

This makes for a cool accessibility feature that helps blind and low-vision users get rich descriptions of their surroundings. Detection mode relies on LiDAR, meaning it only works on the iPhone 12 Pro, iPhone 12 Pro Max, iPhone 13 Pro, iPhone 13 Pro Max and recent iPad Pro models.

2. Door detection in the Magnifier app

Another new accessibility feature in iOS 16’s Magnifier lets you locate a door, read signs or labels around it and even get instructions on how to open it. This requires a LiDAR sensor, which is only available on the Pro-branded iPhone 12 and iPhone 13 models and newer iPad Pro models.

3. Foreground blur in portrait photos

Portrait mode in iOS 16’s Camera app lets you blur objects in a photo’s foreground, creating a depth-of-field effect that looks more realistic than before. This requires an iPhone 13 mini, iPhone 13, iPhone 13 Pro or iPhone 13 Pro Max.

4. Sharper Cinematic mode

When shooting video in Cinematic mode, Apple’s signature depth-of-field effect is more accurate in iOS 16 for “profile angles and around the edges of hair and glasses.” But of course, you’ll need an iPhone 13 mini, iPhone 13, iPhone 13 Pro or iPhone 13 Pro Max to record in Cinematic mode.

Why do some iOS 16 features require newer iPhones?

The short answer: some features depend on specific hardware, such as a LiDAR sensor, or need a newer chip to perform well.

iOS 16 is Apple’s first major update since iOS 13 to drop support for older devices, in this case those powered by the A9 and A10 Fusion chips. Right out of the gate, that leaves the iPhone 6s and iPhone 7 series out in the cold.

Put another way, iOS 16 won’t run on devices that lack a Neural Engine. Apple debuted its Neural Engine coprocessor for machine learning tasks in the A11 Bionic chip, which powers its 2017 phones: the iPhone X, iPhone 8 and iPhone 8 Plus.

Simply put, iOS targets specific chipsets rather than specific devices. So it’s no wonder that some of the more sophisticated features require newer chipsets (A12 Bionic and up) than the bare minimum required to run iOS 16 (A11 Bionic).