Apple this week revealed a new initiative meant to help protect children. The Expanded Protections for Children effort is threefold, with each feature baked into Apple’s major platforms. Two of the new additions appear to be going over well enough, but the third, photo scanning, has ruffled a lot of feathers.

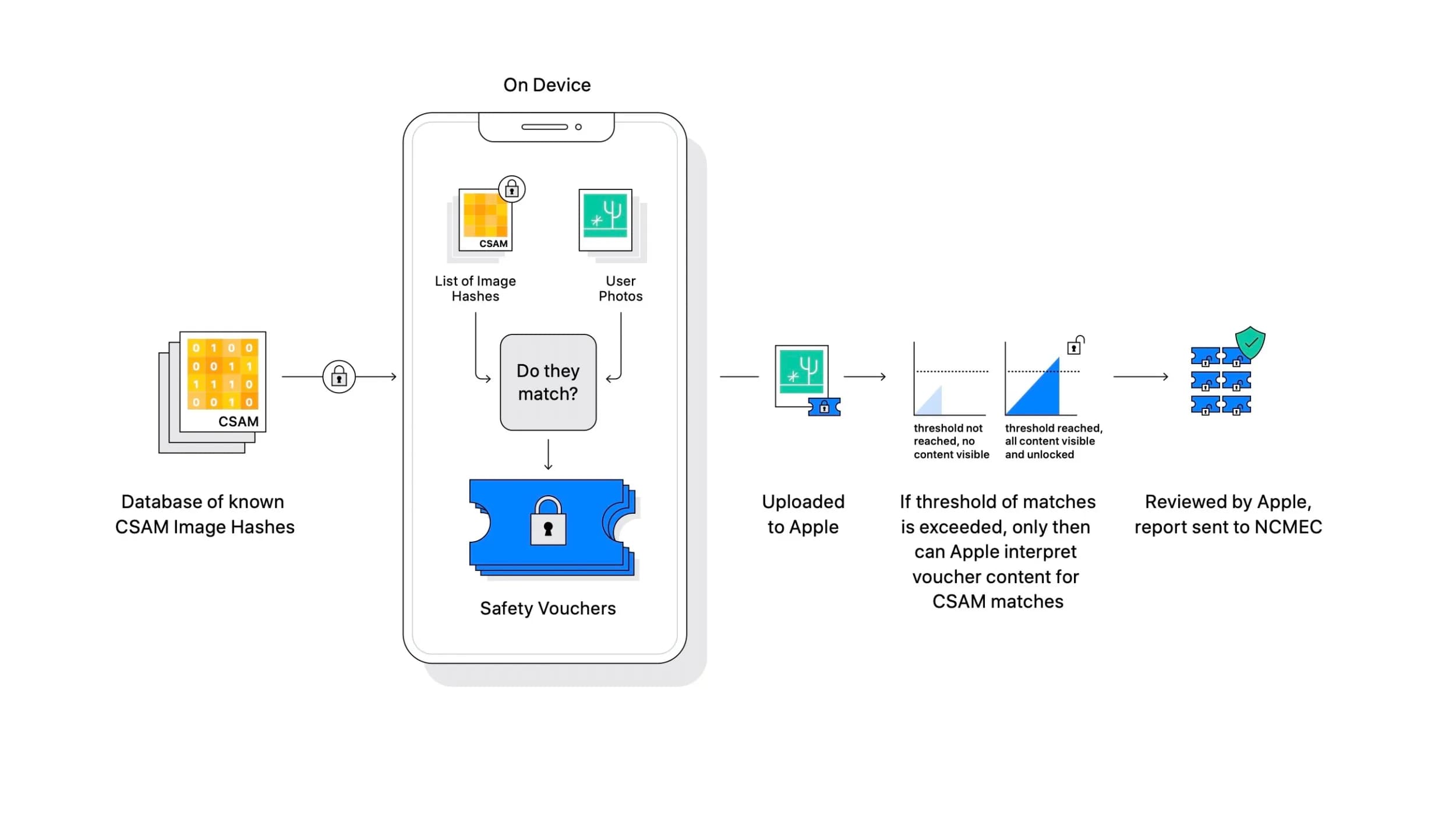

Today, Apple provided comments to MacRumors to address some of these complaints, namely those about the photo scanning part of Expanded Protections for Children. With this feature, Apple will begin scanning iCloud Photo libraries for known abusive and exploitative material. It will do this by matching photos against hashes of known child sexual abuse material, or CSAM.

Apple is implementing measures to reduce false positives, and a threshold number of matching images must be detected before Apple takes any action against a person, but the feature is still raising concerns. Those mostly relate to what could come next once these features are in place, how they could be turned against users, and how some governments might try to use the feature in less-than-pristine ways.

In the comments made to the publication, Apple says that when it comes to a global expansion, which the company is currently looking into, it will proceed on a country-by-country basis, with each decision following a legal review. Apple isn’t giving any specifics on what that review will look like, though.

Meanwhile, as for how particular regions across the globe might try to exploit these new features, MacRumors reports:

Apple also addressed the hypothetical possibility of a particular region in the world deciding to corrupt a safety organization, noting that the system’s first layer of protection is an undisclosed threshold before a user is flagged for having inappropriate imagery. Even if the threshold is exceeded, Apple said its manual review process would serve as an additional barrier and confirm the absence of known CSAM imagery. Apple said it would ultimately not report the flagged user to NCMEC or law enforcement agencies and that the system would still be working exactly as designed.

Apple reiterated that it intends to use this new system as a means of detecting CSAM, and nothing more. The company did admit that there is no “silver bullet answer” when it comes to the system potentially being abused, though. Even Apple, it seems, recognizes that there is some wiggle room, so the concern here is appropriate.

Still, the company is maintaining its commitment to user privacy and believes in this new feature. According to an internal memo recently distributed to employees, it plans to expand the feature further in the future.