Renowned Apple analyst Ming-Chi Kuo of KGI Securities predicted that iPhone 8 would supplant Touch ID with advanced sensors capable of recognizing one’s face with very high accuracy. JPMorgan analyst Rod Hall corroborated Kuo’s report, speculating that facial recognition would utilize a front-facing 3D sensor.

For many people, this has been one of the tougher iPhone 8 rumors to swallow. For starters, how would iPhone 8 authenticate the user in the middle of the night? Or in complete darkness? Touch ID lets you unlock your iPhone without looking, whereas facial recognition would require you to face the sensor, a major inconvenience.

A few reports have attempted to shed some light on the subject, explaining the basics of 3D motion and object scanning and how Apple might leverage 3D sensors in iPhone 8.

Stop thinking about facial recognition in terms of your iPhone’s front-facing camera.

That approach, employed by Android’s Trusted Face and Microsoft’s Windows Hello features, isn’t very reliable in low light and produces a higher error rate than you’d want.

Touch ID has a false-match rate of roughly 1 in 50,000. According to Hall, biometric facial scanning in iPhone 8 would be far more accurate than Touch ID and more secure for Apple Pay while working better in wet conditions.

Touch ID, as you know, isn’t very reliable with wet fingers.
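
To put that 1 in 50,000 figure in perspective, here is a back-of-the-envelope sketch in Python. It assumes every unlock attempt is an independent trial with the same false-match chance, which is a simplification. The function name and the attempt counts are purely illustrative and don't come from Apple or the analysts.

```python
def false_match_probability(per_attempt_rate: float, attempts: int) -> float:
    """Chance that at least one of `attempts` independent tries is wrongly accepted."""
    if not 0.0 <= per_attempt_rate <= 1.0:
        raise ValueError("per_attempt_rate must be between 0 and 1")
    if attempts < 0:
        raise ValueError("attempts must be non-negative")
    # P(at least one false match) = 1 - P(no false match in any attempt)
    return 1.0 - (1.0 - per_attempt_rate) ** attempts


# Rate quoted in the article for Touch ID.
TOUCH_ID_RATE = 1 / 50_000

# Illustrative attempt counts only (assumption, not a documented limit).
for tries in (1, 5, 100):
    chance = false_match_probability(TOUCH_ID_RATE, tries)
    print(f"{tries:>3} attempts: {chance:.6%} chance of a false match")
```

The takeaway is that the odds grow almost linearly with the number of tries while the per-attempt rate is this small, so a sensor with a lower per-attempt rate would shrink the whole curve proportionally.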

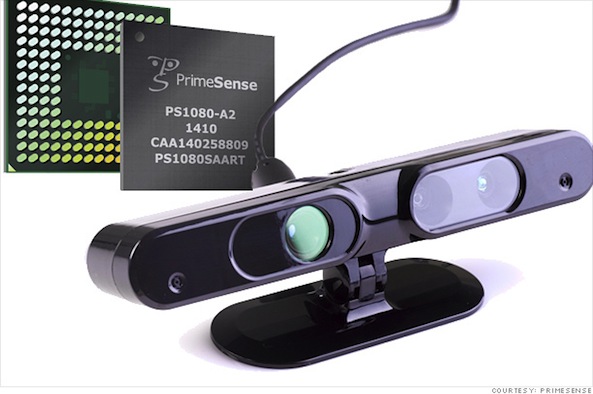

The analyst believes that iPhone 8 will feature 3D laser sensors allowing it to map its surroundings, create highly accurate 360-degree scans of real-world objects and, yes, scan the user’s face, all very accurately, even in low-light situations. Apple’s sensor is widely thought to be built on technology from PrimeSense, an Israeli startup Apple acquired in November 2013 for a reported $350 million.

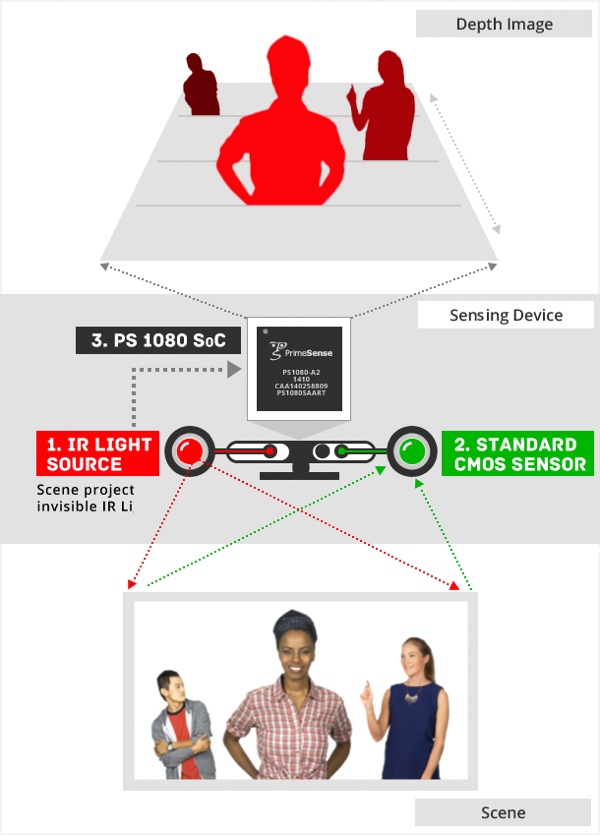

Here is PrimeSense technology, rated with sub-millimeter accuracy, in action.

PrimeSense employed approximately 150 employees in Tel Aviv and around the world.

The startup was heavily involved in the development of Microsoft’s Kinect sensor technology before the Apple deal, and its sensors were used in Google’s 3D-mapping smartphone project, dubbed Project Tango.

A 3D sensor requires a very low-power LED or laser diode to emit light, a light filter to reduce noise and a powerful image signal processor to process the data as quickly as possible.

In addition to biometric facial scanning, iPhone 8 (with the right software) could leverage PrimeSense technology for plenty of other uses, like interactive gaming, augmented reality, indoor mapping, retail, 3D scanning and printing, clothing sizing, home improvement measurements and so forth.

Juli Clover of MacRumors explained recently that PrimeSense uses a technique dubbed Light Coding for 3D depth sensing, which involves a near-IR light source that projects invisible light into a room or a scene. A separate image sensor then reads the IR light and captures it along with a series of synchronized images.

“The infrared patterns cast by the IR-light, which enable depth acquisition, are then deciphered by the company’s chips to create a virtual image of a scene or object,” she wrote.
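
How PrimeSense’s chips actually decipher the pattern isn’t public, so the following is only a sketch of the textbook triangulation idea behind projected-pattern depth sensing in general. It assumes a simple pinhole camera and a projector and sensor mounted side by side: the farther away a surface is, the less a projected dot appears shifted in the captured image. The function name and all the numbers are hypothetical and are not PrimeSense or Apple specifications.

```python
def depth_from_disparity(focal_length_px: float,
                         baseline_m: float,
                         disparity_px: float) -> float:
    """Estimate depth (meters) of a projected dot by triangulation.

    focal_length_px: sensor focal length expressed in pixels (assumed value)
    baseline_m:      distance between IR projector and IR sensor in meters
    disparity_px:    how far the dot is shifted in the image, in pixels
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive; zero means infinitely far")
    # Similar triangles: depth / baseline = focal_length / disparity
    return focal_length_px * baseline_m / disparity_px


# Hypothetical module: 580 px focal length, 7.5 cm projector-to-sensor baseline.
for shift in (58.0, 29.0, 14.5):
    depth = depth_from_disparity(580.0, 0.075, shift)
    print(f"dot shifted {shift:>5.1f} px -> about {depth:.2f} m away")
```

Note how halving the shift doubles the estimated distance, which is why this kind of sensor tends to be most precise at close range, such as a face held in front of a phone.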

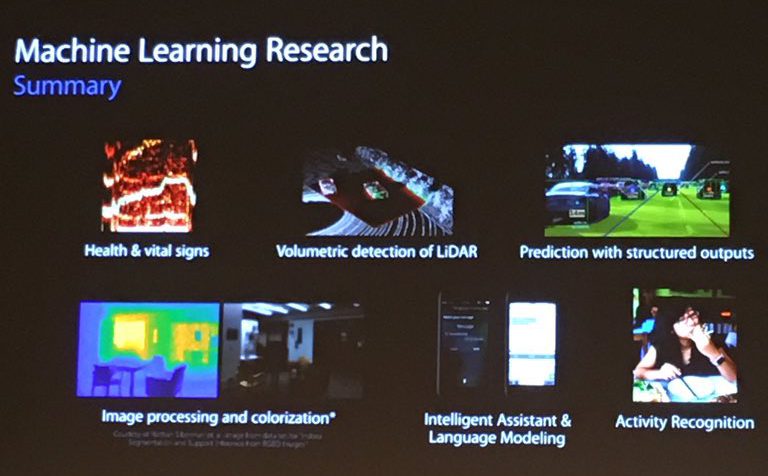

The PrimeSense-powered 3D laser scanning module that may make an appearance on iPhone 8 could be a miniaturized version of a LiDAR mapper or rangefinder, AppleInsider explains. For those wondering, LiDAR (or Light Detection and Ranging) is similar to radar but with lasers. The laser-based technology is being used in self-driving vehicles, for high-resolution mapping and more.

LiDAR is heavily used by the military in ordnance guidance suites, too.
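
The basic ranging idea behind a pulsed LiDAR rangefinder is simple enough to sketch in a few lines: fire a laser pulse, time how long the reflection takes to come back, and halve the round trip. This is the generic time-of-flight relation, not a description of whatever module Apple may ship, and the function name and timing values below are made up for illustration.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum


def distance_from_round_trip(round_trip_s: float) -> float:
    """Distance in meters to a target, given the pulse's round-trip time in seconds."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels to the target and back, so divide the path by two.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


# Hypothetical echo times, in nanoseconds.
for nanoseconds in (2.0, 10.0, 100.0):
    meters = distance_from_round_trip(nanoseconds * 1e-9)
    print(f"echo after {nanoseconds:>5.1f} ns -> target about {meters:.2f} m away")
```

At phone-to-face distances the round trip is only a few nanoseconds, which hints at why such a module needs very fast, specialized timing hardware.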

The integration of the laser and the sensor was highlighted in a December presentation by Apple’s machine learning head Russ Salakhutdinov, who covered Apple’s artificial intelligence research in “volumetric detection of LiDAR,” or measuring and identifying objects at range with lasers.

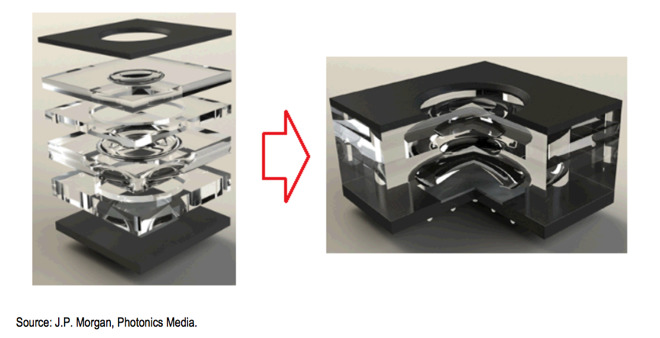

“Creating small, inexpensive, 3D scanning modules has interesting application far beyond smartphones,” wrote JPMorgan’s Hall. “Eventually we believe these sensors are likely to appear on auto-driving platforms as well as in many other use cases.”

Facial scanning would cost about $10 to $15 per iPhone 8 unit, he estimated.

iPhone 8’s 3D laser scanning module will likely rely on a wafer-level stacked optical column rather than a conventional barrel lens design.

Apple currently owns a few PrimeSense-related patents. As an example, a Kinect-like patent promises to bring dedicated motion-sensing hardware to Macs and Apple TVs. Another patent outlines using PrimeSense-like motion sensors to detect gestures that would offer limited access to select sets of iOS apps, straight from the user’s Lock screen.

Yet another patent awarded to Apple, titled “Virtual keyboard for a non-tactile three dimensional user interface,” relies on PrimeSense technology to let users type on a virtual 3D keyboard by simply moving their hands or fingers as the sensors would “see” the space between the user and the display.

As The Wall Street Journal explained back in November 2013, PrimeSense’s motion-sensing technology for gestural controls could even be used to improve Apple Maps. “Sooner rather than later, our phones will pull up scans of real spaces we want to visit or may be approaching,” said the report. “Those two-dimensional maps will seem very obsolete.”

In other words, Apple could theoretically utilize PrimeSense sensors in iPhone 8 to crowd-source three-dimensional representations of users’ environments. Before signing off, there’s also an Apple patent that outlines using depth-detection LiDAR sensors for 3D imaging and facial gesture recognition.

Summing up, PrimeSense technology was clearly made for the brains at Apple. The question is, will this stuff be consumer-ready in time for iPhone 8?

For what it’s worth, Apple’s Chief Financial Officer Luca Maestri said earlier this week that chips and sensors are among the most “strategic” and “important” Apple investments, plus a valuable part of its intellectual property.

What’s your take on PrimeSense, iPhone 8 and facial scanning replacing Touch ID?

Image: iDropNews