Respected journalist Steven Levy has scored another nice exclusive with a new write-up over at Backchannel, a Wired Media Group property, giving us a rare inside look at how artificial intelligence and machine learning work at Apple.

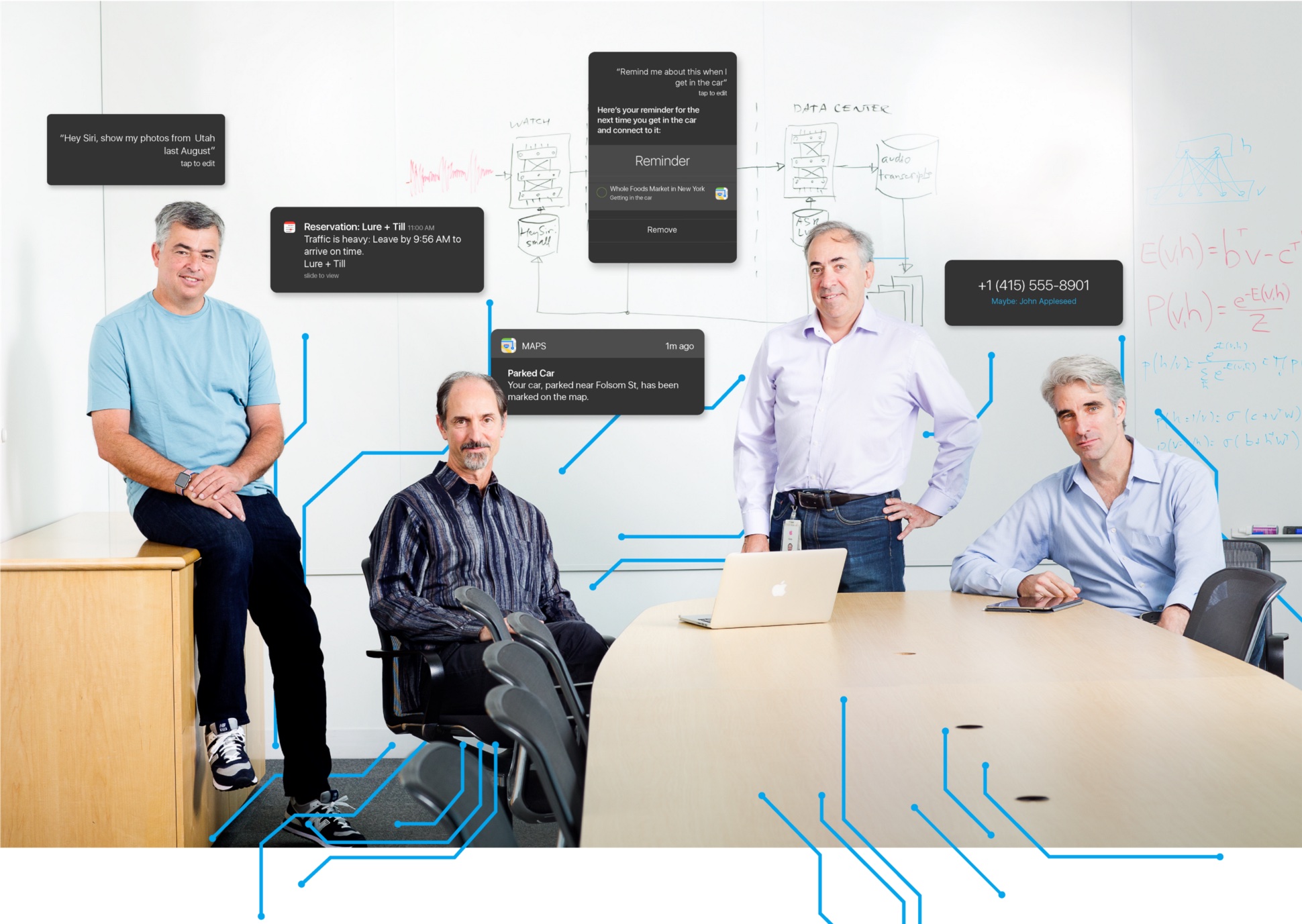

The article contains a lot of gems, with company executives Eddy Cue, Craig Federighi, Phil Schiller and Siri leads Tom Gruber and Alex Acero providing a bunch of previously unknown facts about Apple’s AI efforts, including this one: machine learning has enabled Apple to cut Siri’s error rate by a factor of two.

Welcome to The Temple of Machine Learning

Apple has “a lot” of engineers working on machine learning, some of whom weren’t necessarily trained in the field before they joined Apple, and the results of their work are shared with, and readily available to, other product teams within the company.

“We don’t have a single centralized organization that’s the Temple of Machine Learning in Apple,” says Apple’s head of Software Engineering, Craig Federighi. “We try to keep it close to teams that need to apply it to deliver the right user experience.”

Of course, some talent comes from acquisitions: Apple has recently been buying 20 to 30 small companies a year. “We’re looking at people who have that talent but are really focused on delivering great experiences,” said Federighi.

Making Siri sound more like an actual person

Before we get to that, it helps to know that Siri has four aspects:

- Speech recognition—turning speech to text

- Natural language understanding—understanding what you’re saying

- Execution—fulfilling a query or request

- Response—talking back to you

Although Apple still licenses much of Siri’s speech technology from a third party (likely Nuance), that’s “due for a change,” Levy writes, as Apple explores in-house speech capabilities for its digital personal assistant.

Deep learning is already helping Siri talk back to you more naturally, with machine learning techniques smoothing out recordings of individual words so that Siri’s responses sound more like an actual person than they did when it relied on Nuance alone.

All of this also helps bring Siri to your favorite apps without requiring you to use specific phrasing to access third-party skills on iOS 10 and macOS Sierra.

Siri’s brain transplant

On July 30, 2014, Siri had a brain transplant.

That’s when Apple began moving Siri’s voice recognition to a neural-net-based system, following early user complaints about misinterpreted commands. The company then trained Siri’s neural net on lots of data, using GPUs.

“We have the biggest and baddest GPU (graphics processing unit microprocessor) farm cranking all the time,” says Siri’s Alex Acero. “And we pump lots of data.” According to Acero, Siri began using machine learning to understand user intent in November 2014, and released a version with deeper learning a year later.

By applying machine learning techniques such as deep neural networks (DNNs), convolutional neural networks, long short-term memory units, gated recurrent units and n-grams, Apple was able to cut Siri’s error rate by a factor of two in all of its languages.

“That’s mostly due to deep learning and the way we have optimized it — not just the algorithm itself but in the context of the whole end-to-end product,” says Acero.

The iBrain is here, and with iOS 10 it’s already in your phone, thanks to machine learning, artificial intelligence, the power of Apple-designed silicon and Differential Privacy techniques.

The sheer size of Apple’s user base allowed the company to train Siri quickly.

Siri’s head of advanced development, Tom Gruber, reflects:

Steve Jobs said you’re going to go overnight from a pilot, an app, to a hundred million users without a beta program. All of a sudden you’re going to have users. They tell you how people say things that are relevant to your app. That was the first revolution. And then the neural networks came along.

But where else does machine learning help Apple?

Apple and machine learning

Levy learned about other ways machine learning helps advance Apple’s products and services. Here’s a food-for-thought excerpt:

Apple uses deep learning to detect fraud on the Apple store, to extend battery life between charges on all your devices, and to help it identify the most useful feedback from thousands of reports from its beta testers. Machine learning helps Apple choose news stories for you. It determines whether Apple Watch users are exercising or simply perambulating.

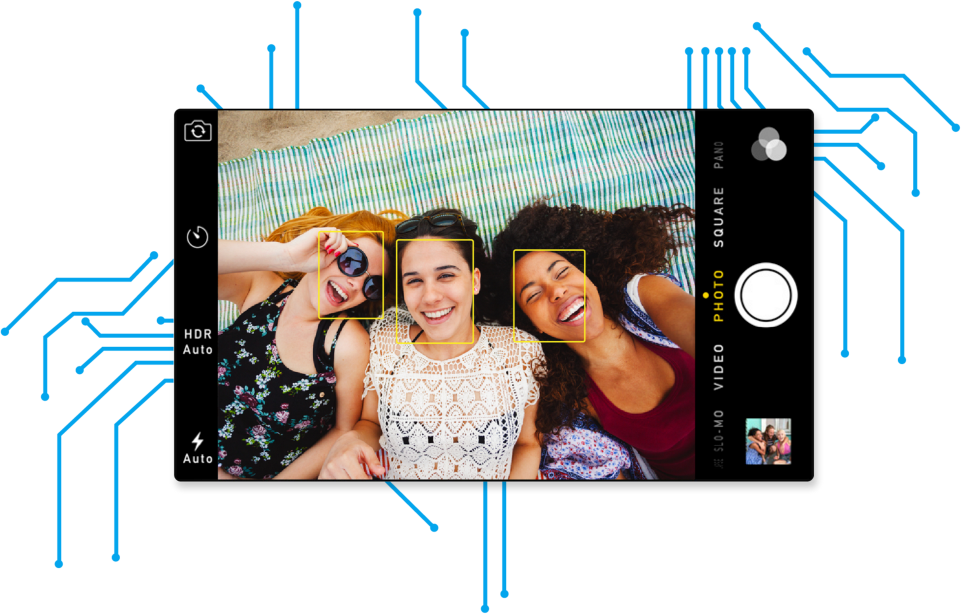

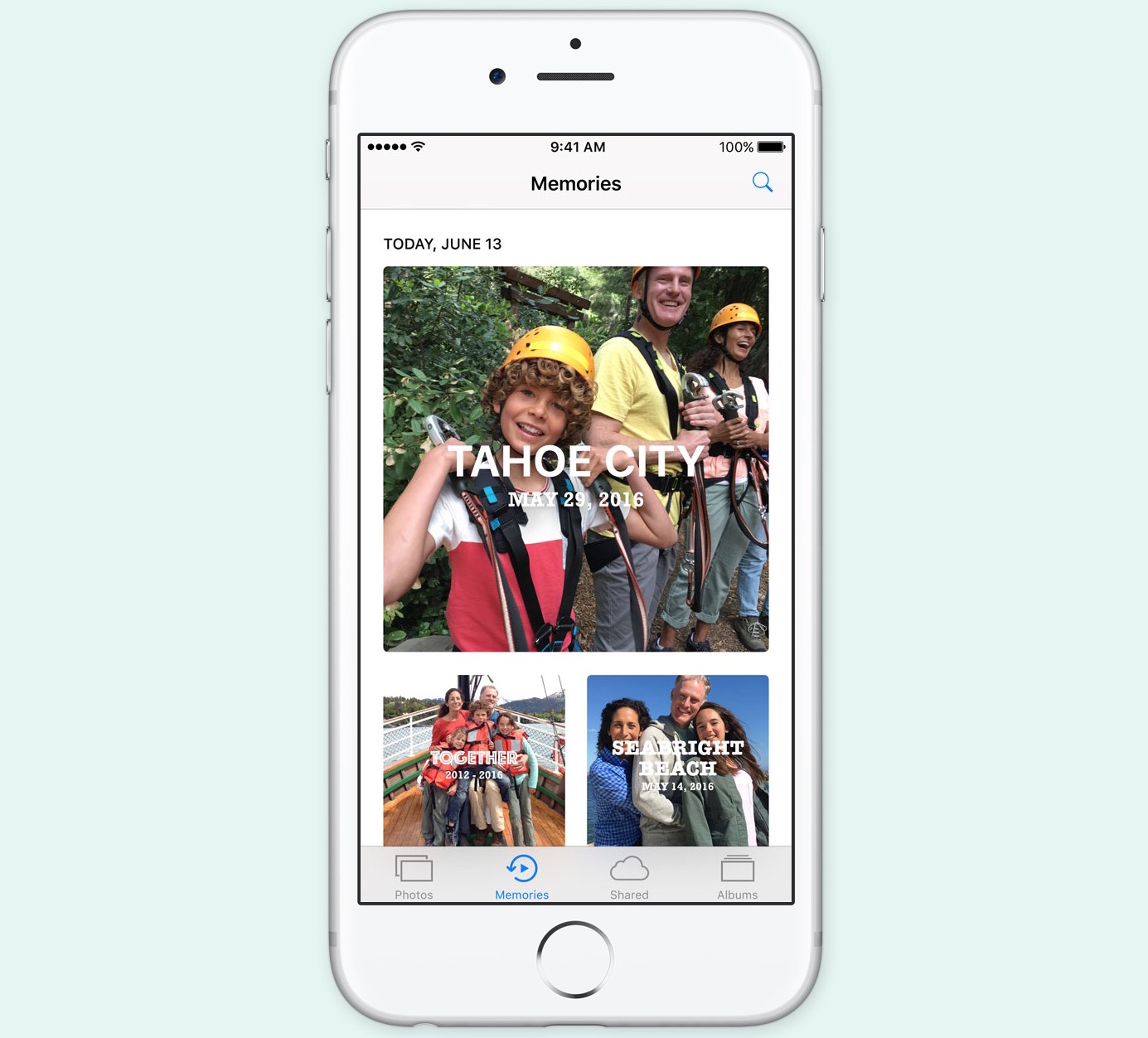

It recognizes faces and locations in your photos. It figures out whether you would be better off leaving a weak Wi-Fi signal and switching to the cell network. It even knows what good filmmaking is, enabling Apple to quickly compile your snapshots and videos into a mini-movie at a touch of a button.

If you were to argue that Apple’s competitors do many similar things, you’d be right.

Clockwise, from upper left: Tom Gruber, Siri’s Advanced Development Head; Eddy Cue, Apple’s SVP of Internet Software and Services; and Alex Acero, Siri’s Senior Director.

But keep in mind that Apple provides these features in a way that also protects your privacy. By using its in-house A-series chips and Differential Privacy techniques, Apple’s software can pull these things off directly on the device, without sending significant amounts of user data to its servers to let the cloud do the heavy lifting.
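Levy’s piece doesn’t spell out how Apple’s Differential Privacy works, so what follows is only a generic illustration, not Apple’s implementation. It uses randomized response, a textbook local differential privacy mechanism: each device adds noise to a yes/no answer before sending it, so no single report reveals much about its user, yet the overall rate across many users can still be estimated. The function names, the `p_truth` noise parameter and the simulated 30% rate are hypothetical choices made for this sketch.

```python
import math
import random


def randomized_response(true_bit: bool, p_truth: float, rng: random.Random) -> bool:
    """Device side: report the true bit with probability p_truth,
    otherwise report a fair coin flip unrelated to the true bit."""
    if rng.random() < p_truth:
        return true_bit
    return rng.random() < 0.5


def estimate_true_rate(reports: list[bool], p_truth: float) -> float:
    """Server side: since P(report = 1) = p * rate + (1 - p) / 2,
    invert that relation to estimate the population rate from noisy reports."""
    observed = sum(reports) / len(reports)
    return (observed - (1 - p_truth) / 2) / p_truth


def privacy_epsilon(p_truth: float) -> float:
    """Privacy loss of a single report: the log of the worst-case ratio
    P(report 1 | true 1) / P(report 1 | true 0) = (1 + p) / (1 - p)."""
    return math.log((1 + p_truth) / (1 - p_truth))


if __name__ == "__main__":
    rng = random.Random(42)
    p_truth = 0.5      # hypothetical noise level
    true_rate = 0.3    # hypothetical share of users with some attribute
    users = [rng.random() < true_rate for _ in range(100_000)]
    reports = [randomized_response(b, p_truth, rng) for b in users]
    print(f"estimated rate: {estimate_true_rate(reports, p_truth):.3f}")
    print(f"epsilon per report: {privacy_epsilon(p_truth):.3f}")
```

Lowering `p_truth` adds more noise and strengthens privacy (a smaller epsilon), at the cost of needing more reports to get an accurate aggregate estimate.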

In a rare revelation, Apple told the journalist that the dynamic cache that enables machine learning on the iPhone takes up about 200 megabytes of storage space.

The system deletes older data, and the actual size of the cache depends on how much personal data is used, such as information about app usage, interactions with other people, neural net processing, a speech modeler, natural language event modeling, and data for the neural nets that power object recognition, face recognition and scene classification in the Photos app.

Here’s Apple’s marketing boss Phil Schiller:

Our devices are getting so much smarter at a quicker rate, especially with our Apple-designed A-series chips. The back ends are getting so much smarter, faster, and everything we do finds some reason to be connected. This enables more and more machine learning techniques, because there is so much stuff to learn, and it’s available to [us].

These techniques are also used to power some “new things we haven’t been able to do,” he added.

But it’s not just the silicon, says Federighi:

It’s how many microphones we put on the device, where we place the microphones. How we tune the hardware and those mics and the software stack that does the audio processing. It’s all of those pieces in concert. It’s an incredible advantage versus those who have to build some software and then just see what happens.

Apple isn’t just dipping its toes in Differential Privacy; it’s fully embracing it.

“It’s a technique that will ultimately be a very Apple way of doing things as it evolves inside Apple and in the ways we make products,” said Schiller.

Less visible AI-driven features

Artificial intelligence is also used to improve other aspects of the iOS experience.

Here are some examples of AI-driven features:

- Identifying a caller who isn’t in your Contacts, based on your emails

- Populating the iOS app switcher with a shortlist of the apps you’re most likely to open next

- Getting reminders of appointments based on your emails

- Surfacing a Maps location for the hotel you’ve reserved before you type it in

- Remembering where you parked your car

- Palm rejection for Apple Pencil

- Recognizing the ‘Hey Siri’ hot word in a power-efficient way

- Improving QuickType keyboard suggestions

And, of course, lots more.

Software boss Craig Federighi listens to Siri’s speech guru Alex Acero discussing the voice recognition software at Apple headquarters. Oh, how I’d like to be a fly on that wall!

“Machine learning is enabling us to say yes to some things that in past years we would have said no to,” says marketing honcho Phil Schiller. “It’s becoming embedded in the process of deciding the products we’re going to do next,” he says, adding:

The typical customer is going to experience deep learning on a day-to-day level that [exemplifies] what you love about an Apple product. The most exciting [instances] are so subtle that you don’t even think about it until the third time you see it, and then you stop and say, “How is this happening?”

Thanks to the article, I also learned that some of the AI code that converts hand-scrawled Chinese characters into text on iOS and powers the new letter-by-letter Apple Watch input on watchOS 3 actually draws on Apple’s work on handwriting recognition for its failed Newton MessagePad in the early 1990s.

The piece offers more insight into how Apple’s approach to artificial intelligence and machine learning differs from that of its competitors. It’s a fascinating article, and I wholeheartedly recommend giving it a read.

Source: Backchannel