Apple has purchased Emotient, Inc., a privately held, San Diego-based artificial intelligence startup, according to Dow Jones. No price was given for the deal. On its website, Emotient bills itself as the leader in emotion detection and sentiment analysis.

The company’s products gauge emotional response by measuring facial expressions, effectively allowing them to read people’s emotions from live video.

An Apple spokeswoman confirmed the purchase to The Wall Street Journal with the company’s boilerplate statement: “we buy smaller technology companies from time to time, and generally do not discuss our purpose or plans.”

“Emotient’s flagship service, Emotient Analytics, analyzes videos of customers as they experience marketing, products and services,” according to the company’s LinkedIn profile.

“It provides complete and accurate facial expression detection and frame-by-frame measurement of nine key emotions, as well as attention, engagement and positive or negative consumer sentiment.”

The most obvious application of Emotient technology in iOS would be automatic sorting of images by emotion in the stock Photos app, as suggested by the technology showcase video embedded below.

https://vimeo.com/67741811

Other possible applications could include gauging customer response to products in Apple Stores, analyzing driver fatigue to prevent accidents (hello, iCar!), providing dynamic responses in games based on facial expressions and more.

“We brought students into the lab to drive in the driving simulator and were able to predict when people were within 60 seconds of a crash in the simulator,” said Dr. Marian Bartlett, one of Emotient’s founders who is also a research professor at UC San Diego.

https://vimeo.com/70210615

Emotient’s technology was sold primarily to advertisers to help them assess viewer reactions to their ads. It was also used to measure the expressions of the ten candidates on stage during the first Republican Presidential Primary Debate, aired on Fox News.

This was the first time emotion-reading technology had been used to analyze a Presidential Primary Debate. Here’s a video of Emotient’s analytics in action.

https://vimeo.com/122238992

“Wherever there are cameras there can be video analysis of expressions, and an opportunity to learn about the customer’s state of mind as they emotionally respond to marketing, product and service experiences,” according to Emotient’s website, which was recently revised to remove details about its products and services.

The company’s technology can also detect the emotions of hundreds of people at once in real-world conditions. Here’s an example of Emotient-powered crowd analytics at an NBA game.

https://vimeo.com/130470241

At a Golden State Warriors game, Emotient used an $800 1080p camera, recording from 300 feet across the stadium, to distinguish up to 100 faces. A 4K camera could have captured closer to 400, Emotient CEO Ken Denman told Re/code.
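Denman’s two figures line up with simple pixel arithmetic: a 4K UHD frame (3840×2160) holds four times as many pixels as a 1080p frame (1920×1080). The minimal sketch below assumes, as a simplification Emotient never stated, that the number of distinguishable faces scales linearly with pixel count; the function and dictionary names are hypothetical.

```python
# Back-of-the-envelope check of the 1080p vs. 4K face counts.
# ASSUMPTION (not from Emotient): distinguishable faces scale
# linearly with the number of pixels in the frame.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "4K UHD": (3840, 2160),
}


def pixel_count(name: str) -> int:
    """Return the total number of pixels for a named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def scaled_face_estimate(baseline_faces: int, baseline: str, target: str) -> float:
    """Scale a face count by the ratio of pixel counts between two resolutions."""
    ratio = pixel_count(target) / pixel_count(baseline)
    return baseline_faces * ratio


if __name__ == "__main__":
    estimate = scaled_face_estimate(100, "1080p", "4K UHD")
    print(f"{estimate:.0f} faces")  # prints "400 faces"
```

In practice, lens choice, lighting and distance also limit how many faces a system can resolve, so the linear scaling is only a rough estimate.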

And here’s Emotient AdPanel, a service the company developed to test how effective ads are.

https://vimeo.com/121210467

Among Emotient’s directors is Seth Neiman, an American tech entrepreneur, venture capitalist and professional racing driver.

Back in March 2014, the startup announced a $6 million Series B financing round. Intel Capital, Emotient’s first institutional investor and also a customer, participated in the round, which closed in December 2013.

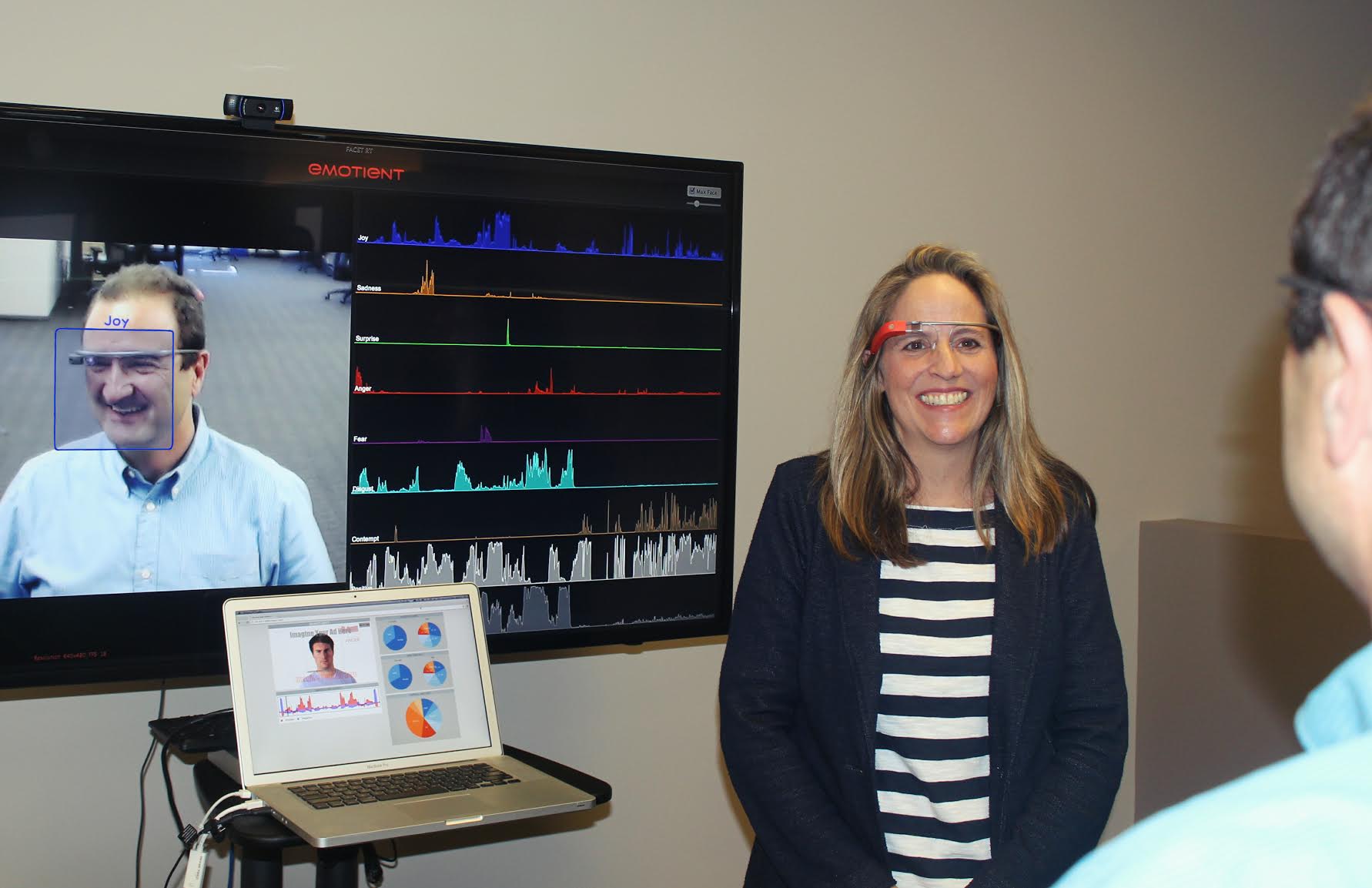

Emotient’s face-tracking technology was also used in a Google Glass app that demonstrated identifying the mood, emotions and sentiment of everyone around a Glass wearer and displaying them in the wearer’s line of sight.

It successfully measured overall sentiment ranging from positive to negative to neutral, primary emotions such as joy, surprise, sadness, fear, disgust, contempt and anger, and more advanced emotions like frustration and confusion.

According to The Next Web, which took the app for a spin, the technology doesn’t require any special hardware and instead works with an ordinary Logitech webcam, which makes it potentially suitable for the iPhone.

In September 2015, Emotient was granted one patent for anonymizing emotion analytics and another for automating facial expression analysis.

Source: CNBC