Apple has been researching how to bring light field panoramic imaging to the iPhone camera, a feature that would let you immerse yourself in captured scenes and explore them with parallax, clear object separation and other visual effects in virtual or augmented reality.

STORY HIGHLIGHTS:

- Apple has won a patent for light field imaging on iPhone and iPad

- The invention replicates functionality provided by plenoptic cameras

- Such images would come to life in virtual or augmented reality

Apple researching light field imaging on iPhone and iPad

Plenoptic cameras like the Lytro capture this kind of information by placing an array of micro-lenses in front of the image sensor to record the color, intensity and direction of incoming light. In smartphones, space is at a premium, so Apple came up with a solution that doesn’t require a lens array.

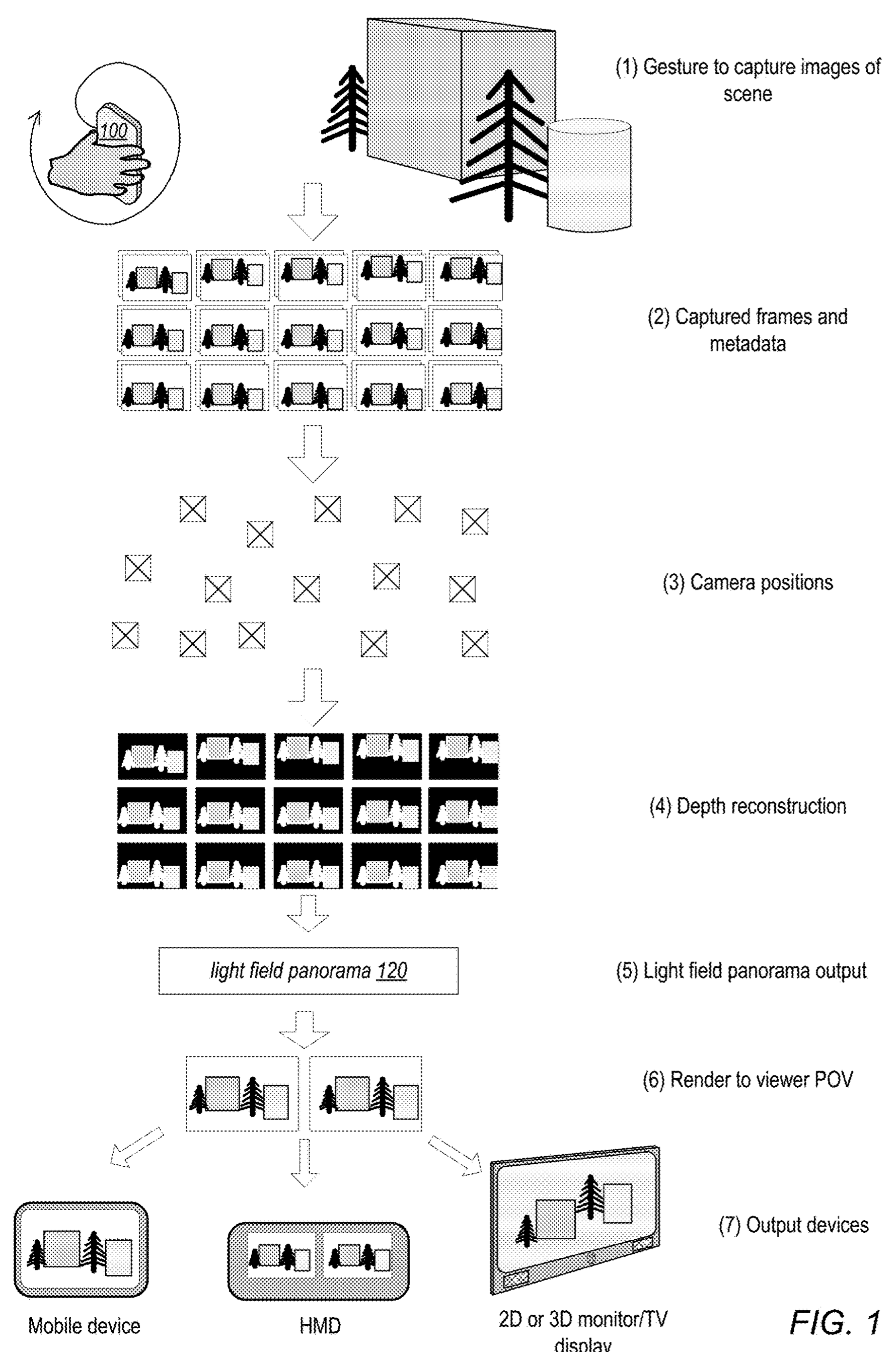

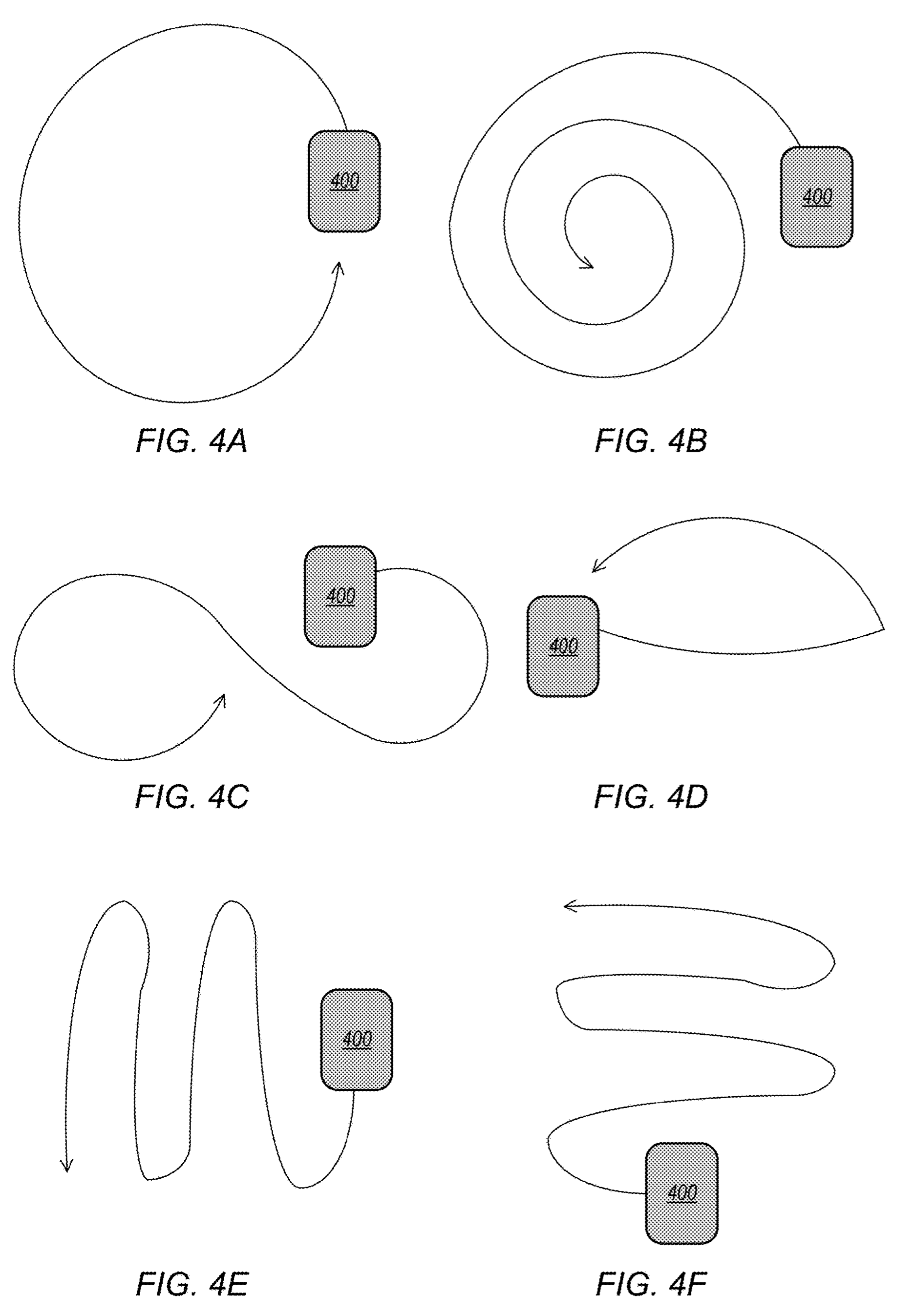

In a newly granted patent titled “Panoramic light field capture, processing and display,” the iPhone maker envisions moving the device in particular gestures so that its camera captures a scene from different positions. One of the illustrated gestures involves moving an iPhone or iPad in a swooping infinity-symbol pattern to capture a scene from multiple angles.

By contrast, the regular panoramas you can capture on your iPhone today involve panning the device from left to right or top to bottom to capture a sequence of images of a scene, which are later stitched together into a wide-angle photograph. In light field photography, a device captures both the color of light and the direction in which the light rays are traveling.

Data from onboard sensors, such as the camera’s position and the device’s orientation, is also captured. Apple’s algorithm would then store the individual images, their relative positions and depth information as a light field panorama that could later be interacted with in several ways.
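The patent doesn’t spell out a file format, but the idea of bundling frames with their relative positions and depth can be sketched in a few lines of Python. Everything below is hypothetical: `CapturedView`, `LightFieldPanorama` and `nearest_view` are illustrative names, not Apple APIs, and picking the closest captured frame is a deliberately crude stand-in for the view synthesis a real light field renderer would perform.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

# Hypothetical types for illustration only; not an Apple API or file format.
Vec3 = Tuple[float, float, float]


@dataclass
class CapturedView:
    """One frame recorded while the device sweeps through the scene."""
    image_id: str
    position: Vec3                # camera position relative to the first frame, in meters
    orientation: Vec3             # yaw, pitch, roll in degrees, as reported by motion sensors
    depth_map: List[List[float]]  # per-pixel depth estimates in meters (a tiny grid here)


@dataclass
class LightFieldPanorama:
    """Bundles the captured frames with their relative positions and depth."""
    views: List[CapturedView] = field(default_factory=list)

    def add_view(self, view: CapturedView) -> None:
        self.views.append(view)

    def nearest_view(self, viewer_pos: Vec3) -> CapturedView:
        """Return the frame captured closest to the viewer's current position.

        A crude stand-in for real view synthesis, which would blend
        several frames using their depth maps.
        """
        if not self.views:
            raise ValueError("panorama has no views")

        def squared_distance(view: CapturedView) -> float:
            return sum((a - b) ** 2 for a, b in zip(view.position, viewer_pos))

        return min(self.views, key=squared_distance)


# Three frames captured along a short sideways sweep.
pano = LightFieldPanorama()
pano.add_view(CapturedView("frame_0", (0.0, 0.0, 0.0), (0.0, 0.0, 0.0), [[2.0]]))
pano.add_view(CapturedView("frame_1", (0.1, 0.05, 0.0), (5.0, 0.0, 0.0), [[2.1]]))
pano.add_view(CapturedView("frame_2", (0.2, 0.0, 0.0), (10.0, 0.0, 0.0), [[2.2]]))

print(pano.nearest_view((0.18, 0.01, 0.0)).image_id)  # prints frame_2
```

Keeping each frame’s position alongside its depth map is what later lets a viewer “move” through the panorama rather than just spin in place.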

The perks of capturing light field images would go beyond selective depth of field (which the iPhone camera already offers) and knowing the direction of light rays.

Exploring light field images in AR and VR

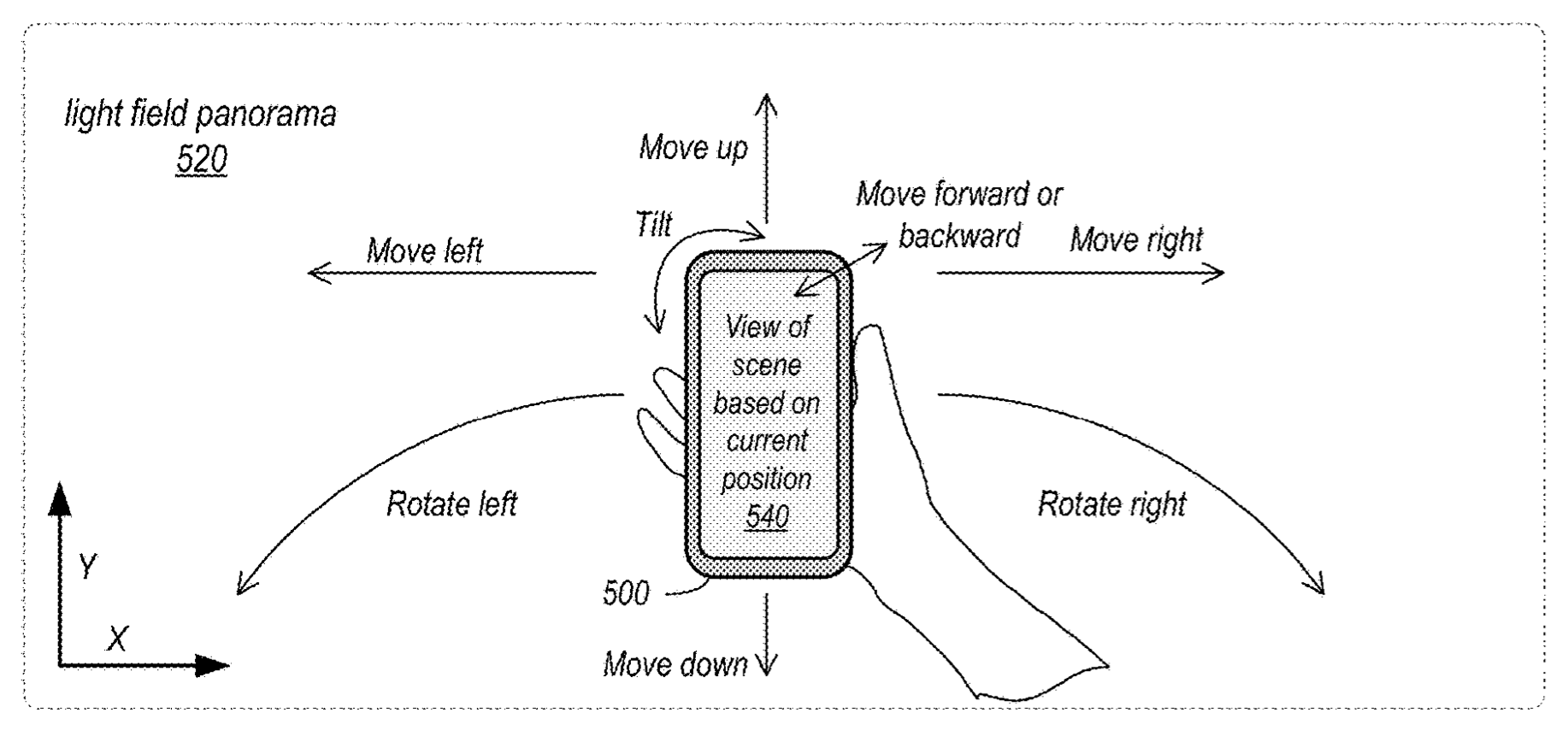

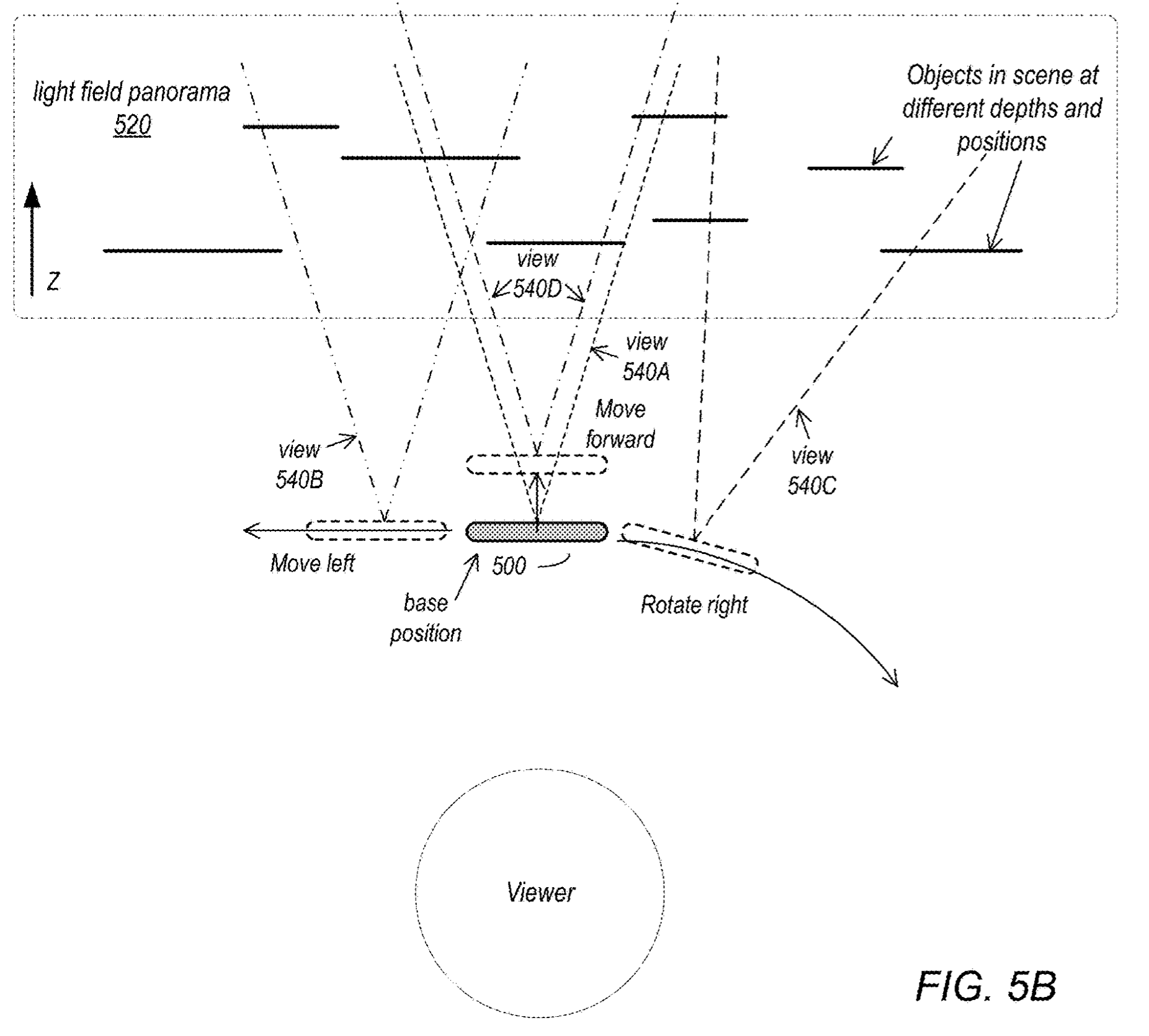

In one embodiment, a user can cycle through different 3D views of the scene. Because the system knows the relative positions of the individual images and objects, it could let a user explore the scene from different positions and angles with six degrees of freedom (moving along three axes and rotating around them).

You can see some of the more practical applications of emerging light field imaging technology in a video published with the SIGGRAPH 2020 technical papers.

When viewed on a head-mounted display (Apple is rumored to be working on one), the panorama would let a viewer see behind or over objects in the scene, zoom in or out, or view different parts of the scene, Apple explains. Throw a parallax effect into the mix and you get a highly immersive augmented reality experience based on your own light field panoramic images.

As Apple writes in the granted patent documents:

The image that is captured is parallax-aware in that when the image is rendered in virtual reality, objects in the scene will move properly according to their position in the world and the viewer’s relative position to them. In addition, the image content appears photographically realistic compared to renderings of computer generated content that are typically viewed in virtual reality systems.

A viewer would also be able to move in different directions and rotate within the content, another great feature when exploring such panoramas in virtual or augmented reality.

Apple says existing 360-degree imaging technologies such as photospheres allow only three degrees of freedom, which limits the experience to head rotation. Photospheres don’t let users move through the content the way they can when exploring a light field panorama.
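The difference between three and six degrees of freedom comes down to parallax, and a little geometry shows why. The Python sketch below is a simplified model, not Apple’s rendering method: it assumes objects sit at known 3D positions, treats a photosphere as a viewer whose position is pinned in place (rotation only), and measures how far each object appears to slide when the viewer steps 10 cm sideways. The function names are hypothetical.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]  # (x, y, z) in meters; z points straight ahead


def azimuth_deg(point: Vec3, viewer_pos: Vec3, dof: int) -> float:
    """Horizontal angle (degrees) at which the viewer sees `point`.

    dof=6: head position is tracked, so stepping sideways changes the view.
    dof=3: only head rotation is tracked (photosphere), so position is pinned.
    """
    if dof == 3:
        viewer_pos = (0.0, 0.0, 0.0)
    dx = point[0] - viewer_pos[0]
    dz = point[2] - viewer_pos[2]
    return math.degrees(math.atan2(dx, dz))


def shift_after_step(point: Vec3, step_x: float, dof: int) -> float:
    """Apparent angular shift of `point` after the viewer steps sideways by step_x meters."""
    before = azimuth_deg(point, (0.0, 0.0, 0.0), dof)
    after = azimuth_deg(point, (step_x, 0.0, 0.0), dof)
    return before - after


near = (0.0, 0.0, 1.0)   # object 1 m ahead
far = (0.0, 0.0, 10.0)   # object 10 m ahead
for dof in (3, 6):
    print(dof, round(shift_after_step(near, 0.1, dof), 2), round(shift_after_step(far, 0.1, dof), 2))
# prints:
# 3 0.0 0.0
# 6 5.71 0.57
```

With six degrees of freedom, the object 1 m away shifts about ten times as far as the one 10 m away, which is the depth cue a rotation-only photosphere can’t provide.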

To learn more about this patent, visit the USPTO website.