iOS 11 includes more natural and expressive male and female synthetic voices for Siri that use the latest advancements in machine learning and artificial intelligence to adjust intonation, pitch, emphasis and tempo while speaking.

Audio snippets at the bottom of a post on Apple’s Machine Learning blog highlight how Siri’s voice has changed with each major release across iOS 9, 10 and 11. Our own Andrew O’Hara has created a short video that lets you hear how Siri’s voice has evolved over the years.


Apple has definitely improved Siri’s voice over the past several years.

The new voice inflections in iOS 11 sound really nice, but there are instances when Siri emphasizes the wrong words in somewhat common phrases.

Here’s an excerpt from Apple’s machine learning blog:

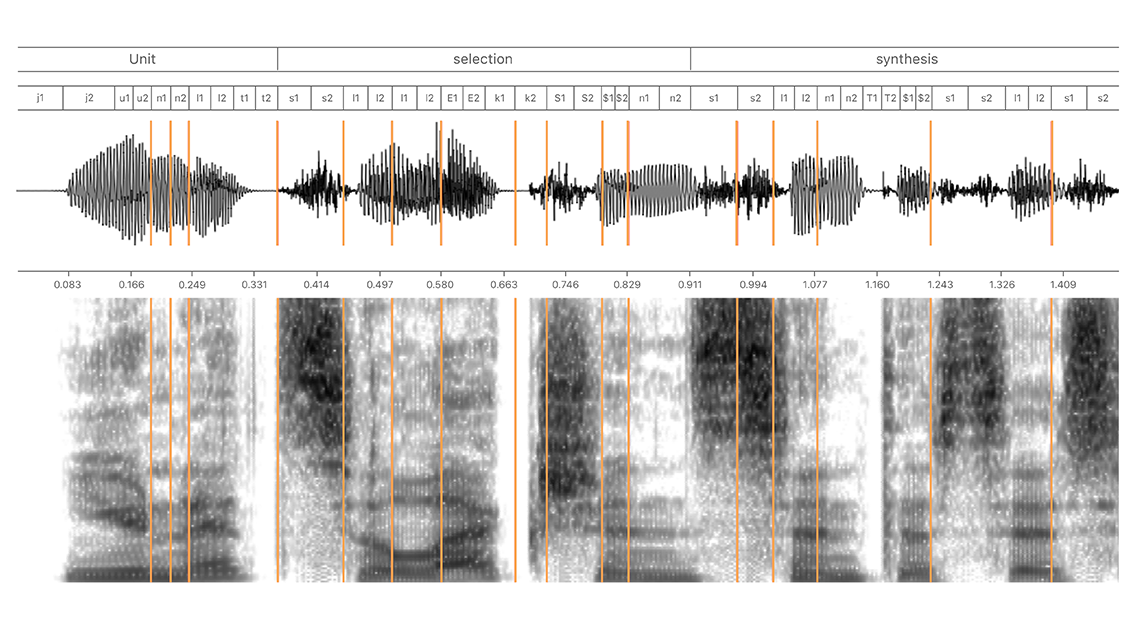

For iOS 11, we chose a new female voice talent with the goal of improving the naturalness, personality, and expressivity of Siri’s voice. We evaluated hundreds of candidates before choosing the best one. Then, we recorded over 20 hours of speech and built a new TTS voice using the new deep learning based TTS technology. As a result, the new US English Siri voice sounds better than ever.

iOS 9 and iOS 10 each brought a good improvement to Siri’s voice, but iOS 11 is a huge step forward, with sentences that flow together better and more natural inflections. Wouldn’t you agree?