How to insert emoji using Siri or Dictation on iPhone

Learn how to insert emojis into your text via voice using Dictation or Siri so you can be more expressive without having to switch to the emoji pane of the on-screen keyboard.

Check out the solutions to fix dictation issues on your iPhone, iPad, Apple Watch, and Mac.

Apple's just-refreshed MacBook Air, announced yesterday with the new Apple M1 chip, features an updated media key layout in the function row: new shortcuts for Dictation, Spotlight and Do Not Disturb replace the previous keys for adjusting brightness and invoking Launchpad. There's also now a dedicated Emoji key.

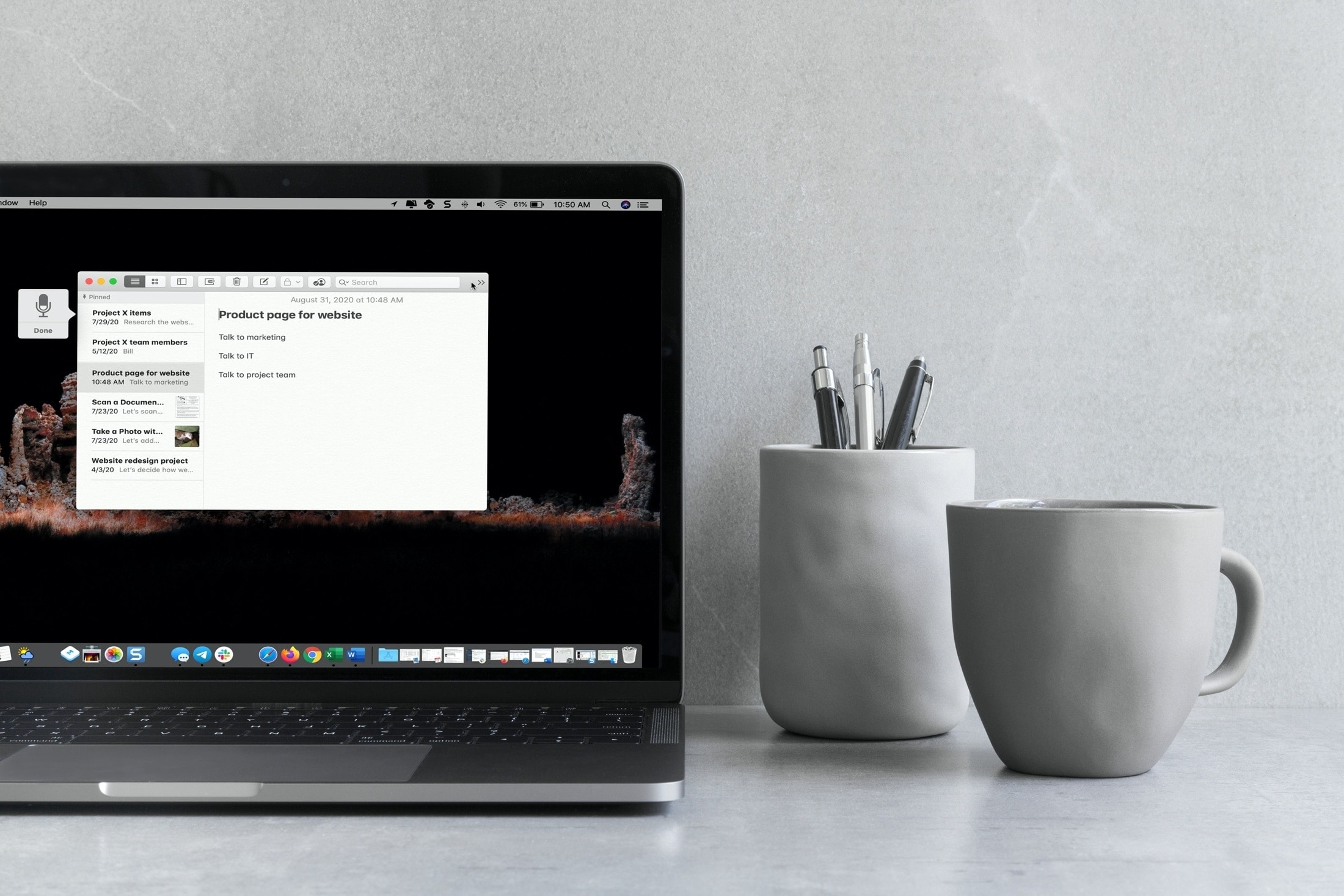

Learn how to use keyboard dictation on your Mac with our quick and easy guide, enhancing your productivity through hands-free typing.

Learn how to use Voice Control on your Mac to navigate and interact with your computer using just your voice. This is handy if you cannot use traditional input methods, or want an additional way to get things done.

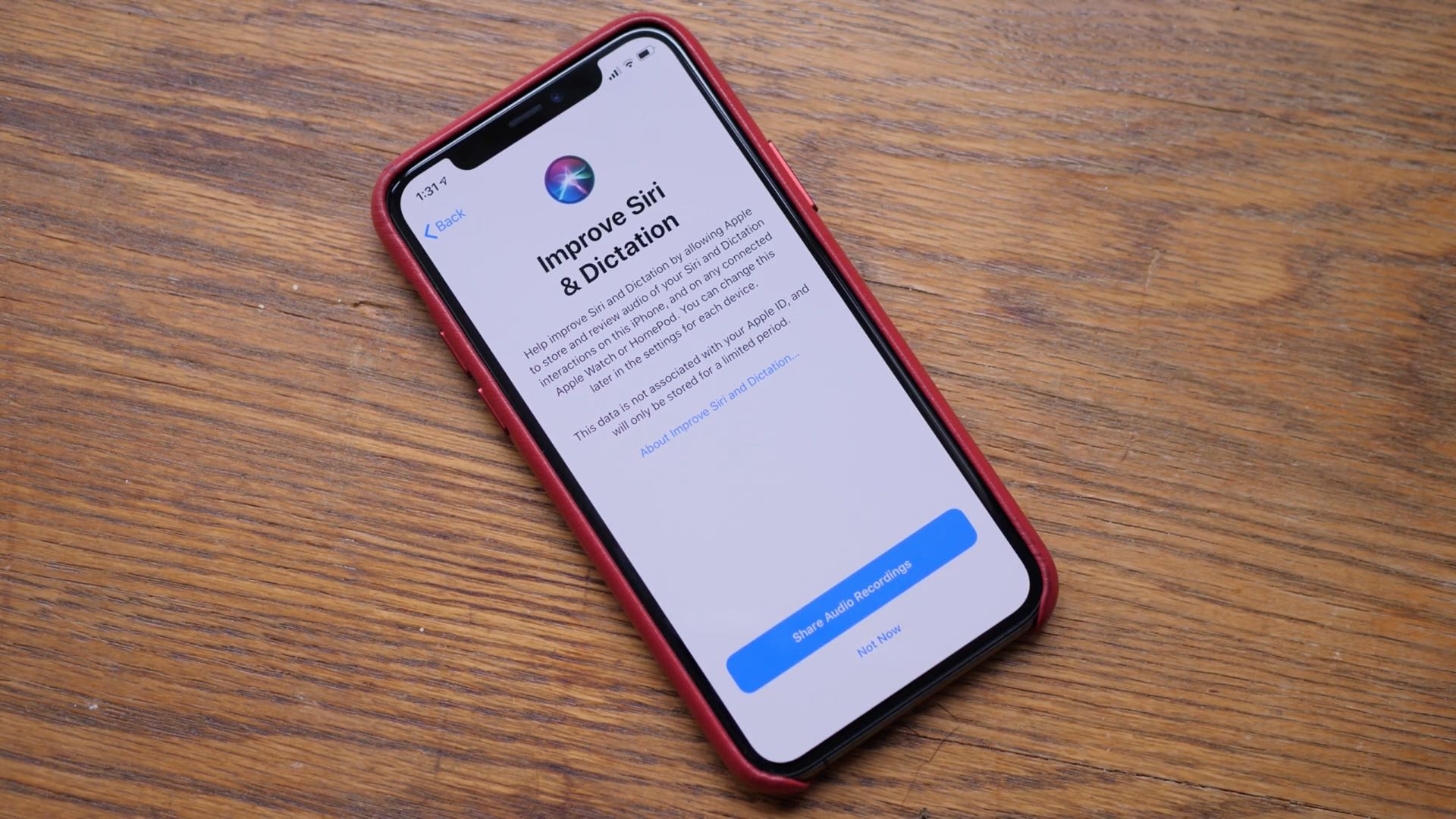

Learn how to opt out of Siri grading and delete your Siri history and data associated with your iPhone, iPad, Mac, Apple TV, Apple Watch, and HomePod from Apple’s servers.

Apple yesterday released the iOS 13.2 software update for iPhone, iPad and iPod touch. With it came Deep Fusion, a new computational photography feature for the latest iPhone 11 devices, along with Announce Messages With Siri, which was originally supposed to arrive as part of the initial iOS 13.0 update, plus a whole bunch of other important changes.

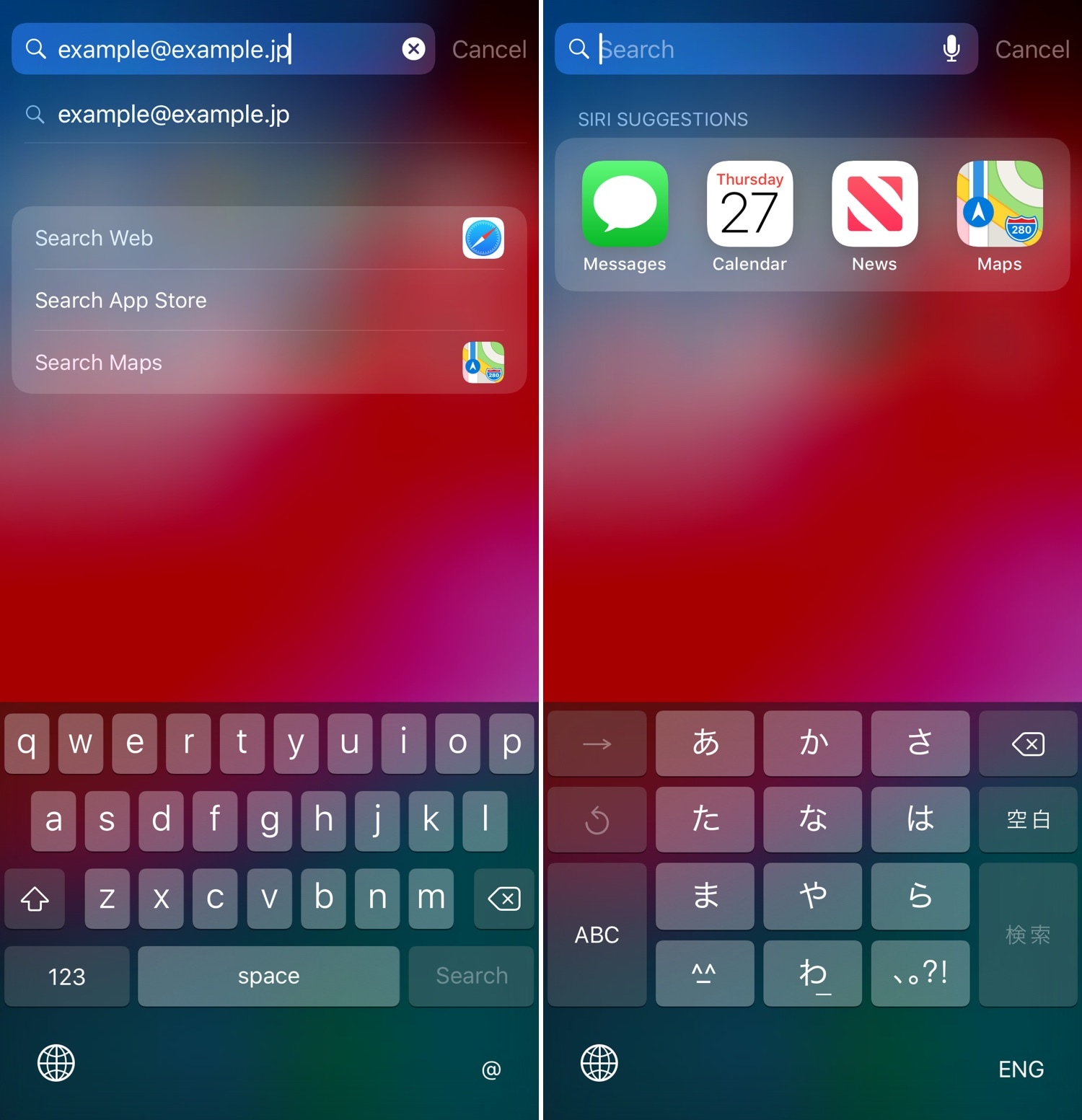

Some people use iOS’ Dictation feature more than others, but if you find yourself on the opposite side of the spectrum and not using it as much as you’d initially anticipated, then you just might benefit from a newly released jailbreak tweak called EasyKeyboardX by iOS developer Soh Satoh.

EasyKeyboardX essentially replaces the Dictation key on the iOS keyboard with a configurable shortcut key instead. With it, you can either use the button to switch between your enabled keyboards more quickly, or you can enter a specific text string that you’d like to be able to paste into any text field in a jiffy.

Just how exactly does Siri learn a new language? In today's interview with Reuters, Apple's speech team head Alex Acero offered a behind-the-scenes look at how Siri is being taught new languages, a process that involves script-writing, capturing voices in multiple accents and dialects and using machine learning and artificial intelligence to build and evolve new language models over time. The system requires a team of people tasked with reading passages of manually transcribed text.

Before actually updating Siri, Apple first rolls out Dictation support for a new language.

Siri currently speaks 21 languages in 36 countries. By comparison, Microsoft's Cortana supports eight languages tailored for thirteen countries, Google Assistant speaks four languages while Amazon's Alexa works only in English and German.

Call me crazy or call me what you will, but when I saw Android Wear 2.0 was bringing support for third-party keyboards, I immediately started imagining how useful that would be on my Apple Watch.

Of course the screen is too small to accommodate a keyboard. Heck, it’s already too small to punch in your passcode without missing a tap target. Still, not only do I think there may be a need for it, but I also believe the technology to make this right is now available.

tvOS 9.2, a new update for the operating system which powers the fourth-generation Apple TV, is now available for public consumption. The new firmware, released alongside iOS 9.3, OS X El Capitan 10.11.4 and watchOS 2.2, is a very interesting update for the cool new features it brings to the table.

tvOS 9.2 enables several features missing from the initial tvOS release, including long-awaited support for wireless keyboards, dictation, Siri support for App Store searches, app folders on the Home screen, a revamped app switcher, Siri Remote improvements, support for Live Photos and iCloud Photo Library and more.

Get your Mac to speak selected text back to you, turning your computer into a personal reader that can help you absorb information more effectively, and even assist if you have visual impairments.