Apple’s engineers explain in a new interview how they used the power of the latest Apple chips to build iPhone 13’s new Cinematic Mode without sacrificing live preview, all with frame-by-frame Dolby Vision HDR color grading.

STORY HIGHLIGHTS:

- Cinematic Mode wouldn’t be possible without the new A15 chip

- It uses the Neural Engine to identify people and perform other AI tricks

- Plus, each frame undergoes Dolby Vision color grading

- All that runs in real time, with live preview in the Camera app

Apple shares what it took to create iPhone 13’s Cinematic Mode

Kaiann Drance, Apple’s Vice President of Worldwide iPhone Product Marketing, along with Johnnie Manzari, a designer on the company’s Human Interface Team, sat down with TechCrunch’s Matthew Panzarino to discuss the technology behind Cinematic Mode, the headline feature available across all the new iPhone 13 models.

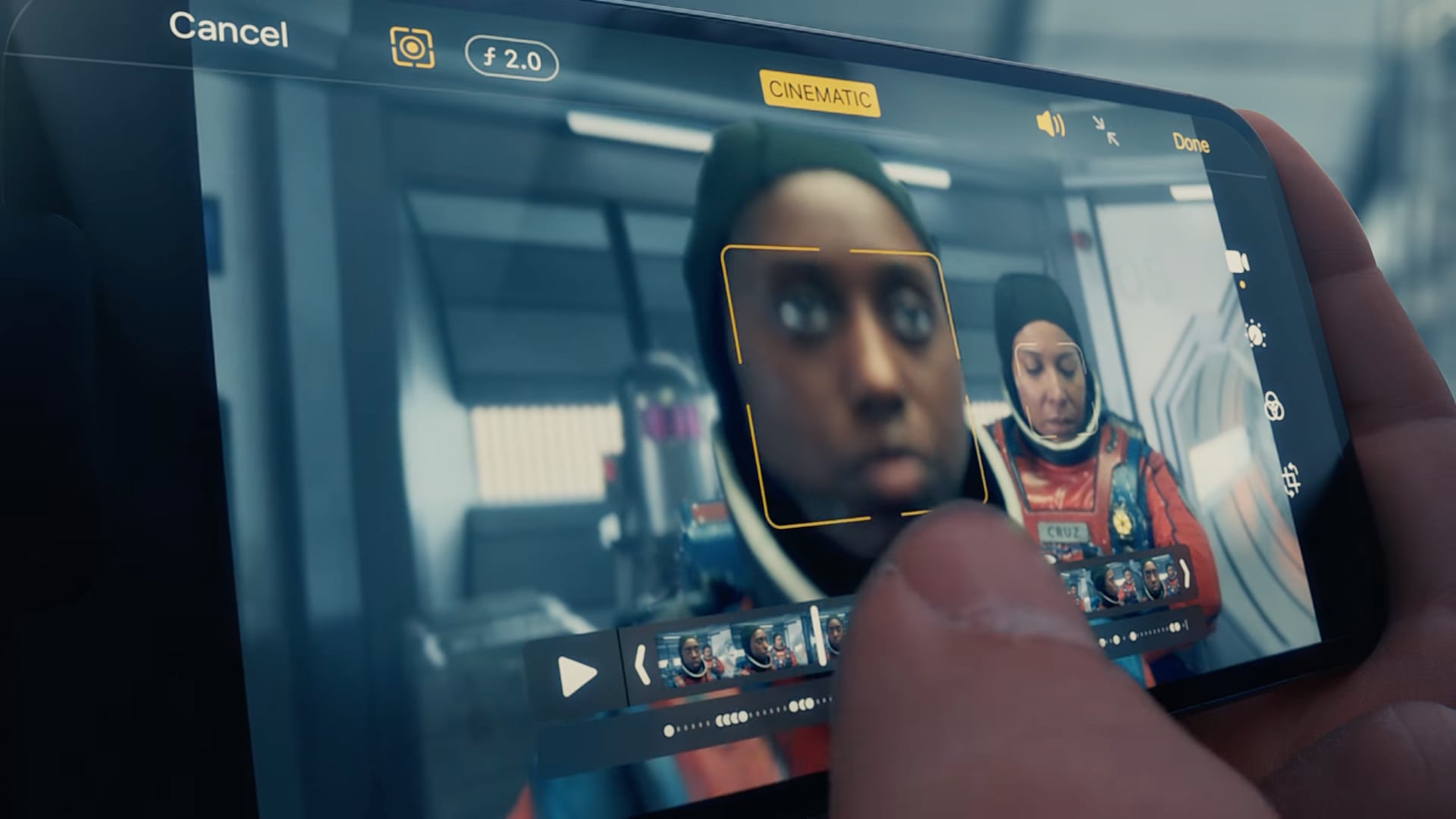

Cinematic Mode has been described as Portrait mode for video. With gaze detection, Cinematic Mode automatically focuses on subjects as they interact with the camera during the shoot. You can also take manual control of the depth-of-field effect while you shoot, and even change the depth-of-field effect afterward in the Photos app.

To retain the ability to adjust the bokeh in post-production, every frame of the video shot with Cinematic Mode comes with its own depth map.
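To illustrate why a stored depth map lets you change the blur after the fact, here is a minimal Python sketch. It is not Apple's pipeline: the function names, the `strength` parameter and the sample depths are all made up for illustration. The only point is that blur can be recomputed from the same depth data whenever the focus distance changes.

```python
# Illustrative sketch only, not Apple's algorithm.
# All names and numbers below are hypothetical.

def blur_radius(depth_m, focus_m, strength=4.0, max_radius=12.0):
    """Return a blur radius in pixels for one pixel of a depth map.

    A pixel at the focus distance gets radius 0. The radius grows with the
    difference in inverse depth (a simple thin-lens style approximation)
    and is capped at max_radius.
    """
    radius = strength * abs(1.0 / depth_m - 1.0 / focus_m)
    return min(radius, max_radius)


def blur_map(depth_map, focus_m, strength=4.0):
    """Compute a per-pixel blur radius map from a per-pixel depth map."""
    return [[blur_radius(d, focus_m, strength) for d in row] for row in depth_map]


# A tiny 2x3 "depth map" for a single frame, in meters (made-up values).
frame_depth = [
    [1.0, 1.0, 4.0],
    [1.0, 2.0, 4.0],
]

# Focus on the near subject at 1 m while shooting...
focus_near = blur_map(frame_depth, focus_m=1.0)
# ...then refocus on the background at 4 m later, from the same depth map.
focus_far = blur_map(frame_depth, focus_m=4.0)

print(focus_near)  # [[0.0, 0.0, 3.0], [0.0, 2.0, 3.0]]
print(focus_far)   # [[3.0, 3.0, 0.0], [3.0, 1.0, 0.0]]
```

Because the depth values travel with each frame, switching focus is just a matter of calling `blur_map` again with a different `focus_m`, with no need to reshoot.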

“We knew that bringing a high-quality depth of field to video would be magnitudes more challenging [than Portrait Mode]. Unlike photos, video is designed to move as the person filming, including hand shake. And that meant we would need even higher-quality depth data so Cinematic Mode could work across subjects, people, pets and objects, and we needed that depth data continuously to keep up with every frame. Rendering these autofocus changes in real time is a heavy computational workload.”

The team took advantage of all the power of Apple’s new A15 Bionic chip, along with the company’s machine learning accelerator, the Neural Engine, to encode Cinematic Mode video in Dolby Vision HDR. Live preview of Cinematic Mode in the viewfinder was another priority for the team.

Apple’s teams spoke with some of the best cinematographers and camera operators in the world. They also went to the movies to study examples of films through time. It became apparent to them that focus and focus changes “were fundamental storytelling tools, and that we as a cross-functional team needed to understand precisely how and when they were used.”

“It was also just really inspiring to be able to talk to cinematographers about why they use shallow depth of field. And what purpose it serves in the storytelling. And the thing that we walked away with is, and this is actually a quite timeless insight: You need to guide the viewer’s attention.”

In explaining why precision is key, Manzari noted that Apple learned during the development of Portrait Mode that simulating bokeh is really hard. “A single mistake, being off by a few inches…this was something we learned from Portrait Mode,” he said. “If you’re on the ear and you’re not on their eyes. It’s throwaway.”

Cinematic Mode uses data from sensors like the accelerometer, too:

Even while you’re shooting, the power is evident as the live preview gives you a pretty damn accurate view of what you’re going to see. And while you shoot, the iPhone is using signals from your accelerometer to predict whether you’re moving toward or away from the subject that it has locked onto so that it can quickly adjust focus for you.

And here is how gaze detection comes into play:

At the same time it is using the power of “gaze.” This gaze detection can predict which subject you might want to move to next and if one person in your scene looks at another or at an object in the field, the system can automatically rack focus to that subject.

And because the camera overscans the scene, it can do subject prediction, too:

A focus puller doesn’t wait for the subject to be fully framed before doing the rack, they’re anticipating and they’ve started the rack, before the person’s even there. And we realize that by running the full sensor we can anticipate that motion. And, by the time the person has shown up, it’s already focused on them.

We’ll report more about Cinematic Mode when we have a chance to play with it ourselves.

The problem(s) with Cinematic Mode

As a resource-intensive feature, Cinematic Mode isn’t without its share of teething issues.

Just as the early days of Portrait mode brought us blurred hair and halos around subjects, we’re seeing similar problems with Cinematic Mode. And even with all the power of Apple silicon at its disposal, Cinematic Mode is limited to shooting in 1080p resolution at 30fps.

Joanna Stern of The Wall Street Journal is the only major technology reviewer who has almost exclusively focused (pun intended) on Cinematic Mode in her iPhone 13 Pro review. All in all, she doesn’t think Cinematic Mode is ready for prime time yet:

With videos, gosh, I was really excited about the new Cinematic mode. Aaaand gosh, was it a let down. The feature — which you could call “Portrait mode for video” — adds artistic blur around the object in focus. The coolest thing is that you can tap to refocus while you shoot (and even do it afterward in the Photos app).

Except, as you can see in my video, the software struggles to know where objects begin and end. It’s a lot like the early days of Portrait Mode, but it’s worse because now the blur moves and warps. I shot footage where the software lost parts of noses and fingers, and struggled with items such as a phone or camera.

To see the true potential of this feature, be sure to watch two music videos that videographer Jonathan Morrison shot in Cinematic Mode with his iPhone 13 Pro camera, without using additional equipment such as gimbals. Give it a few years, and we’ll be shooting depth-of-field videos with our iPhones in 4K Dolby Vision HDR at sixty frames per second.
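For a rough sense of the gap between today's 1080p at 30fps limit and 4K at 60fps, here is some back-of-the-envelope Python. It assumes the standard frame sizes of 1920×1080 for 1080p and 3840×2160 for 4K, and it only counts pixels per second. It says nothing about how much work Cinematic Mode actually does per pixel, which Apple has not detailed.

```python
# Back-of-the-envelope arithmetic only. Frame sizes are the standard
# 1080p and 4K UHD dimensions, assumed here for illustration.

def pixels_per_second(width, height, fps):
    """Raw number of pixels that must be processed each second."""
    return width * height * fps


current = pixels_per_second(1920, 1080, 30)  # Cinematic Mode today
target = pixels_per_second(3840, 2160, 60)   # 4K at 60fps

print(current)           # 62208000
print(target)            # 497664000
print(target / current)  # 8.0
```

In other words, 4K at 60fps means pushing eight times as many pixels through the depth and blur pipeline every second, which helps explain why it may take a few chip generations to get there.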