A few days ago, Apple supplier Qualcomm announced a second-generation Spectra image signal processor and a brand-new line of high-resolution 3D depth-sensing camera modules that it said were specifically designed for the Android ecosystem.

The technology will be embedded in upcoming Snapdragon chips. According to a DigiTimes report this morning, Qualcomm’s 3D depth-sensing technology will be used mainly for facial recognition.

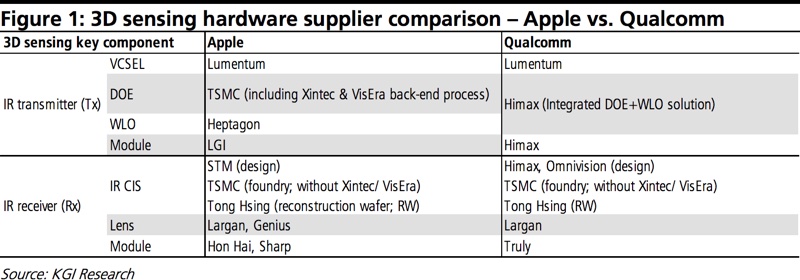

The company is working closely with Apple suppliers TSMC and Himax Technologies to kick off volume production of the new 3D depth-sensing modules as early as the end of 2017, meaning the first Android devices with these features could appear in 2018.

Qualcomm’s solution uses a 2-in-1 diffractive optical element and wafer-level optical system from Himax, which is also among the component suppliers for Apple’s 3D-sensing technology.


In addition, Qualcomm’s ultrasonic fingerprint scanner technology for in-screen fingerprint readers will appear in major smartphones from the likes of Huawei, Oppo and Vivo, slated for launch at the end of 2017 or early 2018.

KGI Securities analyst Ming-Chi Kuo said yesterday he believed that iPhone 8’s 3D-sensing technology would be ahead of Qualcomm’s by about two years.

He predicted that significant shipments of Qualcomm-built 3D-sensing modules for Android phones won’t occur until at least fiscal year 2019 because of immature algorithms and “design and thermal issues” associated with a variety of hardware reference designs.

Here’s an excerpt from Kuo’s note obtained by MacRumors:

> While Qualcomm has excelled in designing advanced application processors and baseband solutions, it lags behind in other crucial aspects of smartphone applications like dual cameras (many Android phones have instead adopted solutions used to simulate optical zoom from third-parties like Arcsoft) and ultrasonic fingerprint scanners (while a reference design’s been released, there’s no visibility on mass production).
>
> So, even though Qualcomm is the most engaged company in the research and development of 3D-sensing for the Android camp, we are conservative as regards progress toward significant shipments and don’t see it happening until fiscal year 2019.

DigiTimes’ supply chain report, which points to Android devices with Qualcomm’s 3D sensing as early as 2018, directly contradicts Kuo’s forecast.

iPhone 8’s 3D camera is expected to gather depth information by spraying a point cloud of infrared dots (invisible to your eye) onto an object or face and reading the distortions in this field of dots.

This dot-pattern technique is known as structured light: the projected pattern shifts depending on how far away each surface is, so depth can be triangulated from those shifts. It differs from time-of-flight, which resolves distance from the known speed of light by timing a light signal’s round trip between the camera and the subject for each point of the image. Qualcomm’s solution is also based on structured light, which enables real-time dense depth map generation and segmentation.
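To make the difference between the two depth-sensing techniques concrete, here is a minimal Python sketch of the textbook distance formulas. Time-of-flight halves the round-trip path of light (d = c × t / 2), while structured light triangulates depth from how far a projected dot appears shifted (Z = f × b / disparity). It assumes an idealized pinhole camera; the function names are hypothetical, and the focal length, projector-to-camera baseline and disparity values are made up for illustration, not Apple’s or Qualcomm’s actual parameters.

```python
# Speed of light in a vacuum, in metres per second.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_s: float) -> float:
    """Time-of-flight: light travels to the subject and back, so halve the path."""
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def structured_light_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Structured light: depth by triangulation, Z = f * b / disparity.

    focal_px: camera focal length in pixels (hypothetical calibration value)
    baseline_m: distance between dot projector and camera in metres (hypothetical)
    disparity_px: how far a projected dot appears shifted from its reference position
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


if __name__ == "__main__":
    # A face roughly 30 cm away returns light after about 2 nanoseconds.
    print(f"{tof_distance_m(2e-9):.3f} m")
    # Illustrative calibration: 600 px focal length, 2 cm baseline, 40 px dot shift.
    print(f"{structured_light_depth_m(600.0, 0.02, 40.0):.3f} m")
```

Both calls print `0.300 m`. The numbers also hint at why the approaches differ in practice: with time-of-flight, a 1 mm change in distance shifts the round trip by only about 6.7 picoseconds, whereas structured light only needs to measure where each dot lands in the image.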

Apple’s own 3D sensor is almost certainly based on specialized hardware and know-how the Cupertino giant obtained by acquiring PrimeSense, the company behind the 3D-sensing technology in Microsoft’s original Kinect motion sensor.

The sensor is expected to completely replace Touch ID and is reportedly secure enough that Apple plans to use it to authorize Apple Pay transactions on iPhone 8. Furthermore, the sensor could let users unlock their iPhone 8 in a fraction of a second just by glancing at it, as the facial recognition feature is said to work from oblique angles and even in complete darkness.