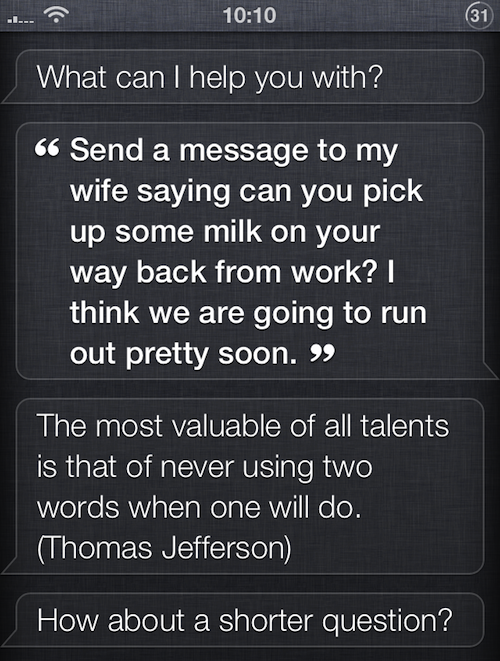

This is kind of interesting. A thread has popped up in the Reddit forums this week regarding some recent changes to Siri. It appears that Apple has quietly updated the digital assistant with a new, quirky attitude toward lengthy queries.

Now, when Siri is asked a question (or given a command) that is excessively long, she will quote famous passages from notable historical figures like William Strunk and Thomas Jefferson on the subject of—you guessed it—brevity…

Go ahead, give it a try. Ask Siri a question, or give her a command, that runs longer than two sentences. She should respond with a snarky quote, similar to the one above from Thomas Jefferson. Here’s another new automated response:

“A sentence should contain no unnecessary words, a paragraph no unnecessary sentences, for the same reason that a drawing should have no unnecessary lines and a machine no unnecessary parts. (William Strunk, Jr.)”

There’s no word on when exactly the update occurred, but we found a discussion in Apple’s support forums that started back on May 4th. Users took to the forums to complain that the issue was preventing them from dictating lengthy messages.

So why did Apple decide to start truncating Siri queries? No one really knows. But the digital assistant has had on-and-off troubles since it first launched in the fall of 2011. Notably, it’s still marked as a beta product on Apple’s website.

Thanks, Matt!