Learn how to use Google Lens, Microsoft Lens, and other OCR apps on Android to recognize and copy text from photos, just as you can on iPhone.

How to copy text from an image on an Android phone, like you can on iPhone

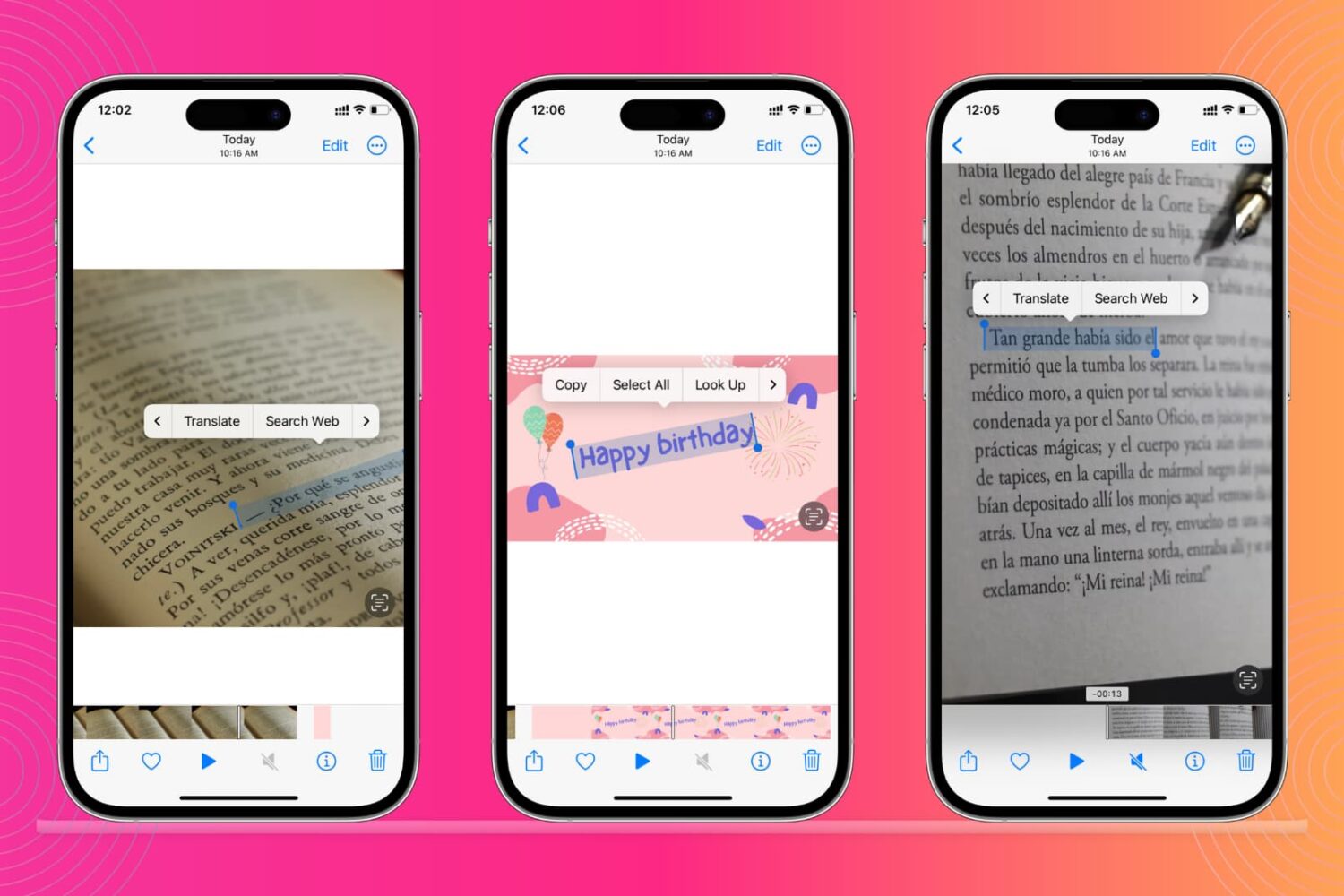

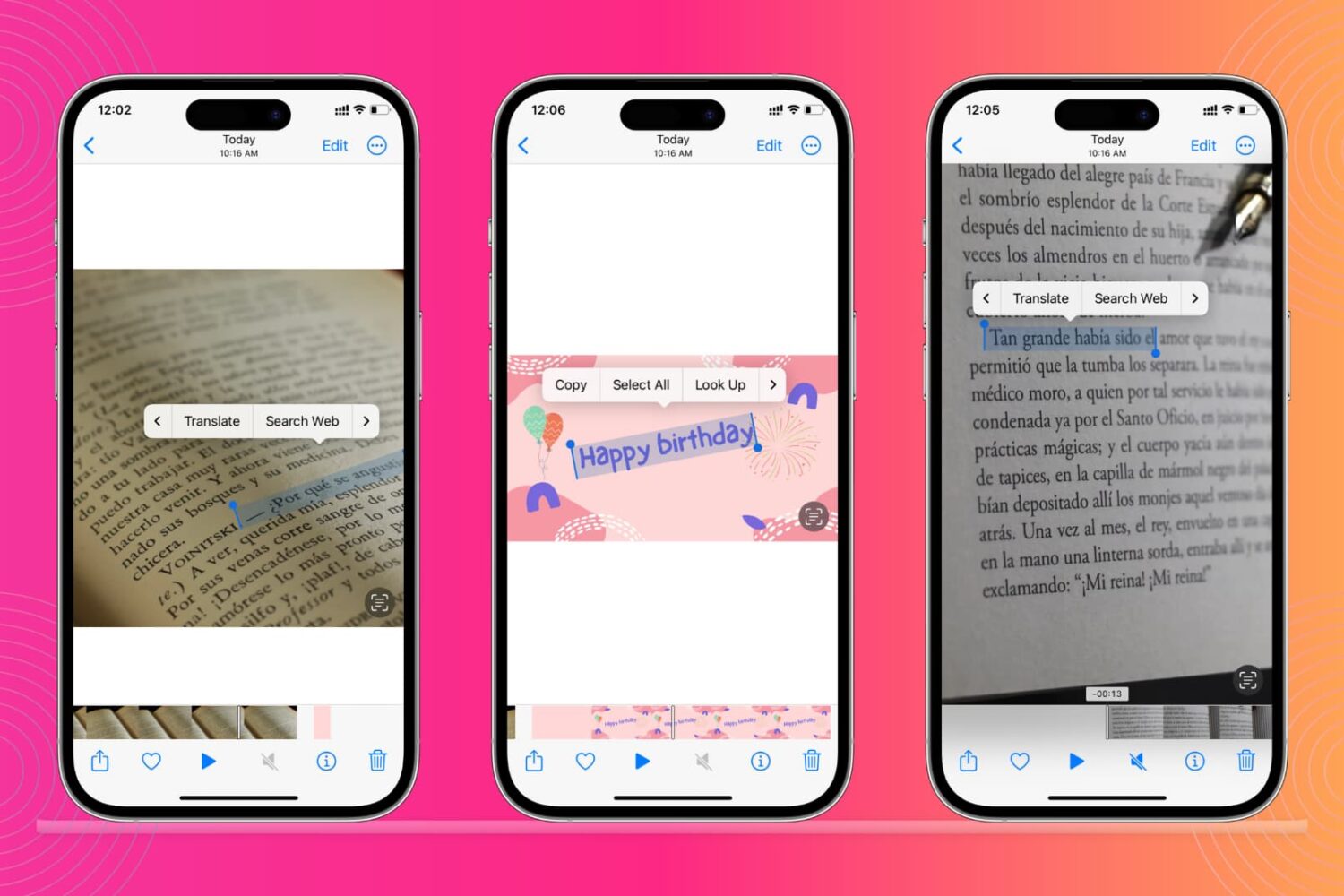

Live Text is one of those cool but somewhat niche features in iOS 15 that let users interact with text in an image as if it were text in a document. The feature works in images, in videos, and even in real time while looking through the camera.

This guide shows you how to recognize and extract text from images or videos using Live Text on your iPhone, iPad, and Mac.

Learn how to disable the Live Text feature on your iPhone, iPad, or Mac if you get distracted by the text selector when you’re working on an image or screenshot with text in it.