Apple’s decision to temporarily halt its Siri grading program while it reviews privacy policies and safeguards has prompted rivals Google and Amazon to suspend their own programs, which let employees listen to voice assistant recordings for quality control purposes.

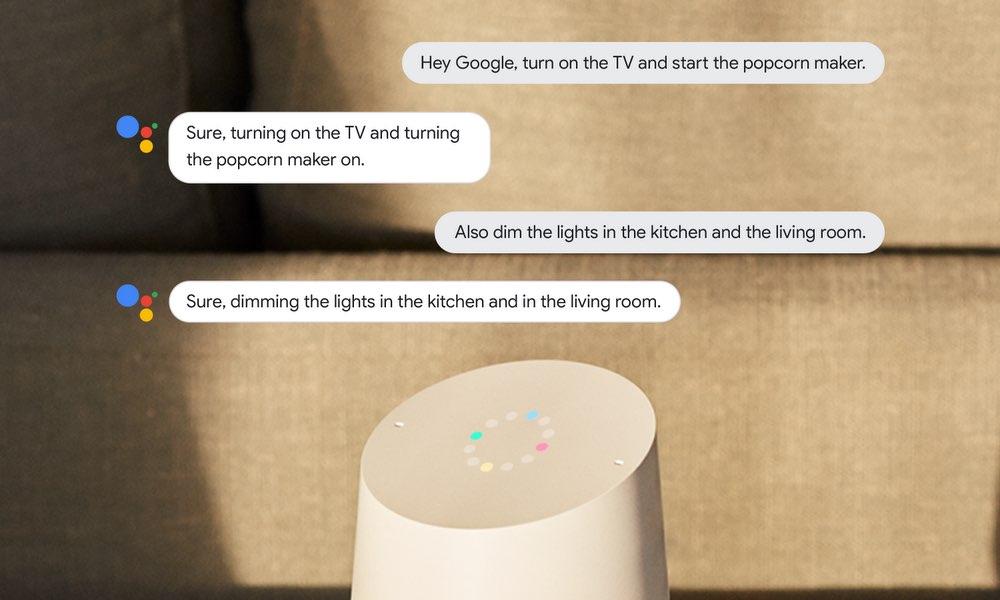

Wired reported in July that Google employs contractors tasked with listening to some audio recordings of customers’ interactions with its AI-powered Assistant in order to improve speech recognition. According to the report, some contractors heard conversations that were never meant to be captured because they had been recorded accidentally by Google Home devices.

One of the contractors provided about a thousand recordings to Belgian news organization VRT NWS, which said it was able to identify people heard in the audio clips. Many of the snippets, the story went on, contained Assistant conversations “that should never have been recorded and during which the command ‘OK Google’ was clearly not given.”

In a statement to The Verge last Friday, Google said it would pause “language reviews” in the European Union after Hamburg’s data protection commissioner in Germany called voice assistants “highly risky” and said that Google’s program was under investigation.

> We are in touch with the Hamburg data protection authority and are assessing how we conduct audio reviews and help our users understand how data is used. These reviews help make voice recognition systems more inclusive of different accents and dialects across languages. We don’t associate audio clips with user accounts during the review process, and only perform reviews for around 0.2 percent of all clips.

The German regulator added in its statement that other assistant providers, such as Apple and Amazon, are “invited” to “swiftly review” their own assistant-grading policies.

In April of this year, a Bloomberg report revealed that thousands of Amazon employees were listening to voice recordings captured by Echo speakers, a common industry practice for training the machine learning algorithms that power these assistants so they can improve over time. Some Amazon contractors reportedly had access to private information such as customers’ home addresses before the company moved to restrict their level of access.

Stories claiming that Echo speakers had secretly recorded family conversations didn’t help Amazon’s reputation either. According to Bloomberg, as of last Friday the online retail giant lets Alexa owners opt out of human review of their voice recordings via a newly added option in the settings menu of the Alexa mobile app.

The British newspaper the Guardian reported on the Siri grading initiative last week.

Apple responded by suspending the program worldwide. “We are committed to delivering a great Siri experience while protecting user privacy,” the company wrote in a statement. “While we conduct a thorough review, we are suspending Siri grading globally.”

Apple confirmed that an upcoming iOS software update will give customers new controls to opt in to or out of grading (currently, the only way to stop this data collection is to turn off both Siri and Dictation). According to the iPhone maker, about one percent of daily Siri requests were selected for the grading program. Siri audio snippets were anonymized before review, stripped of identifying information such as individual names, locations and Apple IDs, per Apple’s iOS Security Guide.

Siri recordings are retained for six months, after which the random identifier associated with them is erased and the clips may be saved “for use by Apple in improving and developing Siri for up to two years.”

I’m with Jim Dalrymple on this: I am certainly going to opt in to help Apple improve Siri. I want to be part of the solution rather than one of those people who constantly complain about Siri but won’t do anything to help Apple fix it.

And what’s your stance on this hot topic?

Let us know by leaving a comment below.