Artificial intelligence (AI) and machine learning (ML) researchers have long criticized Apple’s secrecy, to the point that it has hurt the firm’s recruiting efforts and prompted it to relax its tough stance against publicizing internal AI findings. Last weekend, Apple finally published its first AI paper, Forbes reported today.

Submitted for publication on November 15, the paper outlines a technique for improving how an algorithm is trained to recognize objects in images, using computer-generated images rather than real-world ones.

The paper explains that synthetic image data is often “not realistic enough”, leading the network to learn details present only in synthetic images and to generalize poorly to real images. The company’s solution to that problem: Simulated+Unsupervised learning.

Simulated+Unsupervised learning builds on a recent machine learning technique called generative adversarial networks (GANs), which increases the realism of a simulated image by pitting two neural networks against each other: one refines the simulated image, while the other tries to tell refined images apart from real ones.
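To make the "two networks pitted against each other" idea concrete, here is a minimal sketch of the opposing objectives in a GAN-style setup. It is not Apple’s implementation: the function names `discriminator_loss` and `refiner_loss` are hypothetical, the scores are assumed to be raw outputs (logits) from some discriminator network, and the standard binary cross-entropy loss is assumed. Any extra terms the paper may add to these losses are left out.

```python
import math

EPS = 1e-12  # small constant to avoid log(0)


def sigmoid(x):
    """Map a raw score (logit) to a probability that the image is real."""
    return 1.0 / (1.0 + math.exp(-x))


def discriminator_loss(real_scores, refined_scores):
    """Cross-entropy for the discriminator (hypothetical helper).

    The discriminator is rewarded for scoring real images as real (label 1)
    and refined synthetic images as fake (label 0).
    """
    loss_real = -sum(math.log(sigmoid(s) + EPS) for s in real_scores)
    loss_fake = -sum(math.log(1.0 - sigmoid(s) + EPS) for s in refined_scores)
    return (loss_real + loss_fake) / (len(real_scores) + len(refined_scores))


def refiner_loss(refined_scores):
    """Adversarial loss for the refiner (hypothetical helper).

    The refiner is rewarded when the discriminator scores its refined
    images as real, which is the opposite of what the discriminator wants.
    """
    total = -sum(math.log(sigmoid(s) + EPS) for s in refined_scores)
    return total / len(refined_scores)


# Illustrative logits only, not data from the paper.
real = [4.0, 5.0]
caught = [-4.0, -5.0]   # discriminator confidently flags refined images as fake
fooled = [4.0, 5.0]     # discriminator mistakes refined images for real ones

print("discriminator loss when it catches fakes:", discriminator_loss(real, caught))
print("discriminator loss when it is fooled:   ", discriminator_loss(real, fooled))
print("refiner loss when caught:", refiner_loss(caught))
print("refiner loss when it fools the critic:", refiner_loss(fooled))
```

The two losses move in opposite directions: whatever lowers the refiner’s loss raises the discriminator’s, and vice versa. In training, the two networks would be updated in alternation so that the refined images gradually become harder to distinguish from real ones.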

Machine learning experts often use synthetic rather than real-world images to train neural networks because synthetic image data is already labeled and annotated.

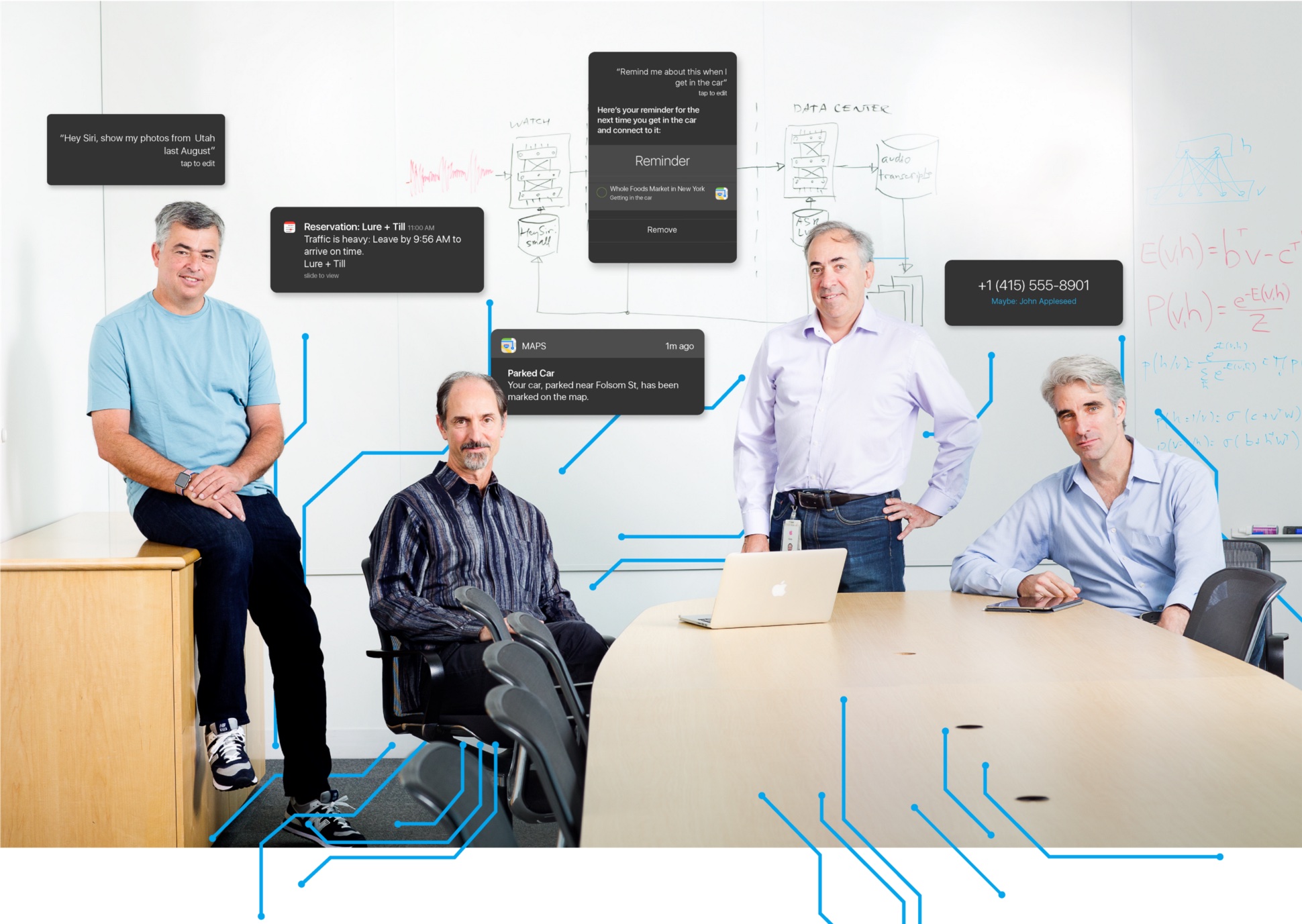

The paper was written by Apple researcher Ashish Shrivastava, who holds a PhD in computer vision from the University of Maryland, College Park, and co-authored by engineers Tomas Pfister, Oncel Tuzel, Wenda Wang, Russ Webb and Josh Susskind, the last of whom co-founded Emotient, the AI startup that Apple bought earlier this year.