With smartphone cameras getting better all the time and so many great moments to capture on the go and share with your Instagram followers, browsing the hundreds (often even thousands) of photographs in your iOS Camera Roll to find that great shot of your significant other you took sometime last year increasingly feels like looking for a needle in a haystack.

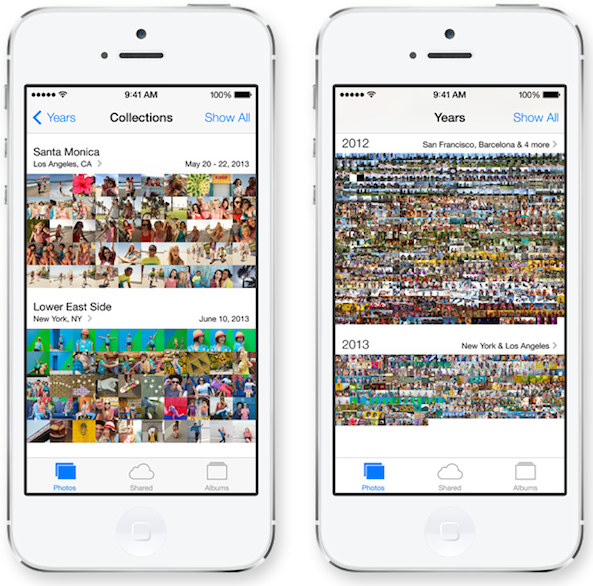

Sure, you can import iPhoto or Aperture albums or create your own right on your device. And yes, Apple has improved the photo management capabilities of the iPhone, iPod touch and iPad by introducing new features in iOS 7, such as the ability to automatically group your photographs by the time and location they were taken.

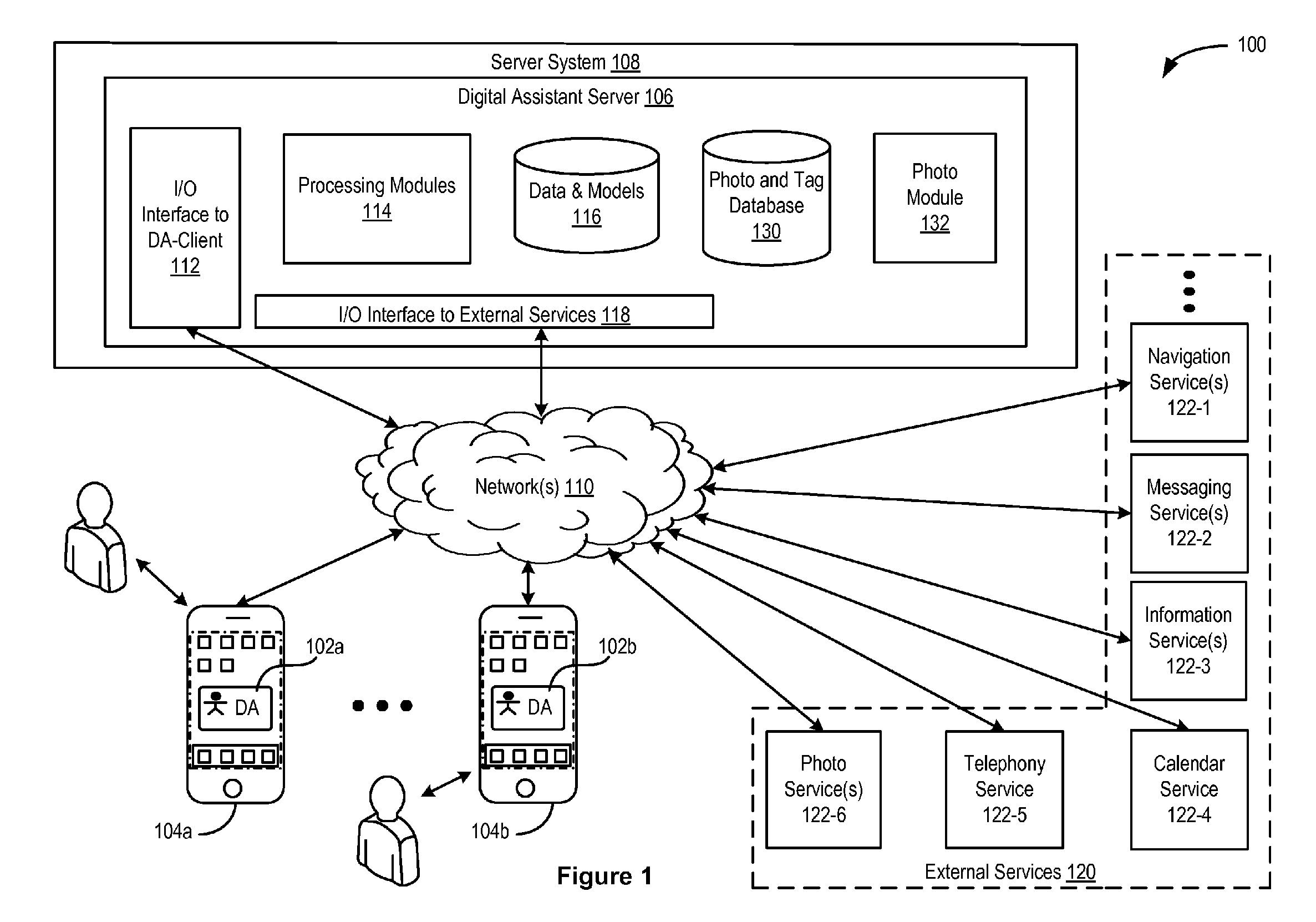

A newly published patent application reveals that Apple could tap Siri to let you sort through your photos, and even tag them, just by using your voice…

Apple notes that “while photo capture and digital image storage technology has improved substantially over the past decade, traditional approaches to photo-tagging can be non-intuitive, arduous, and time-consuming”.

Though photo-tagging has yet to make an appearance in Apple’s mobile operating system, the company’s patent application proposes that users tag photos with their voice rather than manually tagging them with the names of people or places.

You could tell Siri something along the lines of “This is me at the beach,” and the system would tag the corresponding shot accordingly.

The patent application, titled ‘Voice-Based Image Tagging and Searching’, was published by the United States Patent and Trademark Office on Thursday and discovered by AppleInsider. It notes that the system could also use each photo’s GPS location and capture time to tag your snaps automatically.

The filing reads:

> Once a photograph is tagged using the disclosed tagging techniques, other photographs that are similar may be automatically tagged with the same or similar information, thus obviating the need to tag every similar photograph individually.

AppleInsider offers other examples:

> Apple’s system could even recognize faces, buildings or landscapes to tag similar photos. For example, by a user telling Siri that they are captured in a photograph, the system could then intelligently tag other photos that capture the user’s face.

You could then simply ask Siri to “Show me photos of me at the beach” or “Show me photos from Hawaii taken in 2012” to filter out the relevant shots, without having to tag faces first.

Although Apple’s invention specifically mentions only tags that describe an entity, an activity or a location, the system could theoretically be expanded to let Siri analyze the information contained in each photo’s EXIF tags, something the current iOS Photos/Siri implementation doesn’t cover.

These metadata tags are created automatically when a picture is taken, but there are tools for editing them to your liking, such as the Exif Wizard and Exif-fi apps on the App Store or the PhotoExif tweak for jailbroken devices.

EXIF tags contain a wealth of information, such as the camera’s manufacturer and model, aperture and exposure time, image resolution, color depth, focal length, flash settings and so forth.
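To make that list a bit more concrete, here is a minimal sketch in Python (standard library only) that pulls a few of these fields out of a JPEG’s EXIF block. It is an illustration under simplifying assumptions, not how iOS, Photos or Siri actually read metadata: it only handles JPEG files with an APP1 “Exif” segment, only decodes the make, model, exposure time, f-number and focal length tags, and skips GPS, MakerNote and thumbnail data. The helper name `read_exif` is our own, hypothetical choice.

```python
import struct

# Sketch only: reads a handful of EXIF fields from a JPEG's APP1 segment.
# read_exif() is a hypothetical helper, not part of any Apple or Python API.

# Tag IDs defined by the EXIF/TIFF specifications
TAG_NAMES = {
    0x010F: "Make",
    0x0110: "Model",
    0x829A: "ExposureTime",
    0x829D: "FNumber",
    0x920A: "FocalLength",
}
EXIF_IFD_POINTER = 0x8769  # IFD0 entry pointing at the EXIF sub-IFD

# Size in bytes of one value of each TIFF field type
TYPE_SIZES = {1: 1, 2: 1, 3: 2, 4: 4, 5: 8, 7: 1, 9: 4, 10: 8}


def _parse_ifd(tiff, endian, offset):
    """Return {tag: (type, count, raw 4-byte value field)} for one IFD."""
    (count,) = struct.unpack_from(endian + "H", tiff, offset)
    entries = {}
    for i in range(count):
        base = offset + 2 + 12 * i  # each IFD entry is 12 bytes
        tag, typ, n = struct.unpack_from(endian + "HHI", tiff, base)
        entries[tag] = (typ, n, tiff[base + 8:base + 12])
    return entries


def _decode(tiff, endian, typ, n, field):
    """Decode the first value of an entry (ASCII strings are decoded whole)."""
    size = TYPE_SIZES.get(typ, 0) * n
    if size == 0:
        return None
    if size <= 4:
        data = field[:size]  # small values are stored inline
    else:
        (offset,) = struct.unpack(endian + "I", field)
        data = tiff[offset:offset + size]  # larger values live at an offset
    if typ == 2:  # ASCII, NUL-terminated
        return data.split(b"\x00", 1)[0].decode("ascii", "replace")
    if typ == 3:  # SHORT
        return struct.unpack_from(endian + "H", data)[0]
    if typ == 4:  # LONG
        return struct.unpack_from(endian + "I", data)[0]
    if typ in (5, 10):  # RATIONAL / SRATIONAL, returned as a float
        num, den = struct.unpack_from(endian + ("II" if typ == 5 else "ii"), data)
        return num / den if den else None
    return data  # other types: raw bytes


def _parse_tiff(tiff):
    """Parse IFD0 plus the EXIF sub-IFD and decode the tags we care about."""
    endian = {b"II": "<", b"MM": ">"}.get(tiff[:2])
    if endian is None:
        raise ValueError("bad TIFF byte-order mark")
    (ifd0,) = struct.unpack_from(endian + "I", tiff, 4)
    entries = _parse_ifd(tiff, endian, ifd0)
    if EXIF_IFD_POINTER in entries:
        (sub,) = struct.unpack(endian + "I", entries[EXIF_IFD_POINTER][2])
        entries.update(_parse_ifd(tiff, endian, sub))
    return {name: _decode(tiff, endian, *entries[tag])
            for tag, name in TAG_NAMES.items() if tag in entries}


def read_exif(jpeg: bytes) -> dict:
    """Return the selected EXIF fields of a JPEG file, or {} if none are found."""
    if jpeg[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG file")
    pos = 2
    while pos + 4 <= len(jpeg):
        marker, length = struct.unpack_from(">HH", jpeg, pos)
        if marker == 0xFFE1 and jpeg[pos + 4:pos + 10] == b"Exif\x00\x00":
            # TIFF data follows the 6-byte "Exif\0\0" header
            return _parse_tiff(jpeg[pos + 10:pos + 2 + length])
        if marker == 0xFFDA:  # start of scan: compressed image data follows
            break
        pos += 2 + length  # skip to the next segment
    return {}
```

Usage would look something like `with open("beach.jpg", "rb") as f: print(read_exif(f.read()))`, where `beach.jpg` is a placeholder file name. A real tool would more likely lean on a dedicated library or, on Apple platforms, the system’s own image frameworks; the point here is simply that fields like aperture and focal length sit in well-defined, machine-readable slots that a voice assistant could, in principle, query.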

On OS X, basic EXIF information can be viewed in the Finder by right-clicking the image file, choosing Get Info and expanding the More Info section.

Are you excited about this Apple patent?