A highly interesting technical article published October 1 on Apple's Machine Learning Journal blog has gone unnoticed, until today.

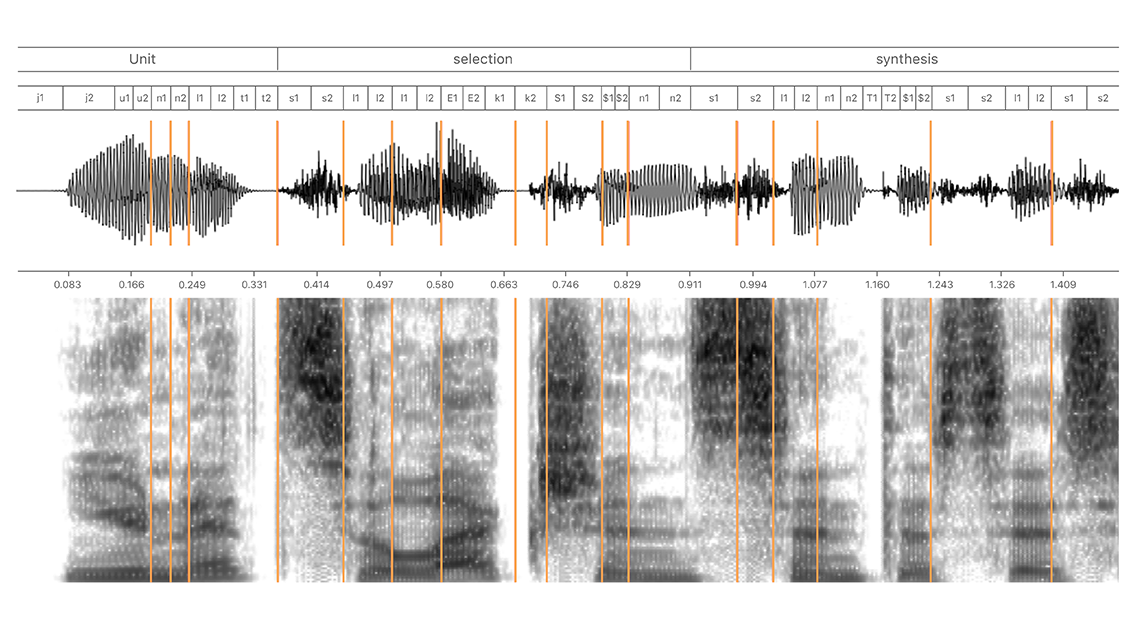

New machine learning article from Apple goes in depth on how “Hey Siri” does its magic

Apple on Wednesday published three new articles detailing the deep learning techniques used for the creation of Siri's new synthetic voices. The write-ups also cover other machine learning topics it'll be sharing later this week at the Interspeech 2017 conference in Stockholm, Sweden.

Hosted at machinelearning.apple.com, Apple's new blog called “Machine Learning Journal” is dedicated to sharing technical details of its machine learning research with the AI community.

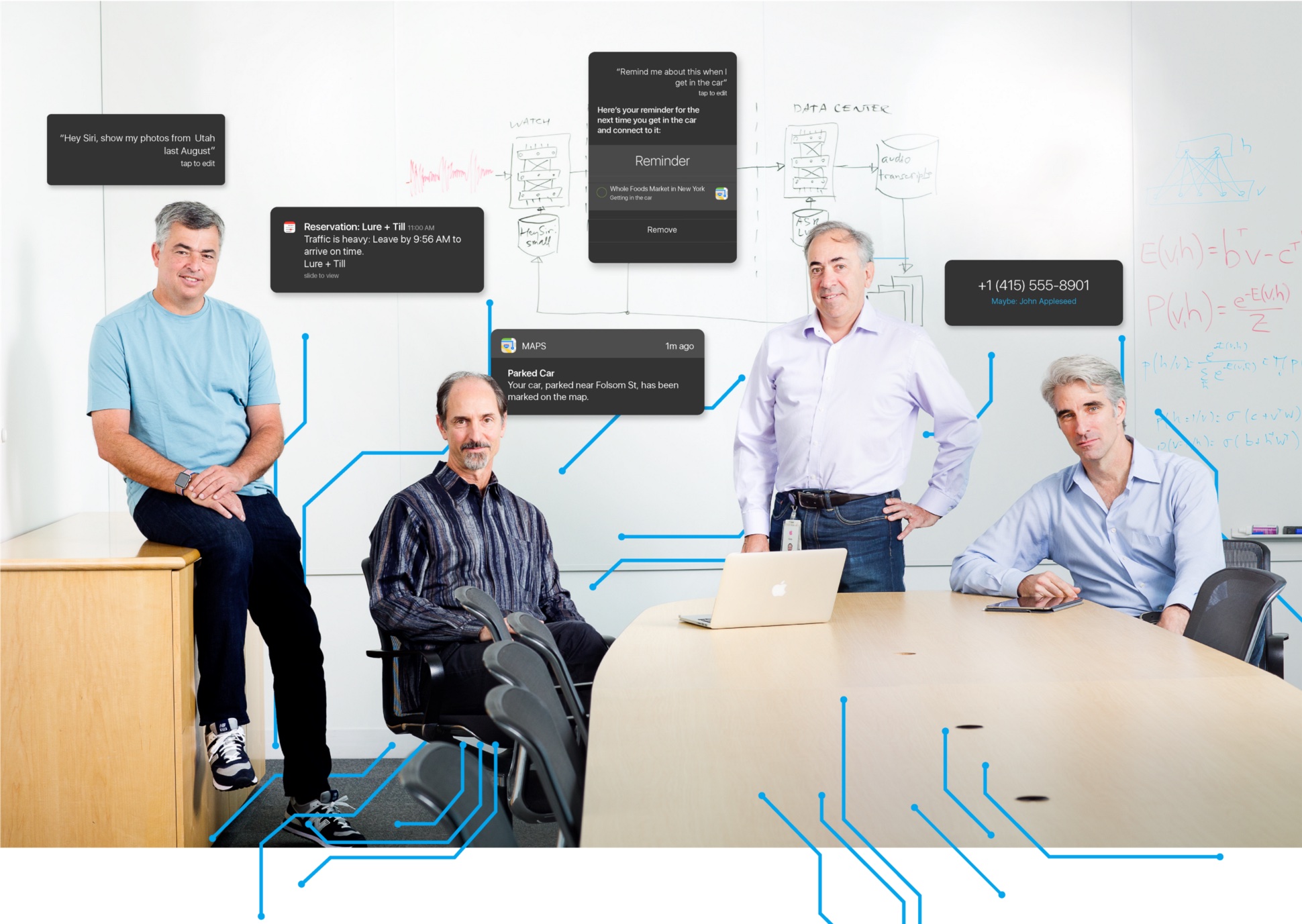

Apple is working on a processor dedicated specifically to AI-related tasks, reports Bloomberg. Citing sources familiar with the matter, the outlet says the chip is known internally as the Apple Neural Engine, and its goal is to improve the way devices handle facial recognition, speech recognition and other AI-related tasks.

Currently, Apple devices handle complex AI processes with two different chips—the main processor and the GPU. This new chip would let Apple offload those tasks onto a dedicated module designed specifically for complex artificial intelligence processing, resulting in better battery life and performance.

The Apple AI chip is designed to make significant improvements to Apple’s hardware over time, and the company plans to eventually integrate the chip into many of its devices, including the iPhone and iPad, according to the person with knowledge of the matter. Apple has tested prototypes of future iPhones with the chip, the person said, adding that it’s unclear if the component will be ready this year.

It's no surprise that Apple is looking to move quickly with this AI chip. Other manufacturers, such as Google and Qualcomm, are already using dedicated AI modules, and artificial intelligence sits at the heart of two major spaces the company is rumored to be interested in: self-driving vehicles and augmented reality.

Source: Bloomberg

Secure instant messaging service Telegram today launched voice calls in its desktop app for Mac, Windows and Linux nearly two months after implementing the voice-calling feature in Telegram Messenger for iPhone and iPad.

To make sure Telegram calls are the best in terms of quality, speed and security, the app updates a neural network after each call with data such as network speed, ping times, packet loss percentage and other factors that influence the quality of your VoIP calls.

Based on gathered data, the app optimizes dozens of parameters to improve the quality of future calls on the given device and network. By default, Telegram calls are lightweight.

https://twitter.com/telegram/status/864543129847955457

If there's a change in your connection during the call, the app will make necessary adjustments.

For instance, Telegram may boost your sound quality on a stable Wi-Fi connection or use less data if your Wi-Fi or cellular coverage is spotty.

Whenever possible, your calls will go over a peer-to-peer connection using the best audio codecs to save traffic while providing “crystal-clear quality.” When a peer-to-peer connection cannot be established, the app will use the closest server to you.

Telegram has its own distributed infrastructure all over the world to ensure the fastest possible delivery of your texts and a seamless voice-calling experience. VoIP calls on Telegram use end-to-end encryption, just like the app's Secret Chats feature, to prevent eavesdropping.

For voice calls, however, the team has improved the key exchange mechanism. “To make sure your call is 100 percent secure, you and your recipient just need to compare four emoji,” said the team.
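To illustrate the general idea of comparing a short visual fingerprint, here is a minimal sketch in Python. It is not Telegram's actual algorithm: the 16-emoji palette, the use of SHA-256 over the raw key, and the `emoji_fingerprint` helper are all assumptions made for illustration. The point is only that both parties derive the same four emoji when they hold the same key.

```python
import hashlib
from typing import List

# Hypothetical 16-emoji palette; a real app would likely use a much larger table.
EMOJI = ["🐶", "🐱", "🦊", "🐻", "🐼", "🐸", "🐵", "🐔",
         "🐧", "🐢", "🐙", "🦋", "🌵", "🍎", "🚀", "⭐"]


def emoji_fingerprint(shared_key: bytes, count: int = 4) -> List[str]:
    """Map a shared key to `count` emoji via SHA-256 (illustrative only)."""
    digest = hashlib.sha256(shared_key).digest()
    # Use one digest byte per emoji, reduced modulo the palette size.
    return [EMOJI[digest[i] % len(EMOJI)] for i in range(count)]


# Both sides hash the key they derived; matching emoji suggest matching keys.
caller_view = emoji_fingerprint(b"key negotiated for this call")
callee_view = emoji_fingerprint(b"key negotiated for this call")
print(" ".join(caller_view), "|", " ".join(callee_view))
```

With such a small palette the fingerprint carries only 16 bits, so this sketch is far too weak for real use; it only shows why an attacker who substitutes a different key would most likely produce a visibly different emoji sequence.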

Bottom line: the quality of Telegram calls will further improve as you and others use them, thanks to the built-in machine learning. And with group calling, video calling and screen sharing apparently on the team's to-do list, Telegram is bound to become a capable Skype alternative.

As soon as VoIP calls are enabled for your country, a phone icon will appear on every profile page in Telegram Desktop.

Telegram for iOS is available for free on the App Store.

Telegram Desktop can be downloaded from the Mac App Store or through the official website.

Apple has snapped up an artificial intelligence and machine learning startup called Lattice Data for a reported $200 million. Lattice has built an inference engine which turns so-called dark data into structured data sets that can be analyzed easily. Dark data is data stored in computer networks that cannot be analyzed directly because it's not in a proper format.

The acquisition is valued in the ballpark of $200 million.

The deal could bolster Apple's AI efforts and help its software turn things like text and images into structured items that can then be analyzed in traditional manners to derive insights. Apple has confirmed the acquisition with its standard boilerplate message issued to TechCrunch, saying it buys smaller technology companies from time to time.

Apple and Lattice did not immediately return a request for comment.

About 20 engineers from Lattice have now joined Apple. A source said that Lattice had been “talking to other tech companies about enhancing their AI assistants,” including Amazon’s Alexa and Samsung’s Bixby.

As per the story, which cited an anonymous source, the deal closed a few weeks ago.

The startup, headquartered in Menlo Park, California, was co-founded in 2015 by Christopher Ré, Michael Cafarella, Raphael Hoffmann and Feng Niu as the commercialization of DeepDive, a system created at Stanford to extract value from dark data.

https://www.youtube.com/watch?v=A_E0CPu-SeU

The company's CEO is Andy Jacques, a seasoned enterprise executive who joined last year.

“Lattice turns dark data into structured data with human-caliber quality at machine-caliber scale,” according to the official Lattice website. “We model the known as features and the unknown as random variables connected in a factor graph.”
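As a rough illustration of what "random variables connected in a factor graph" means, the following Python sketch builds a toy graph and computes marginal probabilities by brute-force enumeration. The variable names, factor weights and the `marginals` helper are hypothetical, and this is not Lattice's or DeepDive's code; production systems typically rely on approximate inference because enumeration does not scale.

```python
import itertools
import math

# Two unknown boolean variables (hypothetical candidate facts).
VARIABLES = ["fact_a", "fact_b"]

# Each factor is (weight, predicate over an assignment). Weights are made up.
FACTORS = [
    (1.5, lambda a: a["fact_a"]),                 # a known feature supports fact_a
    (-0.5, lambda a: a["fact_b"]),                # weak evidence against fact_b
    (2.0, lambda a: a["fact_a"] == a["fact_b"]),  # the two facts tend to agree
]


def score(assignment):
    """Unnormalized probability: exp of the summed weights of satisfied factors."""
    return math.exp(sum(w for w, holds in FACTORS if holds(assignment)))


def marginals():
    """Exact marginal P(variable = True) by enumerating every possible world."""
    worlds = [dict(zip(VARIABLES, values))
              for values in itertools.product([False, True], repeat=len(VARIABLES))]
    z = sum(score(w) for w in worlds)  # partition function
    return {v: sum(score(w) for w in worlds if w[v]) / z for v in VARIABLES}


for name, p in marginals().items():
    print(f"P({name} = True) = {p:.3f}")
```

Here the agreement factor pulls `fact_b` toward true even though its own evidence is slightly negative, which is the kind of joint reasoning a factor graph is meant to capture.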

Lattice's DeepDive framework has been used successfully in a diverse set of projects, ranging from a DARPA-funded human trafficking program to geology and paleontology to medical genetics, pharmacogenomics and more.

According to the website:

Data quality is in the DNA of Lattice. Our goal is not just to match human-level quality, but also to do so at unprecedented speed and scale. We build systems that win competitions and outperform expert readers.

We continuously push the envelope on machine learning speed and scale with our bleeding-edge systems research. For years, we have been building systems and applications that involve billions of webpages, thousands of machines and terabytes of data.

We can only speculate as to how Apple plans to apply Lattice's technology to its products.

It's probably safe to assume that Apple could improve object and scene recognition across its Photos service and the accompanying apps. More important than that, Lattice technology could be used to realize iPhone 8's rumored camera augmented reality features while giving Siri the ability to analyze text and images in Messages.

A recent patent application suggested potential Siri integrations with the iMessage platform. Aside from Messenger-like chatbot functionality for Siri in Messages, Apple's invention could let users, say, ask Siri to send an image of a Volkswagen Beetle to a contact.

Lattice's framework could also help enhance Apple's neural networks and machine learning.

That's because unlike traditional machine learning, Lattice does not require laborious manual annotations. In taking advantage of domain knowledge and existing structured data to bootstrap learning via distant supervision, Lattice solves data problems with data.
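Distant supervision can be sketched in a few lines of Python. The idea is to label candidate mentions automatically by looking them up in existing structured data instead of annotating them by hand. The knowledge base, names, sentences and the `distant_label` helper below are invented for illustration and are not taken from Lattice.

```python
# Hypothetical structured data: pairs already known to be spouses.
KNOWN_SPOUSES = {("Alice Smith", "Bob Smith")}

# Hypothetical candidate mentions extracted from unstructured text.
CANDIDATES = [
    ("Bob Smith", "Alice Smith", "Bob Smith and his wife Alice Smith attended."),
    ("Alice Smith", "Carol Jones", "Alice Smith met Carol Jones on Tuesday."),
]


def distant_label(person_a, person_b):
    """Return True if the pair is in the knowledge base (either order), else None."""
    if (person_a, person_b) in KNOWN_SPOUSES or (person_b, person_a) in KNOWN_SPOUSES:
        return True
    return None  # unknown: left unlabeled rather than assumed negative


# Build a training set without any manual annotation.
training_set = [(sentence, distant_label(a, b)) for a, b, sentence in CANDIDATES]
for sentence, label in training_set:
    print(label, "|", sentence)
```

The labels produced this way are noisy, since a sentence mentioning a known pair does not necessarily express the relation, so they are usually treated as weak evidence for a downstream model rather than as ground truth.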

Apple's HealthKit, ResearchKit and CareKit frameworks may benefit from Lattice tech, too.

Amazon's Echo will soon get some real competition as Google gears up to launch its Home smart connected speaker in the United Kingdom this spring. According to Rick Osterloh, Google's Vice President of Hardware, Home's “artificial intelligence skills and vast data” will give it the edge over Amazon's voice-activated wireless speaker.

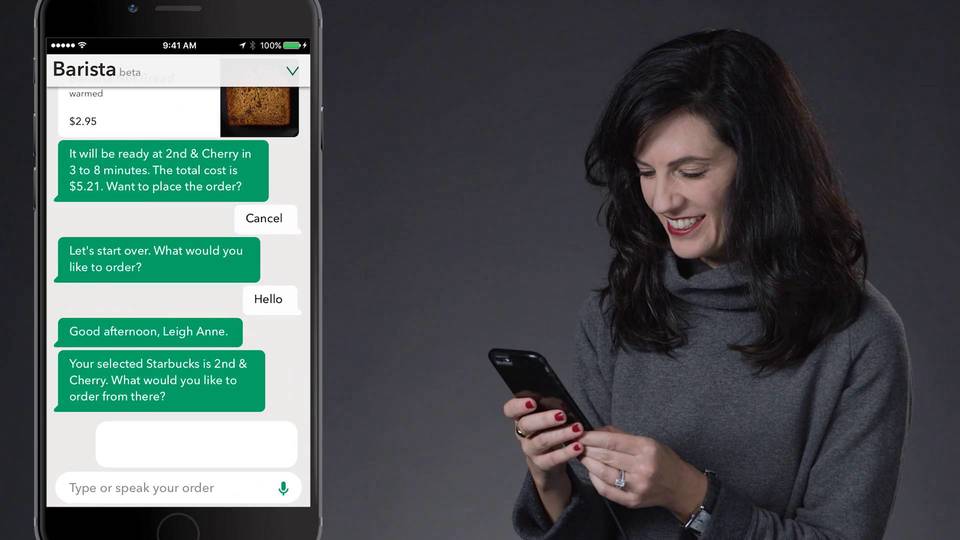

You'll soon be able to order your favorite latte by using your voice as Starbucks today kicked off a limited beta test of a new artificial intelligence assistant inside its mobile app for iPhone. Aptly named My Starbucks Barista, this new feature will let you order items by conversing with the virtual barista within a new messaging UI, the firm said Monday.

Apple has officially joined the non-profit organization Partnership on AI as a founding member alongside other technology giants like Amazon, Facebook, Google, IBM and Microsoft. Apple has been involved and collaborating with the Partnership on AI since before it was first announced last year and is “thrilled” to formalize its membership, the organization said Friday.

According to Bloomberg, joining the non-profit is the latest sign that Apple is opening up more and becoming less secretive when it comes to artificial intelligence.

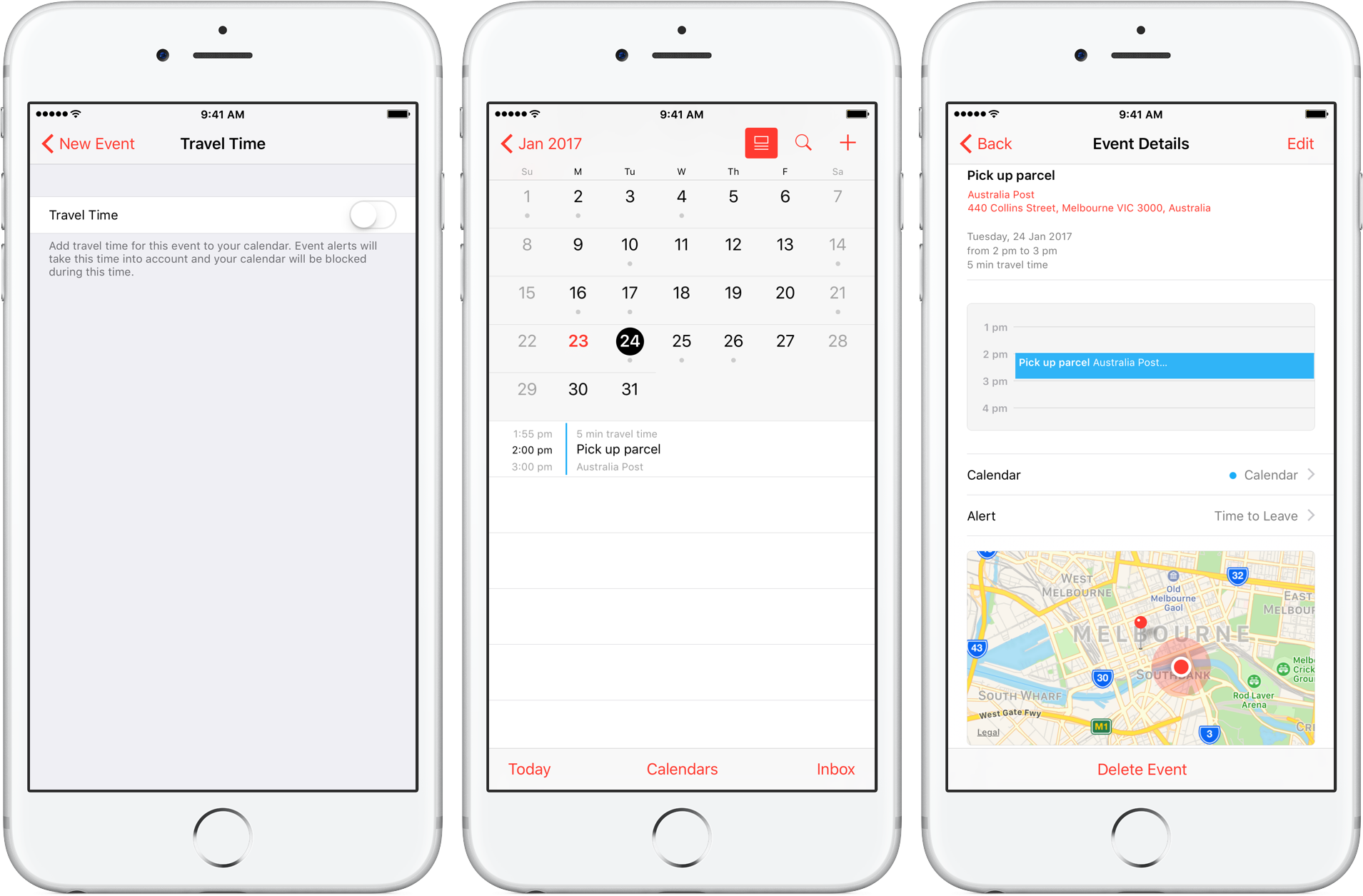

Travel Time is a nifty addition to Apple’s Calendar app, capable of precisely estimating the duration of your upcoming trip based on parameters such as mileage and traffic. Used properly, it can notably ease some of your daily scheduling woes, but paradoxically, a large contingent of regular Calendar users still routinely overlook the feature.

Formerly introduced as frequent locations and traffic conditions widgets, the service has only slowly gained traction amongst users. Today, however, Travel Time has come of age and is now neatly integrated into one of the most popular productivity applications on both iOS and macOS. So if you didn’t get the memo on the virtues of Travel Time in Calendar, here’s what you need to know.

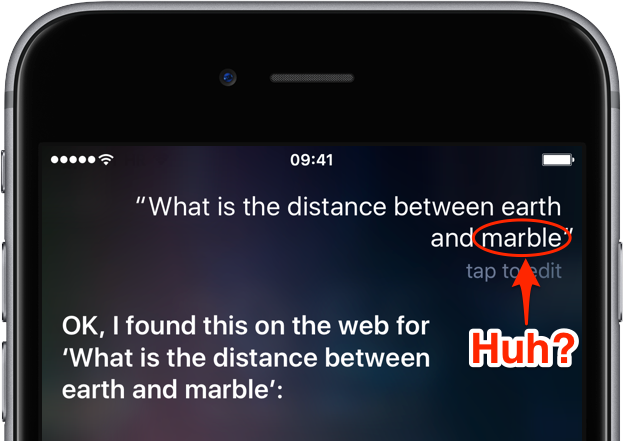

You may be aware that there is already a feature in iOS that sort of lets you type in your questions to Siri instead of using voice commands. It's quite handy for those situations when talking aloud isn't an option or Siri repeatedly fails to recognize what you said. Starting with iOS 10, Siri includes a “Maybe You Said” feature.

Taking advantage of machine learning and artificial intelligence, it suggests corrections for mispronunciations or incorrectly recognized queries. In this post, you'll learn how to leverage this feature to avoid having to manually correct any mispronounced words.