In a new research note issued to clients this morning, respected Apple analyst Ming-Chi Kuo has expressed his belief that the Cupertino technology firm won’t integrate an infrared depth-sensing system on the rear of new iPhones due in 2019, citing two reasons.

First, the current dual-camera setup is sufficiently powerful at capturing depth information to enable features like Portrait shooting mode. Second, an infrared system like the TrueDepth module in the notch of the current iPhone X family still wouldn’t offer enough distance and depth information to enable richer augmented reality experiences.

By leveraging the offset of their two cameras, current dual-lens iPhones generate so-called disparity maps that are needed for features such as Portrait Mode and augmented reality.
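To illustrate how an offset between two cameras turns into distance, here is a minimal Python sketch of the textbook stereo relation, depth = focal length × baseline / disparity. It assumes an idealized, rectified pinhole camera pair. The function name and the sample numbers are hypothetical and are not actual iPhone specifications or Apple's implementation.

```python
def depth_from_disparity_m(focal_length_px: float,
                           baseline_m: float,
                           disparity_px: float) -> float:
    """Estimate depth in metres for one pixel of a rectified stereo pair.

    focal_length_px: focal length expressed in pixels (assumed value).
    baseline_m: distance between the two camera centres, in metres.
    disparity_px: horizontal shift of the same point between both images.
    """
    if disparity_px <= 0:
        # Zero disparity means the point is effectively at infinity.
        raise ValueError("disparity must be positive")
    # Nearby objects shift more between the two views, so depth falls
    # as disparity grows.
    return focal_length_px * baseline_m / disparity_px


# Hypothetical numbers: 2800 px focal length, 1 cm baseline, 14 px shift.
# This works out to roughly 2 metres.
print(depth_from_disparity_m(2800.0, 0.01, 14.0))
```

Because the baseline on a phone is only around a centimetre, small disparity errors translate into large depth errors at longer distances, which is one reason dual-camera depth works best for nearby subjects.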

“We believe that iPhone’s dual-camera can simulate and offer enough distance/depth information necessary for photo-taking; it is therefore unnecessary for the 2H19 new iPhone models to be equipped with a rear-side ToF,” the analyst wrote in the note, a copy of which was obtained by MacRumors.

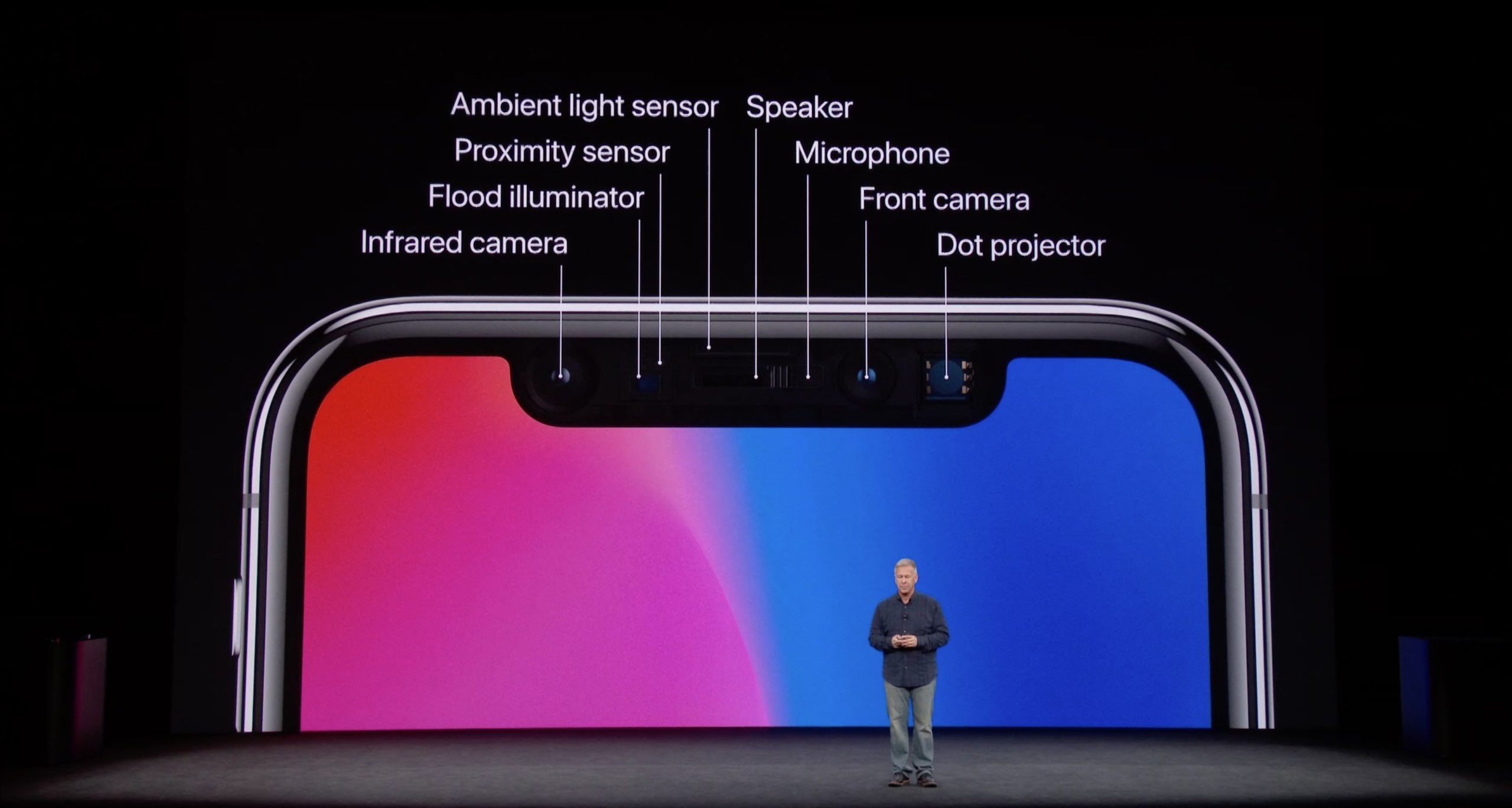

iPhone X’s TrueDepth system uses a vertical-cavity surface-emitting laser (VCSEL) module and relies on a so-called structured light approach. An infrared emitter projects a pattern of 30,000 infrared dots (invisible to the human eye), and an infrared camera measures their distortion to calculate a disparity map (the farther the dot, the more it distorts).

A time-of-flight (ToF) system, by contrast, calculates the time it takes pulses of light to travel to and from a target.
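The arithmetic behind that measurement is simple: the distance is the speed of light multiplied by the round-trip time, divided by two. A minimal Python sketch, in which the function name `tof_distance_m` and the sample timing are illustrative assumptions rather than anything from Kuo's note or Apple's hardware:

```python
# Speed of light in a vacuum, in metres per second (exact by definition).
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_seconds: float) -> float:
    """Distance to a target given the round-trip time of a light pulse."""
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels to the target and back, so halve the path length.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


# A pulse that returns after 10 nanoseconds hit something about 1.5 m away.
print(round(tof_distance_m(10e-9), 3))
```

The tiny time scales involved (nanoseconds for room-sized distances) hint at why a precise ToF sensor is demanding to build.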

While ToF does not improve basic photo-taking functionality, it yields considerably more accurate and higher-resolution depth maps than the structured light technique. ToF can also capture depth maps of objects that are farther away, unlike the front-facing TrueDepth camera on the iPhone X family, which cannot capture depth data reliably from more than a few inches away.

In short, while the ToF technology is good enough to simulate depth-of-field photography and provide the basis for basic augmented reality experiences, it is not yet ready for the kind of augmented reality revolution Apple is rumored to be working on.

In Kuo’s view, the “revolutionary augmented reality experience” that the Cupertino technology giant is ultimately aiming to develop would require a sophisticated ToF camera system working in conjunction with a “more powerful Apple Map database,” 5G connectivity and the company’s rumored augmented-reality glasses.

Such a headset would overlay computer-generated images on top of the real world.

The rumored headset accessory would poll a ToF system to calculate depth maps over shorter distances while loading previously created depth maps on demand using an enhanced Maps backend and a low-latency 5G connection.

In that light, an enhanced Maps with augmented reality features should bear fruit when an Apple-branded headset makes its appearance around 2020. According to Kuo, augmented reality Apple Maps will be a killer app for Apple’s next-generation augmented reality experience.

Thoughts?