Bloomberg reported this morning that Apple is working on a rear-facing 3D sensor, distinct from the front TrueDepth camera, that would improve augmented reality features and depth mapping.

The TrueDepth camera builds a three-dimensional mesh of a user’s face by projecting a pattern of 30,000 infrared dots onto it. Using a structured-light approach, the system then measures how each dot is distorted to build the 3D image used for authentication.

The planned rear-facing sensor for 2019 iPhones is said to use a technique known as time-of-flight, which measures how long it takes infrared light to bounce off surrounding objects and return to the sensor in order to create a three-dimensional picture of the environment.
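The arithmetic behind time-of-flight is simple: light travels to the object and back, so the distance is the speed of light times the round-trip time, divided by two. The sketch below is a simplified illustration that assumes the sensor reports a direct round-trip time per pixel; many real time-of-flight sensors instead infer that time from the phase shift of modulated light. The function names are hypothetical and do not describe Apple's implementation.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_s: float) -> float:
    """Distance to a surface from a measured round-trip time.

    Assumes a direct timing measurement (hypothetical helper).
    The light covers the path twice, out and back, so we halve it.
    """
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def depth_map(round_trip_times: list[list[float]]) -> list[list[float]]:
    """Turn a grid of per-pixel round-trip times into a grid of distances."""
    return [[tof_distance_m(t) for t in row] for row in round_trip_times]


# A 10-nanosecond round trip corresponds to roughly 1.5 meters.
print(tof_distance_m(10e-9))
```

The nanosecond scale is the point: resolving centimeters of depth means timing light to within tens of picoseconds, which is why the technique leans on a specialized image sensor.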

“The company is expected to keep the TrueDepth system, so future iPhones will have both front and rear-facing 3-D sensing capabilities,” added the report.

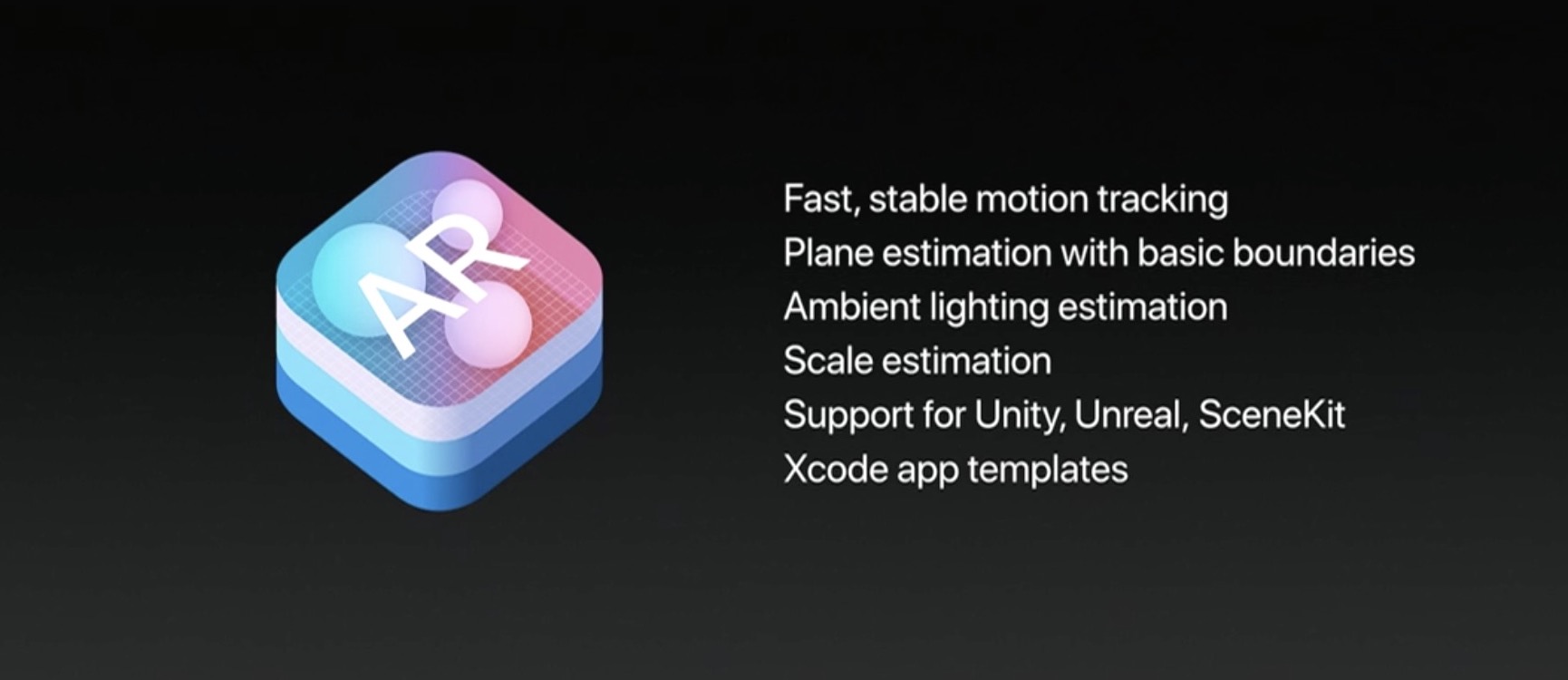

A rear sensor would enable more sophisticated augmented reality apps, accurate depth mapping and more reliable tracking. ARKit currently fuses the raw camera feed with motion data from onboard sensors to detect flat surfaces and track virtual objects overlaid onto the real world, but it struggles with vertical planes such as walls or windows.

For example, if a digital tiger walks behind a real chair, the chair is still displayed behind the animal, which destroys the illusion. A rear 3D sensor would remedy that by telling the software that the chair is closer to the camera than the tiger.
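With a per-pixel depth map of the real scene, the tiger-and-chair problem reduces to a depth test: draw the virtual pixel only where the virtual object is nearer than the real surface. The sketch below is a hypothetical, simplified illustration (plain Python lists, strings standing in for pixel colors); it is not ARKit's API, and the function and parameter names are assumptions.

```python
from typing import Optional


def composite(
    camera_rgb: list[list[str]],
    real_depth: list[list[float]],
    virtual_rgb: list[list[Optional[str]]],
    virtual_depth: list[list[float]],
) -> list[list[str]]:
    """Per-pixel occlusion (hypothetical helper).

    A virtual pixel is shown only if the virtual object covers that
    pixel (not None) and is closer to the camera than the real scene.
    Otherwise the camera pixel is kept, so real objects in front,
    like the chair, hide the virtual tiger.
    """
    out = []
    for y, camera_row in enumerate(camera_rgb):
        row = []
        for x, camera_px in enumerate(camera_row):
            v = virtual_rgb[y][x]
            if v is not None and virtual_depth[y][x] < real_depth[y][x]:
                row.append(v)
            else:
                row.append(camera_px)
        out.append(row)
    return out


# Chair at 1 m in the left pixel, wall at 3 m in the right; tiger at 2 m.
print(composite([["C", "C"]], [[1.0, 3.0]], [["T", "T"]], [[2.0, 2.0]]))
```

Here the chair hides the tiger on the left while the tiger stays visible against the wall on the right. Without a real-world depth value per pixel, the renderer has nothing to compare against.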

“While the structured light approach requires lasers to be positioned very precisely, the time-of-flight technology instead relies on a more advanced image sensor,” wrote authors Alex Webb and Yuji Nakamura. “That may make time-of-flight systems easier to assemble in high volume.”

Apple has reportedly started discussions with several suppliers that could build the new sensor, including Infineon, Sony, STMicroelectronics and Panasonic. Interestingly, Google has been working with Infineon on depth mapping as part of Project Tango, which it unveiled in 2014.

Should future iPhones have both front and rear-facing 3D sensing capabilities?

Sound off in the comments!