Remember iPhone location tracking? That was child’s play. Should Apple have its way, future versions of its iOS mobile operating system might record every event you ever perform on your iPhone, iPod or iPad, creating a uniquely detailed footprint of your interactions with iOS devices. It would make geotagging a system-wide feature.

Every gesture invoked and input event registered could get timestamped and stored. Every phone call, every keystroke, every tweet, like, comment, game achievement, song played, you name it – basically any set of device data might get logged automatically.

But don’t get all worked up about the privacy implications: good ol’ Apple isn’t up to anything nefarious. Matter of fact, the goal of this invention is a noble one: to provide a searchable, actionable lifestream of sorts that makes your mobile computing easier, more meaningful and tailored to you.

So how does the Cupertino firm plan to pull it off? Simple: by using a journaling file system that’s already present in OS X…

Enter Apple’s U.S. Patent No. 8,316,046, titled “Journaling on mobile devices” and awarded by the U.S. Patent and Trademark Office on Tuesday.

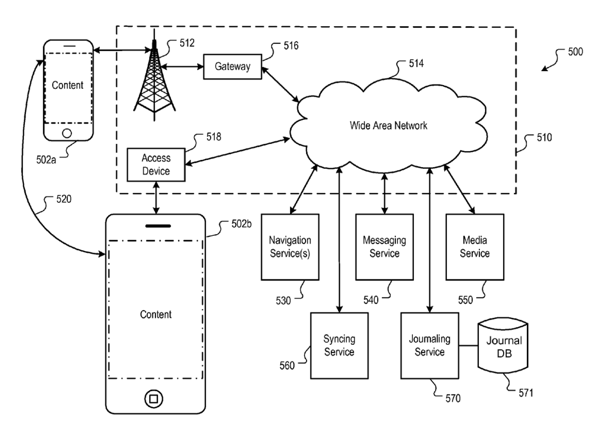

The filing credits Apple engineers Ronald K. Huang and Patrick Piemonte as inventors and describes a journaling subsystem on a mobile device which stores event data related to apps or other subsystems running on the mobile device.

The idea isn’t new and appears to borrow from the work of Dominic Giampaolo, who invented the BeOS file system BFS and now works at Apple. Implementing such a file system on a mobile device that’s always on you could unleash a realm of possibilities.

The event data can be stored and indexed in a journal database so that a timeline of past events can be reconstructed in response to search queries.

When journaling is active, the system collects all event data and stores it in the central journal database, updating the database index on a regular basis.

Upon receiving a search query, iOS simply aggregates event data based on the query and index, generating search results from the aggregated event data.
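To make the collect, index and query steps a bit more concrete, here is a minimal Python sketch of the idea. It is not Apple's implementation: the `Event` and `Journal` names, the in-memory list standing in for the journal database, and the by-kind index are all assumptions made purely for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class Event:
    """One logged event (hypothetical shape, not taken from the patent)."""
    timestamp: datetime
    kind: str                                  # e.g. "email", "call", "song"
    payload: dict = field(default_factory=dict)


class Journal:
    """Toy in-memory journal: an append-only event log plus an index by kind."""

    def __init__(self):
        self.events = []                       # stands in for the journal database
        self.index = defaultdict(list)         # kind -> positions in self.events
        self._indexed_upto = 0                 # events before this position are indexed

    def record(self, event):
        """Append an event; it becomes searchable after the next index update."""
        self.events.append(event)

    def update_index(self):
        """Index events recorded since the last update (meant to run periodically)."""
        for pos in range(self._indexed_upto, len(self.events)):
            self.index[self.events[pos].kind].append(pos)
        self._indexed_upto = len(self.events)

    def search(self, kind, start, end):
        """Aggregate indexed events of one kind in [start, end), oldest first."""
        hits = (self.events[pos] for pos in self.index.get(kind, []))
        return sorted(
            (e for e in hits if start <= e.timestamp < end),
            key=lambda e: e.timestamp,
        )
```

Recording a few events, calling `update_index()` and then `search("email", start, end)` returns only the emails in that window, sorted by time, which is roughly the timeline reconstruction the filing talks about.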

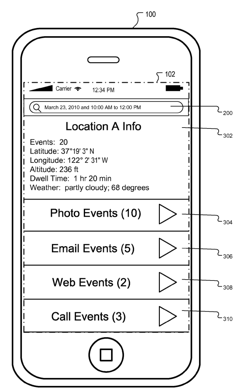

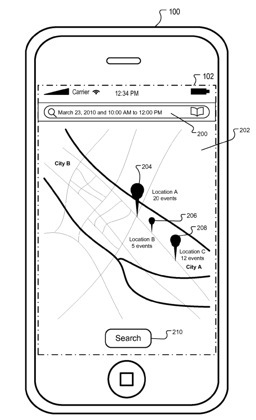

In some implementations, a timeline can be reconstructed with markers on a map display based on search results.

The drawing above illustrates that workflow.

“When the user interacts with a marker on the map display, the event data collected by the mobile device is made available to the user”, Apple writes.

The same goes for chats, email messages and so forth.

At the most basic level, say, one could type in “October 1, 2012” and “10:00am to 12:00pm” and “email” to quickly filter only the emails for the specified period.
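As a rough illustration of that kind of filter, the Python sketch below turns the three inputs into a time window and an event type. The function names, the `(timestamp, kind, description)` tuple layout and the exact input formats are assumptions for the example, not anything the patent spells out; parsing month names and am/pm this way also assumes an English locale.

```python
from datetime import datetime


def parse_window(date_text, time_range_text):
    """Turn 'October 1, 2012' and '10:00am to 12:00pm' into (start, end) datetimes."""
    day = datetime.strptime(date_text, "%B %d, %Y").date()
    start_text, end_text = (part.strip() for part in time_range_text.split(" to "))
    start = datetime.combine(day, datetime.strptime(start_text, "%I:%M%p").time())
    end = datetime.combine(day, datetime.strptime(end_text, "%I:%M%p").time())
    return start, end


def filter_events(events, kind, start, end):
    """Keep (timestamp, kind, description) tuples of one kind within [start, end]."""
    return [e for e in events if e[1] == kind and start <= e[0] <= end]
```

Feeding `parse_window("October 1, 2012", "10:00am to 12:00pm")` into `filter_events(events, "email", start, end)` would leave only the emails logged between 10:00 and noon that day.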

The system-wide logging could be plugged into Siri – imagine being able to ask questions like “Siri, what was I doing between yesterday 2pm and 5pm?” or “who have I been texting most this month?”
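A question like the second one boils down to a simple count over the journal. Here is a hedged Python sketch, assuming text messages are logged as hypothetical `(timestamp, kind, contact)` tuples with the kind `"sms"`; how Siri would actually map a spoken question onto such a query is not something the patent describes.

```python
from collections import Counter
from datetime import datetime


def most_texted(events, year, month):
    """Return (contact, count) for the most-texted contact in a month, or None."""
    counts = Counter(
        contact
        for timestamp, kind, contact in events
        if kind == "sms" and (timestamp.year, timestamp.month) == (year, month)
    )
    return counts.most_common(1)[0] if counts else None
```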

And by tapping the Related People field in Contacts (that’s how you tell iOS/Siri who your wife is and let Apple build your social graph) and leveraging iCloud, Apple could tell you the time and place your wife made a purchase with her Passbook.

That’s just scratching the surface.

I’m scatter-shooting here, but here’s a thing: by letting Apple log our life via the iPhone – or, at the very least, log everything we do on our beloved devices – and upload that data to iCloud for heavy-duty number crunching and analysis, a Google Now-like service becomes a real possibility.

In layman’s terms, systematically gathering intelligence on every possible thing you do with your iPhone would, in theory, go a long way toward helping Apple figure out your personal habits: eating, sleeping, surfing, emailing, entertainment and whatnot.

And all of this can then be used to deliver precisely the right pieces of information, when you need them, automatically, without you even realizing you needed them in the first place.

Now Uncle Sam can know when and where you played Angry Birds.

How spooky is that?

Is Big Brother coming to get you?