Apple on Wednesday published three new articles detailing the deep learning techniques used to create Siri’s new synthetic voices. The write-ups also cover other machine learning topics that the company will present later this week at the Interspeech 2017 conference in Stockholm, Sweden.

The following new articles from the Siri team are now available:

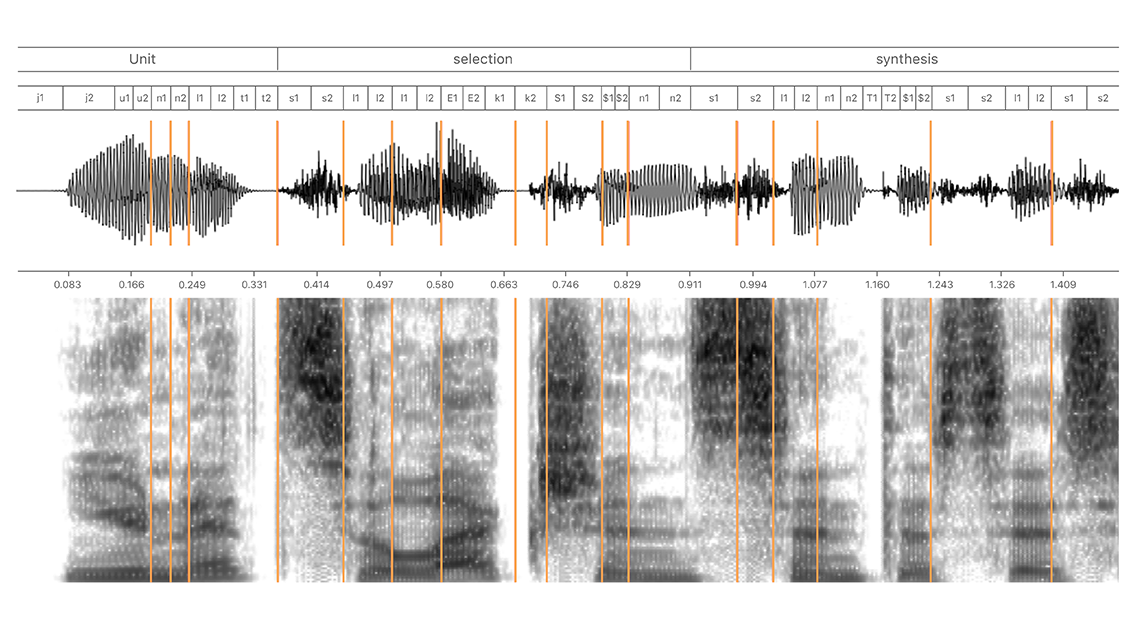

- Deep Learning for Siri’s Voice—details how on-device deep mixture density networks are used for hybrid unit selection synthesis

- Inverse Text Normalization—approached from a labeling perspective

- Improving Neural Network Acoustic Models—by taking advantage of cross-bandwidth and cross-lingual initialization, if you know what I mean

If you have trouble following the highly technical language of the latest write-ups, you’re not alone.

I have no problem diving deep into Apple’s developer documentation and other specialized material, but I feel downright stupid just reading those detailed explainers.

Among other improvements, iOS 11 delivers more intelligence and a new voice for Siri.

No longer does Apple’s personal assistant use phrases and words recorded by voice actors to construct its sentences and responses. Instead, Siri on iOS 11 (and other platforms) adopts programmatically created male and female voices. That’s a much harder voice synthesis technique, but it allows for some really cool creative possibilities.

For instance, the new Siri voices take advantage of on-device machine learning and artificial intelligence to adjust intonation, pitch, emphasis and tempo in real time while speaking, taking the context of the conversation into account. Apple’s article titled “Deep Learning for Siri’s Voice” details the various deep learning techniques behind iOS 11’s Siri voice improvements.

According to the opening paragraph:

> Siri is a personal assistant that communicates using speech synthesis. Starting in iOS 10 and continuing with new features in iOS 11, we base Siri voices on deep learning. The resulting voices are more natural, smoother, and allow Siri’s personality to shine through.

The new write-ups were published on the official Apple Machine Learning Journal blog, established a few weeks ago to cover the company’s efforts in machine learning, artificial intelligence and related research.

Apple went ahead with the blog following criticism that it couldn’t hire the brightest minds in artificial intelligence and machine learning because it wouldn’t let them publish their work.

The inaugural post, titled “Improving the Realism of Synthetic Images”, was published in July. The in-depth article outlines a new method for improving the realism of synthetic images from a simulator using unlabeled real data while preserving the annotation information.