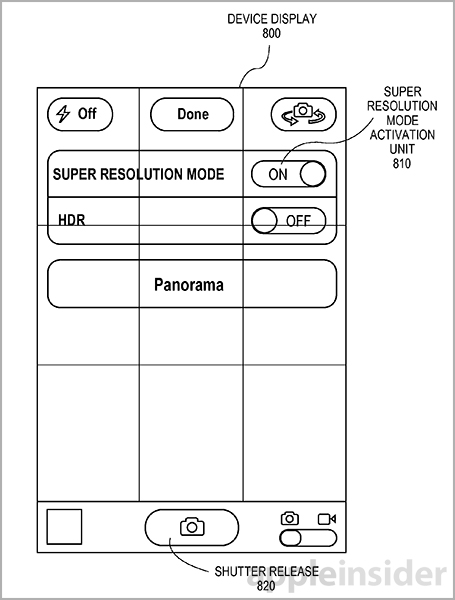

If Apple’s patent filing with the United States Patent & Trademark Office (USPTO) is anything to go by, future iOS devices could appeal further to iPhoneography fans by letting them take very detailed snaps using a technique fancily named ‘super-resolution’ imaging.

According to the filing, this could be achieved by using optical image stabilization – a feature that must be implemented in the camera hardware itself – to capture multiple images in a burst, each at a slightly offset angle.

The software would take it from there and stitch individual image data together to form a single super-resolution image, bypassing the need for more megapixels…

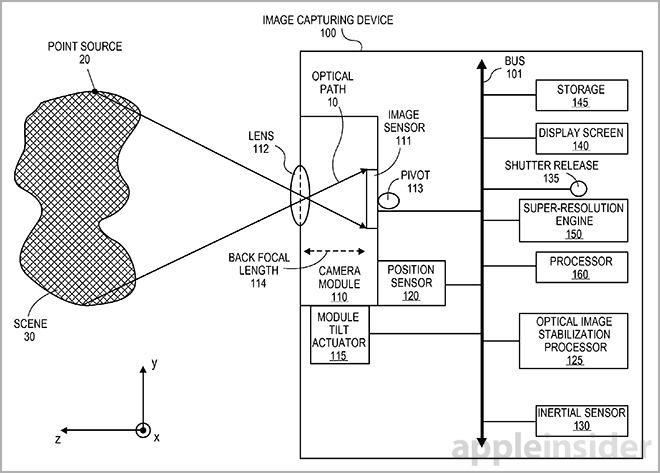

The USPTO patent filing 20140125825, entitled ‘Super-resolution based on optical image stabilization’, outlines a system for creating very detailed images using an image capturing device such as the iPhone.

Optical image stabilization works by using precision-crafted actuators to move the camera sensor, the final element in the optical path. This lets the camera hardware stabilize the image projected on the sensor before the sensor itself converts light into image data.

In one embodiment, an electronic image sensor captures a reference optical sample through an optical path. Thereafter, an optical image stabilization (OIS) processor adjusts the optical path to the electronic image sensor by a known amount.

A second optical sample is then captured along the adjusted optical path, such that the second optical sample is offset from the first optical sample by no more than a sub-pixel offset.
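To make the idea concrete, here is a minimal sketch of how samples taken at known sub-pixel offsets could be combined, assuming an idealized sensor and exact half-pixel shifts. It is not the method from the filing: the function names `sample_scene` and `interleave` and the tiny 4x4 scene are hypothetical, chosen purely for illustration.

```python
# Sketch only: interleave four low-resolution samples, each offset by half a
# sensor pixel, onto a grid with twice the resolution. All names are
# hypothetical and the sensor model is idealized.

def sample_scene(scene, dx, dy):
    """Simulate a low-res capture: read every second scene point, starting at
    offset (dx, dy). One scene point stands for half a sensor pixel."""
    return [row[dx::2] for row in scene[dy::2]]


def interleave(samples, height, width):
    """Place each low-res sample back on the 2x grid at its known offset."""
    out = [[0] * width for _ in range(height)]
    for (dx, dy), img in samples.items():
        for y, row in enumerate(img):
            for x, value in enumerate(row):
                out[2 * y + dy][2 * x + dx] = value
    return out


# A 4x4 "scene" standing in for the detail the lens projects on the sensor.
scene = [[10 * y + x for x in range(4)] for y in range(4)]

# A reference sample plus three samples shifted by a known half-pixel amount.
offsets = [(0, 0), (1, 0), (0, 1), (1, 1)]
samples = {off: sample_scene(scene, *off) for off in offsets}

reference = samples[(0, 0)]            # 2x2: what a single shot records
combined = interleave(samples, 4, 4)   # 4x4: detail from all four shots
```

In this toy setup the four 2x2 samples together recover the full 4x4 scene, which no single shot can do. A real sensor pixel gathers light over its whole area rather than at a point, so an actual implementation would need more sophisticated reconstruction than plain interleaving.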

iOS devices currently rely on software-based image stabilization rather than optical image stabilization, which must be implemented in the camera hardware. Also known as Electronic Image Stabilization, the software approach takes four photos in rapid succession and then combines them to reduce blurring and other artifacts that appear when the device shakes during capture.

As AppleInsider explains:

Physical modes of stabilization usually produce higher quality images compared to software-based solutions. Whereas digital stabilization techniques compensate for shake by pulling from pixels outside of an image’s border or running a photo through complex matching algorithms, OIS physically moves camera components.

While taking the successive image samples, a highly precise actuator tilts the camera module in sub-pixel shifts along the optical path — across the horizon plane or picture plane.

In some embodiments, multiple actuators can be dedicated to shift both pitch and yaw simultaneously. Alternatively, the OIS systems can translate lens elements above the imaging module.
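For a rough sense of how small these movements are, the sketch below estimates the tilt needed to shift the image by half a pixel. It assumes a simple model in which tilting the camera by an angle moves the image of a distant scene by the focal length times the tangent of that angle. The focal length is an assumed, typical phone-camera value and does not come from the filing; the pixel pitch is the iPhone 5s figure quoted in this article.

```python
import math

# Assumed values for illustration only; neither comes from the patent filing.
PIXEL_PITCH_M = 1.5e-6     # iPhone 5s pixel size quoted in this article
FOCAL_LENGTH_M = 4.1e-3    # assumed focal length of a phone camera lens


def tilt_for_shift(shift_pixels, pixel_pitch_m=PIXEL_PITCH_M,
                   focal_length_m=FOCAL_LENGTH_M):
    """Return the tilt, in degrees, that moves the image by `shift_pixels`.

    Uses shift = focal_length * tan(tilt), valid for a distant scene.
    """
    shift_m = shift_pixels * pixel_pitch_m
    return math.degrees(math.atan(shift_m / focal_length_m))


half_pixel_tilt = tilt_for_shift(0.5)
print(f"Tilt for a half-pixel shift: {half_pixel_tilt:.4f} degrees")
```

Under these assumptions a half-pixel shift works out to roughly a hundredth of a degree, which hints at why the actuators need to be so precise.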

It’s worth pointing out that capturing multiple photos in a burst and combining them into a super-resolution photo requires a lot of processing horsepower. Apple has therefore likely envisioned this feature with its in-house designed 64-bit A7 processor in mind.

The A7 has a much improved on-board image processor, a feature originally introduced in the A5 chip. Its power clearly shows in burst mode on the iPhone 5s, which captures ten full-resolution images per second.

Because the iPhone 5 and 5c lack hardware-accelerated burst mode, those handsets can take just a few images per second, at best.

Since the iPhone 4s, Apple has largely ignored the megapixel race. Instead, it has advanced iPhone imaging by improving upon the existing eight-megapixel sensor, adding camera features such as a wider aperture, an enhanced lens subsystem, a more sophisticated CMOS sensor, bigger camera pixels and advanced software-based post-processing.

The vast majority of rumors seem to point to the next iPhone retaining the eight-megapixel sensor. Should the rumors pan out, the iPhone 6’s camera will have a wider f/1.8 aperture and use software-based image stabilization to enhance your snaps.

According to ESM China analyst Sun Chang Xu, the handset will have larger CMOS pixels measuring 1.75 micrometers each to capture more light, just like on the four-year-old iPhone 4.

By comparison, the iPhone 5s’s camera has a pixel size of 1.5 micrometers.
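To put those two figures in perspective, here is a quick back-of-the-envelope calculation of how much more light-collecting area a 1.75-micrometer pixel has than a 1.5-micrometer one. It assumes square pixels whose whole surface gathers light, which is a simplification, and the helper name `light_area_ratio` is made up for this example.

```python
def light_area_ratio(new_pitch_um, old_pitch_um):
    """Ratio of per-pixel light-collecting area, assuming square pixels."""
    return (new_pitch_um / old_pitch_um) ** 2


# Rumored 1.75 micrometer pixels versus the iPhone 5s's 1.5 micrometers.
ratio = light_area_ratio(1.75, 1.5)
print(f"Area per pixel grows by {100 * (ratio - 1):.0f}%")
```

Under that assumption, each pixel would have about 36 percent more area to catch light, all else being equal.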