In this tutorial, we will show you how to transcribe a voice memo into text without using third-party apps or services.

How to transcribe voice memos on iPhone, iPad, and Mac

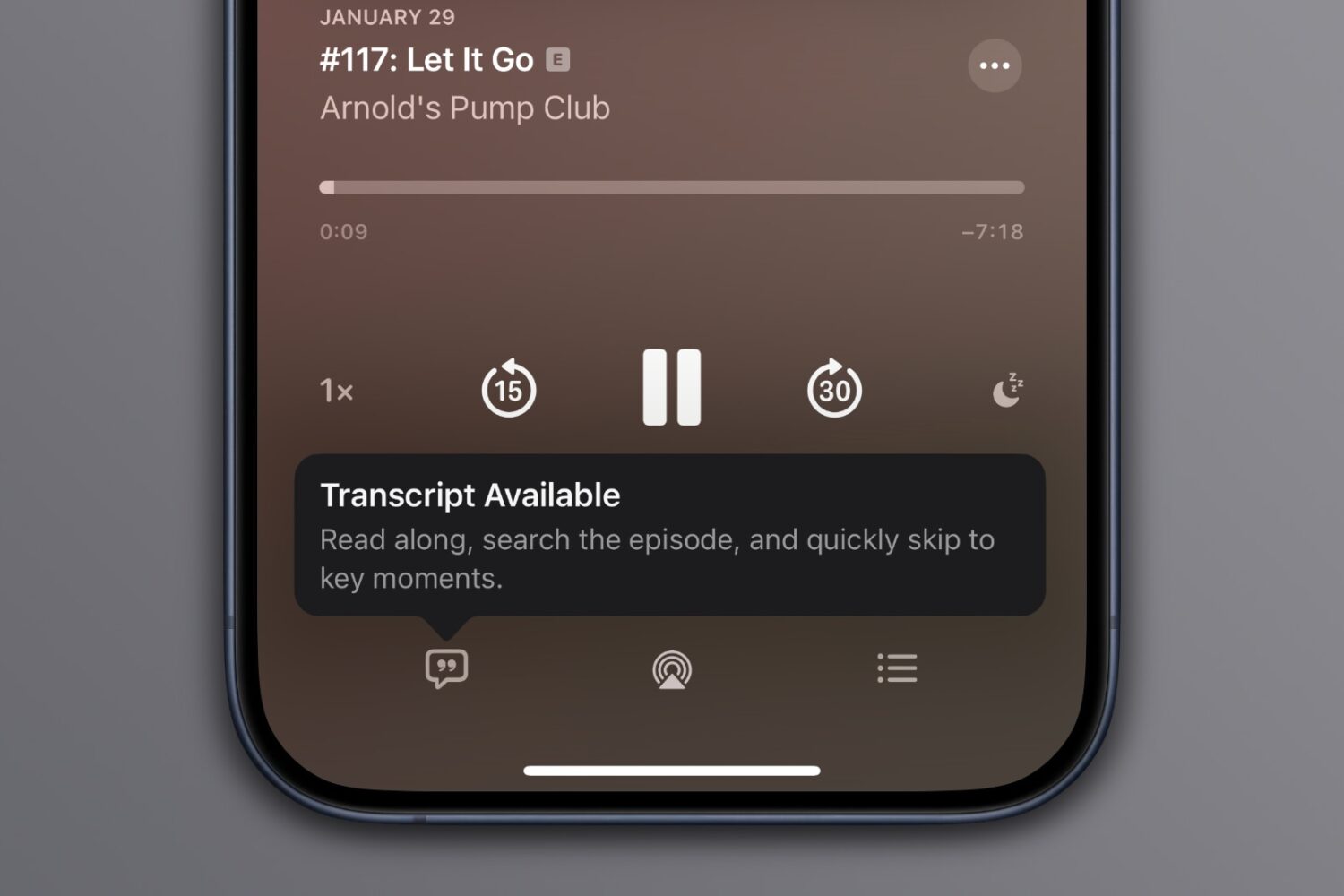

Automatic transcripts of shows in the Apple Podcasts app on iPhone are a new feature in iOS 17.4 that provides an experience similar to time-synced lyrics on Apple Music.

If you’ve ever had to keep working or writing on your Mac when you were unable to type, what did you do?

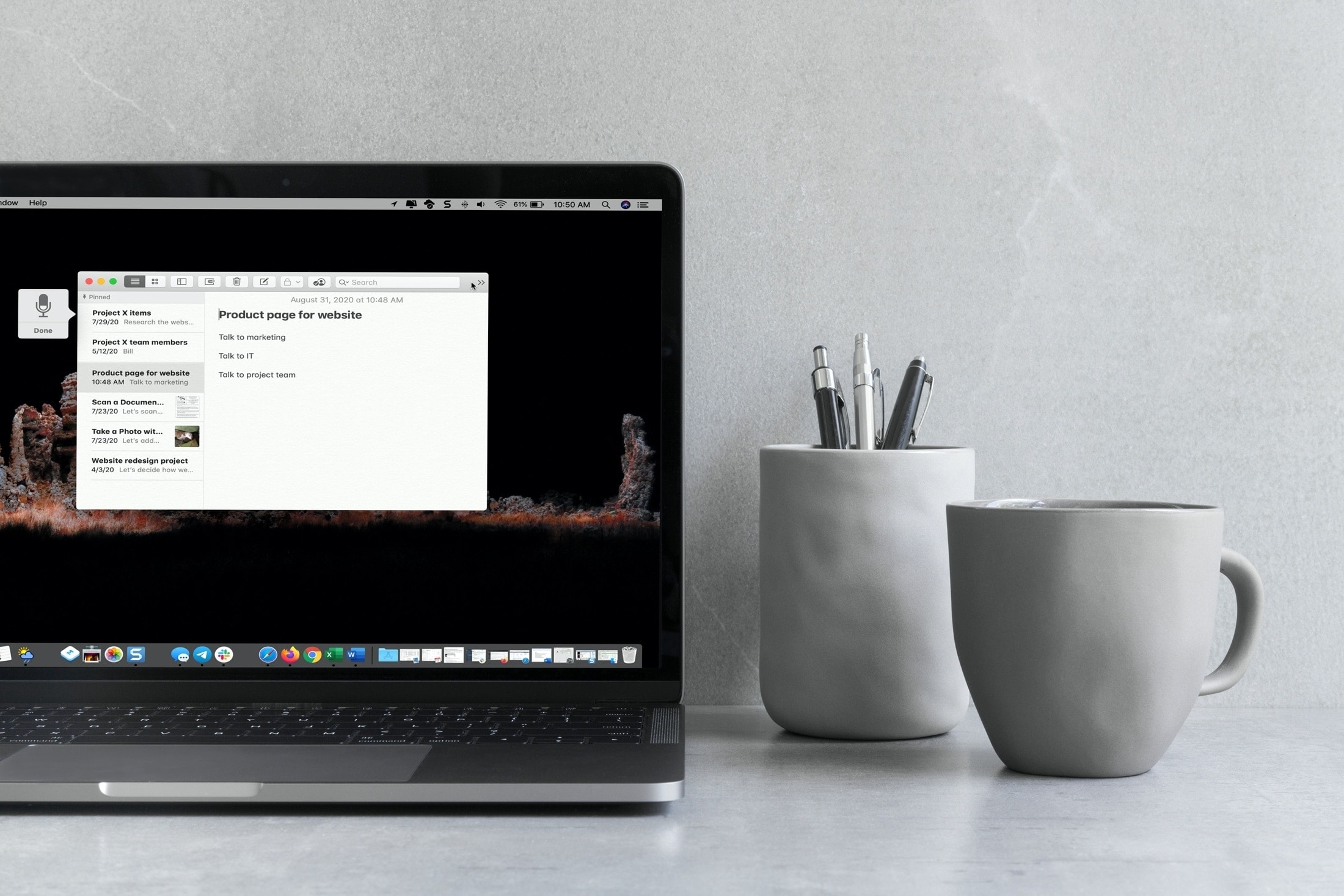

Your Mac offers a nice dictation feature for these times, and it’s easier to use than you probably think.

This guide will help you enable, use, and disable dictation on your Mac. So if you find yourself with an injury preventing you from typing or simply want to give dictation a try, here’s how to use it on Mac.

Sending your friends voice recordings on messenger platforms such as iMessage, WhatsApp or Threema is surely not everyone’s cup of tea, but it’s hard to overlook its rising popularity in certain circles. Be it for faster communication or text weariness among younger people, voice messages are rife in chats today, and that is despite their one clear downside: unlike texts, they are not very discreet, which makes them impractical to listen to in a host of situations.

Understanding the (circumstantial) issues with voice messages, Apple were the first to offer voicemail transcriptions in iOS 10 and now Textify joins the cause to bring a similar service to an even wider audience. The speech recognition app provides spoken word-to-text transcriptions for all your favorite messenger platforms including iMessage, WhatsApp, Threema, and Line. And suffice it to say that it wouldn’t be on iDB if it were not surprisingly powerful at that. Here’s how it works.

Just how exactly does Siri learn a new language? In today's interview with Reuters, Apple's speech team head Alex Acero offered a behind-the-scenes look at how Siri is being taught new languages, a process that involves script-writing, capturing voices in multiple accents and dialects and using machine learning and artificial intelligence to build and evolve new language models over time. The system requires a team of people tasked with reading passages of manually transcribed text.

Before actually updating Siri, Apple first rolls out Dictation support for a new language.

Siri currently speaks 21 languages in 36 countries. By comparison, Microsoft's Cortana supports eight languages tailored for thirteen countries, Google Assistant speaks four languages while Amazon's Alexa works only in English and German.

OS X includes a nifty Dictation feature which allows you to control your Mac and apps with your voice. You can use “speakable items”, basically a set of spoken commands, to open apps, choose menu items, email contacts and convert whole spoken sentences to text, wherever you can type text.

This is much like iOS’s Dictation feature as both iOS and OS X use the same Nuance-powered technology that turns speech to text. iOS devices have limited computing power so the Dictation feature on the iPhone, iPod touch and iPad requires network connectivity in iOS 7 (iOS 8 supports streaming voice recognition and 22 new languages).

On the Mac, computing resources like CPU power, battery life and RAM are less of a concern than on mobile. Therefore, OS X Mavericks provides a new Enhanced Dictation feature which converts your words to text without utilizing Apple’s servers.

In other words, Enhanced Dictation lets you dictate without an active Internet connection. Because voice recognition processing runs locally on your Mac, text appears instantly as you speak. That is, continuous, streaming dictation with live feedback is made possible.

In this tutorial, I’m going to show you how to turn on Enhanced Dictation in OS X and take advantage of speech-to-text, even when you're off the grid...