In the past few years, nearly every new phone unveiling seems to have been accompanied by a new name in specification bragging rights. If you follow smartphone announcements, or have even glanced at a few launch headlines in passing, there is a fair chance you have heard the name DxOMark.

Smartphone makers often tout a numerical score as the best ever given by DxOMark, but what do these numbers mean? For the sake of this article, we will talk specifically about their process for testing mobile cameras.

Real metrics, subjective weight

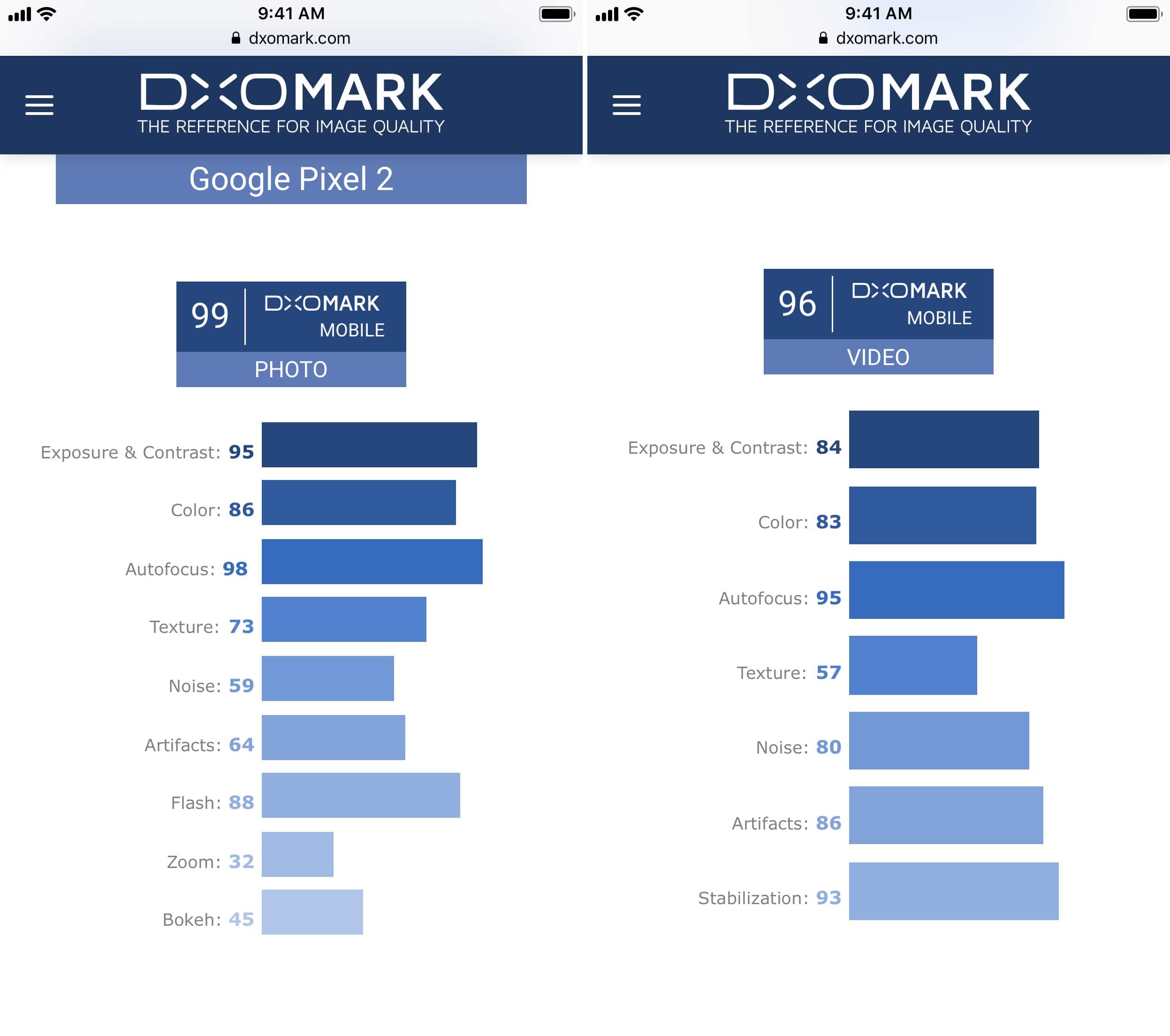

For their mobile scores, DxOMark generates an overall score from a variety of sub-scores. These sub-scores for still photos consist of exposure and contrast, color, autofocus, texture, noise, artifacts, flash, zoom, and bokeh. For video, the sub-scores are exposure and contrast, color, autofocus, texture, noise, artifacts, and stabilization.

Most of these sub-scores are self-explanatory, but some may need a little explanation. The artifacts sub-score, for example, covers softness, distortion, vignetting, chromatic aberration, ringing, flare, ghosting, aliasing, and more.

You may ask, what do all of these sub-scores mean? DxOMark tests mobile cameras in their default mode (not RAW) using both objective measurements and subjective judgment, which DxOMark calls perceptual evaluation. Photos are taken in a testing lab equipped with what DxOMark calls test targets, lighting systems, light-boxes, light meters, telemeters, spectrometers, and more. Additionally, more than fifty tests are completed using “real-life” indoor and outdoor scenes.

While we do not have the full details on how the results of these tests are translated into numerical scores, there are a few important points to note about them. In a typical score breakdown, the total score is clearly not a simple average of the sub-scores. This is because DxOMark weights the sub-scores according to what it believes to be the most important factors in camera quality. While the folks at DxOMark are certainly experts in this field, deciding which factors matter most involves a level of subjectivity that must be noted and considered.
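To make the difference between a simple average and a weighted score concrete, here is a minimal sketch. The sub-score values and weights are invented purely for illustration; they are not DxOMark's numbers, and since the real formula is not public, a plain weighted average is only an assumption about how such weighting could work.

```python
# Hypothetical sub-scores and weights, made up for illustration only.
# They are NOT DxOMark's real numbers, and the weighted average below is
# an assumed stand-in for a formula that DxOMark has not published.
sub_scores = {"exposure": 90, "color": 85, "autofocus": 95, "texture": 70, "noise": 60}
weights = {"exposure": 3, "color": 2, "autofocus": 2, "texture": 1, "noise": 1}


def simple_average(scores):
    """Every sub-score counts equally."""
    return sum(scores.values()) / len(scores)


def weighted_average(scores, weights):
    """Each sub-score counts in proportion to its weight."""
    total_weight = sum(weights[name] for name in scores)
    weighted_sum = sum(scores[name] * weights[name] for name in scores)
    return weighted_sum / total_weight


if __name__ == "__main__":
    print(f"simple average:   {simple_average(sub_scores):.1f}")
    print(f"weighted average: {weighted_average(sub_scores, weights):.1f}")
```

With these made-up numbers, the weighted result lands above the simple average because the heavily weighted categories happen to be the strong ones. Pick different weights and the same camera gets a different overall score, which is exactly where the subjectivity comes in.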

It is also important to note that DxOMark offers to work with camera manufacturers, for a fee, to help improve their image quality. This does not necessarily mean corruption is inevitable, but it must be considered that these manufacturers may be more inclined to boost their DxOMark score than to improve image quality according to their own preferences.

This is not necessarily a bad thing. The folks at DxOMark are professionals, and their expertise and experience should not be ignored, but image quality consists of so many variables that the range of possible personal opinions and preferences is practically endless. More simply put, there are bad photos and there are excellent photos, but there is no such thing as a perfect photo.

Recap

All in all, DxOMark scores are a generally helpful tool for monitoring the quality of smartphone cameras. However, I urge you to take these scores with a grain of salt and remember that while a great deal of image quality can be accounted for quantitatively, there are also multiple qualitative factors that must be considered.

If you feel insulted or disappointed that your favorite phone scored lower than another similar phone, remember that these scores are not set in stone, and that your personal preference is more valuable than a score decided by imperfect procedures.

So, next time you see a high DxOMark score for the next hot new phone, remember that while that camera is almost certainly an excellent camera, a high score is no guarantee of superlative camera quality.

What is your favorite smartphone camera? Let us know in the comments below.